California's AI Bill Impact: Building a Regulatory Moat for Big Tech

California’s Senate Bill 1047, the Safe and Secure Innovation for Frontier Artificial Intelligence Models Act, is one of the most prescriptive AI safety regulations proposed in the United States. The bill establishes a specific framework for managing risks from advanced AI and has ignited a fierce debate across the technology sector. The central conflict is not just about safety but about market dynamics: will the bill’s high compliance costs create a regulatory moat that entrenches Big Tech incumbents, or can its public compute provisions level the playing field? Answering that question calls for a data-driven examination of one of the most consequential policy debates in modern technology.

Key Points

• SB 1047 mandates safety certifications and a “kill switch” for developers of AI models trained with computing power exceeding 10^26 FLOPs.

• The bill’s focus on a hard computational threshold for developers contrasts sharply with the EU AI Act’s risk-based approach, which regulates AI based on its specific application or use case.

• Critics, including the Electronic Frontier Foundation, argue the bill’s high compliance costs will stifle innovation and harm open-source AI, while the proposed “CalCompute” public cloud aims to mitigate this by providing subsidized compute resources.

• The legislation establishes a new state body, the Frontier Model Division, to oversee compliance and shifts liability for harm caused by a model onto its developer.

Drawing Lines in Silicon: The 10^26 FLOPs Threshold

At its core, SB 1047 introduces a precise technical trigger for regulation. The bill targets developers of “covered AI models,” defined as systems trained using more than 10^26 integer or floating-point operations (FLOPs). This threshold, based on analysis from research organization Epoch, is designed to capture frontier models like Google’s Gemini Ultra and OpenAI’s GPT-4 while exempting smaller-scale projects.
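To give a sense of scale for the 10^26 threshold, here is a minimal Python sketch that estimates training compute and compares it to that figure. It assumes the widely used rule of thumb that training a dense model costs roughly 6 × parameters × training tokens in operations. That heuristic is not part of the bill, and the function names and model sizes below are hypothetical, chosen only for illustration.

```python
# Threshold cited in the article for a "covered" model under SB 1047.
COVERED_MODEL_THRESHOLD_FLOPS = 1e26


def estimate_training_flops(n_parameters: float, n_tokens: float) -> float:
    """Rough training-compute estimate using the 6 * N * D rule of thumb.

    Assumption: this approximation comes from the scaling-law literature,
    not from the bill, and ignores architecture-specific details.
    """
    return 6.0 * n_parameters * n_tokens


def exceeds_threshold(
    n_parameters: float,
    n_tokens: float,
    threshold: float = COVERED_MODEL_THRESHOLD_FLOPS,
) -> bool:
    """Return True if the estimated training compute is above the threshold."""
    return estimate_training_flops(n_parameters, n_tokens) > threshold


if __name__ == "__main__":
    # Hypothetical model configurations, not real systems.
    examples = {
        "hypothetical 7B params, 2T tokens": (7e9, 2e12),
        "hypothetical 1T params, 20T tokens": (1e12, 20e12),
    }
    for label, (n_params, n_tokens) in examples.items():
        flops = estimate_training_flops(n_params, n_tokens)
        covered = exceeds_threshold(n_params, n_tokens)
        print(f"{label}: ~{flops:.1e} operations, above threshold: {covered}")
```

Under this estimate, the smaller hypothetical model lands several orders of magnitude below the line, while the larger one sits just above it. That gap illustrates why the threshold is expected to reach only the largest training runs.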

Before deploying a covered model, developers must certify to a new state agency, the Frontier Model Division, that the model lacks “hazardous capabilities.” These are defined as abilities that pose unreasonable risks, such as enabling large-scale cyberattacks or the creation of chemical or biological weapons. A central and controversial mandate is the “kill switch,” a requirement for developers to build the capability to “promptly and completely” shut down their model if its safety can no longer be assured, according to the official bill text.

California’s Solo Dance in the Global Arena

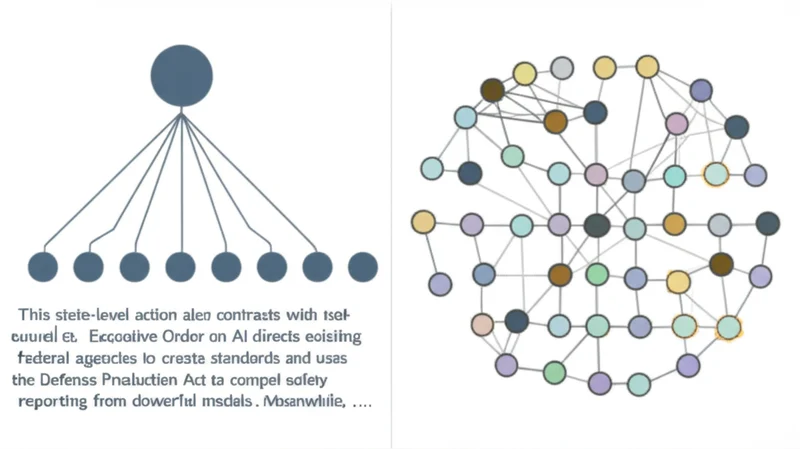

SB 1047’s approach marks a notable divergence from other major regulatory frameworks. While the EU AI Act employs a risk-based pyramid that categorizes AI systems by their use case (e.g., hiring, law enforcement), SB 1047 focuses on the raw computational power used to train the model itself. This makes the developer, not the deployer, the primary subject of regulation.

This state-level action also contrasts with federal efforts. The U.S. Executive Order on AI directs existing federal agencies to create standards and uses the Defense Production Act to compel safety reporting from developers of powerful models. Meanwhile, the NIST AI Risk Management Framework offers a voluntary set of best practices rather than a legal mandate. Proponents view California’s bill as a nimble response to a fast-moving field, while critics express concern over a potential patchwork of conflicting state laws.

Fortress Walls or Innovation Bridge?

The most contentious debate surrounding SB 1047 is its potential economic impact. A coalition of open-source advocates, industry groups, and AI researchers argues that the bill’s high costs and legal risks will create a “regulatory moat.” The Electronic Frontier Foundation (EFF), for example, states the bill would establish a “permission-to-innovate system” that only benefits large, well-resourced incumbents. AI expert Andrew Ng echoed this, arguing in his newsletter, The Batch, that the bill’s structure could lead to regulatory capture by Big Tech and stifle competition.

To counter this, the bill includes a provision for “CalCompute,” a public AI research cloud. This initiative aims to provide startups and academic researchers with subsidized access to the large-scale computing power necessary for frontier model development. The success of this provision in fostering competition hinges entirely on its scale, funding, and accessibility.
