China's Quantum Breakthrough Powers AI Model Fine-Tuning

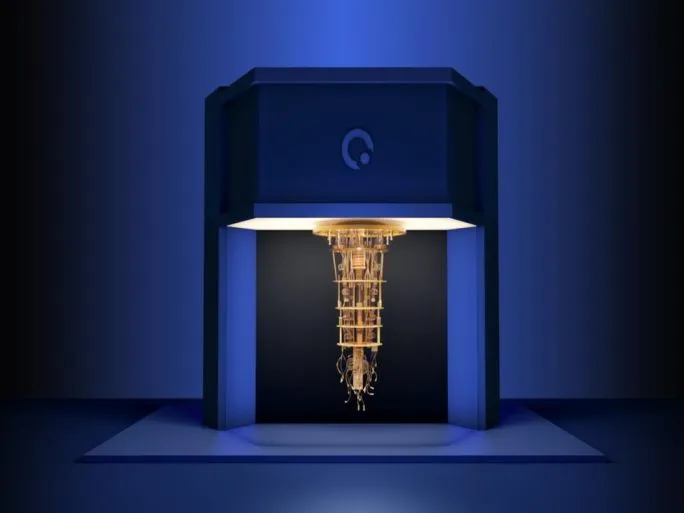

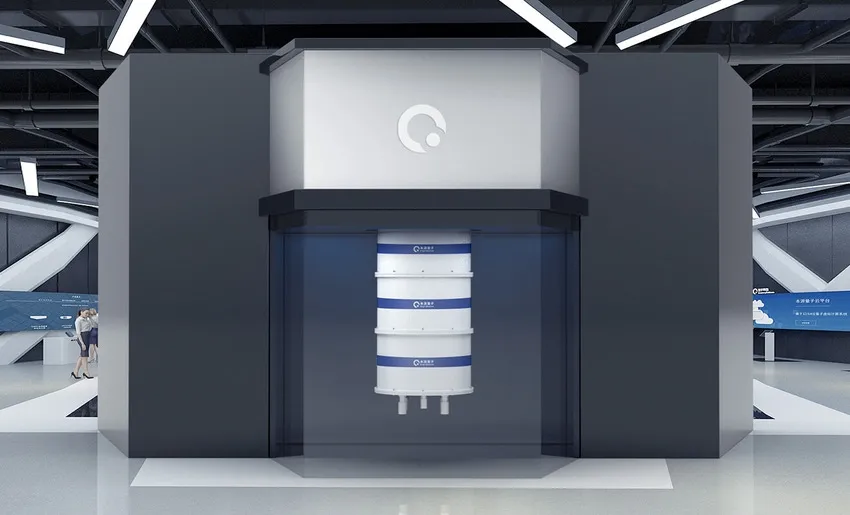

At the core of China’s quantum computing advancement stands the Origin Wukong system, a superconducting quantum computer named after the mythical Monkey King, who is renowned for his transformative abilities. It runs on a 72-qubit superconducting chip, and its reported specifications list 72 working qubits and 126 coupler qubits (198 in total), marking a significant milestone in China’s journey toward quantum self-sufficiency.

Since its launch on January 6, 2024, the Origin Wukong system has been accessible via cloud services and has already demonstrated remarkable productivity. The system has reportedly completed over 350,000 quantum computing tasks for users across various industries from 139 different countries, facilitated by its global cloud accessibility. This widespread utilization underscores its emergence as a functioning platform for quantum research and application development.

The specific task highlighted in this groundbreaking experiment – fine-tuning – represents a critical process in AI development. It involves adapting a large, pre-trained AI model (in this case, one with a billion parameters) for specific applications by retraining it on new, task-relevant data. Traditionally, this process demands enormous computational resources, especially for models of this scale. The Chinese research team claims to have achieved this fine-tuning far more efficiently by leveraging the unique capabilities of the Origin Wukong quantum computer.

Inside the Quantum-Assisted Fine-Tuning: The QWTHN Method

To achieve these remarkable results, researchers collaborated across Origin Quantum Computing Technology Co. and several research institutions. They developed and employed a novel technique called the Quantum Weighted Tensor Hybrid Network (QWTHN), detailed in an arXiv preprint. This approach represents a sophisticated hybrid methodology that intelligently combines the strengths of both classical and quantum computing systems. Rather than attempting to run the entire massive AI model on quantum hardware—something well beyond current capabilities—it strategically assigns specific, computationally intensive sub-tasks to the quantum processor while classical systems handle the remainder of the workload.
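As a rough illustration of that division of labor, the sketch below simulates a tiny two-qubit circuit in NumPy and uses its measurement probabilities to gate the four components of a rank-4 adapter that sits beside a frozen weight matrix. This is not the QWTHN algorithm from the preprint: the circuit, the gating scheme, and every name (`quantum_subroutine`, `hybrid_forward`, `thetas`) are assumptions chosen for illustration, and the "quantum" step is simulated classically.

```python
import numpy as np

# CNOT with the first qubit as control (basis order |00>, |01>, |10>, |11>).
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)

def ry(theta):
    """Single-qubit RY rotation matrix."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def quantum_subroutine(thetas):
    """Hypothetical quantum sub-task, simulated classically.

    Applies RY(thetas[0]) and RY(thetas[1]) to two qubits starting in |00>,
    then a CNOT, and returns the four outcome probabilities.
    """
    state = np.zeros(4)
    state[0] = 1.0
    state = np.kron(ry(thetas[0]), ry(thetas[1])) @ state
    state = CNOT @ state
    return np.abs(state) ** 2

def hybrid_forward(x, frozen_weight, A, B, thetas):
    """Classical frozen layer plus a rank-4 adapter gated by circuit outputs."""
    gates = quantum_subroutine(thetas)   # shape (4,), sums to 1
    low_rank = (x @ A.T) * gates         # scale each of the 4 rank components
    return x @ frozen_weight.T + low_rank @ B.T
```

In a real hybrid setup the call to `quantum_subroutine` would be dispatched to quantum hardware, while the matrix products stay on classical accelerators. Only `thetas`, `A`, and `B` would be trained.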

The results presented by the Anhui Quantum Computing Engineering Research Centre suggest remarkable improvements in both efficiency and performance. These gains were particularly evident when compared to a standard classical method (identified as LoRA Rank 4). The key performance metrics reported are impressive:

- Training Effectiveness: Despite using substantially fewer parameters, the team reported an 8.4% increase in overall training effectiveness, suggesting the quantum-assisted method learned more efficiently.

- Accuracy Improvement: On a specific mathematical reasoning task used for evaluation, the model’s accuracy reportedly increased from 68% (using the classical baseline) to 82% after fine-tuning with the QWTHN method on Origin Wukong.

- Training Loss Reduction: Supporting the efficiency claims, researchers also noted up to a 15% reduction in training loss on certain datasets, indicating a more optimized learning process.
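To put the accuracy figures in perspective, the short calculation below expresses the reported jump from 68% to 82% in three ways: percentage points, relative gain, and reduction in error rate. Only the two accuracy values come from the report; the variable names are illustrative.

```python
baseline_acc = 0.68   # reported accuracy with the classical baseline
qwthn_acc = 0.82      # reported accuracy after QWTHN fine-tuning

# Absolute gain in percentage points (about 14).
point_gain = (qwthn_acc - baseline_acc) * 100

# Gain relative to the baseline accuracy (about 20.6%).
relative_gain = (qwthn_acc - baseline_acc) / baseline_acc

# Share of the baseline's errors that were eliminated (about 43.75%).
baseline_err = 1 - baseline_acc
qwthn_err = 1 - qwthn_acc
error_reduction = (baseline_err - qwthn_err) / baseline_err

print(f"{point_gain:.1f} points, {relative_gain:.1%} relative, "
      f"{error_reduction:.2%} fewer errors")
```

Which framing is quoted matters: the same result can be described as a 14-point gain or as eliminating nearly half of the baseline's mistakes.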

Achieving such a dramatic reduction in parameters while simultaneously delivering significant performance improvements is particularly noteworthy. Typically, parameter-efficient techniques involve trade-offs, where efficiency gains come at the cost of slightly reduced performance compared to full fine-tuning. If independently verified, the QWTHN method’s apparent ability to avoid this trade-off could represent a genuinely innovative approach to adapting AI models.

“It’s like equipping a classical model with a quantum engine, allowing the two to work in synergy,” explained Dou Menghan, vice president of Origin Quantum Computing Technology Co. This analogy effectively captures the hybrid approach. According to the researchers, this synergy directly addresses the growing ‘computing power anxiety’ associated with the ever-increasing size of large AI models, offering a potential pathway to make powerful AI more manageable and accessible.

The Challenge of AI Scale and the Promise of Quantum PEFT

This research emerges against the backdrop of the enormous scale of modern AI systems. Foundation models now routinely contain billions, sometimes trillions, of parameters. While incredibly powerful, their sheer size makes adaptation through full fine-tuning a formidable challenge, demanding substantial computational resources, processing time, and memory. These resource constraints place full fine-tuning beyond the reach of many organizations and researchers, slowing the deployment of AI tailored for specific applications and industries.

Parameter-Efficient Fine-Tuning (PEFT) techniques emerged as a classical solution to this problem. The fundamental concept is elegant: rather than retraining the entire massive model, PEFT methods freeze most of the pre-trained model’s weights and adjust only a small, strategically selected set of parameters. This approach dramatically reduces computational requirements, lowers memory demands (a critical bottleneck), decreases storage needs for adapted models, and can help prevent “catastrophic forgetting,” where models lose general knowledge while learning specific tasks. LoRA, based on low-rank matrix factorization, has become a widely adopted and effective classical PEFT strategy.
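The idea behind LoRA can be shown in a few lines of NumPy. The sketch below freezes a weight matrix and adds a trainable low-rank update scaled by `alpha / rank`, then counts how many parameters each approach would train. The layer size (4096 x 4096) and the class and function names are assumptions for illustration. Rank 4 matches the baseline named in the report, but this is a minimal sketch, not the configuration the researchers used.

```python
import numpy as np

def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters: full fine-tuning vs. a rank-`rank` LoRA adapter."""
    full = d_out * d_in
    lora = rank * (d_in + d_out)
    return full, lora

class LoRALinear:
    """Frozen linear layer plus a trainable low-rank update B @ A."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = np.random.default_rng(seed)
        d_out, d_in = weight.shape
        self.weight = weight                              # frozen, never updated
        self.A = rng.normal(0.0, 0.01, size=(rank, d_in))  # trainable
        self.B = np.zeros((d_out, rank))                  # trainable, starts at zero
        self.scale = alpha / rank

    def forward(self, x):
        base = x @ self.weight.T
        update = (x @ self.A.T) @ self.B.T
        return base + self.scale * update

full, lora = lora_param_counts(4096, 4096, rank=4)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.4%}")
```

Because `B` starts at zero, the adapted layer initially reproduces the frozen model exactly, and training only moves the small `A` and `B` matrices.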

However, classical methods like LoRA have inherent limitations. Their reliance on the low-rank hypothesis—the assumption that weight updates have a low “intrinsic rank”—may not fully capture the complexity required for all adaptation tasks. This is where quantum computing enters as a potential enhancer. Researchers are exploring how quantum principles such as superposition and entanglement, when incorporated into hybrid quantum-classical algorithms like QWTHN, might offer more powerful or efficient ways to represent necessary changes during fine-tuning. The goal is to transcend classical PEFT limitations, achieving even greater parameter reduction while potentially delivering superior performance—an exciting, though still evolving, possibility.
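The low-rank hypothesis can be tested numerically. The sketch below uses a truncated SVD, which gives the best rank-r approximation in the Frobenius norm, to compare two synthetic weight updates: one that truly has rank 4 and one that is dense random noise. Both matrices are made-up examples rather than updates from a real model, and the function names are illustrative.

```python
import numpy as np

def best_rank_r(delta, r):
    """Best rank-r approximation of `delta` via truncated SVD."""
    u, s, vt = np.linalg.svd(delta, full_matrices=False)
    return (u[:, :r] * s[:r]) @ vt[:r, :]

def relative_error(delta, r):
    """Fraction of the update (Frobenius norm) that rank r fails to capture."""
    approx = best_rank_r(delta, r)
    return np.linalg.norm(delta - approx) / np.linalg.norm(delta)

rng = np.random.default_rng(0)
low_rank_update = rng.normal(size=(64, 4)) @ rng.normal(size=(4, 64))
dense_update = rng.normal(size=(64, 64))

err_low = relative_error(low_rank_update, 4)
err_dense = relative_error(dense_update, 4)
print(f"rank-4 update: {err_low:.2e}   dense update: {err_dense:.2f}")
```

When the required update really is low-rank, a rank-4 adapter loses essentially nothing. When it is not, most of the update falls outside what the adapter can represent, which is the gap that richer parameterizations aim to close.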

A Quantum Leap or Measured Steps? Scrutiny in the NISQ Era

While headlines proclaim a ‘world first’ and the reported results are compelling, characterizing this experiment as a definitive, transformative breakthrough requires careful consideration and healthy scientific skepticism. We currently operate in the Noisy Intermediate-Scale Quantum (NISQ) era of quantum computing, which imposes fundamental constraints on what today’s quantum processors, including Origin Wukong, can reliably accomplish.

NISQ devices face several significant challenges: inherent noise (errors that corrupt calculations), limited qubit counts, short coherence times (restricting the duration and complexity of quantum computations), and difficulties in efficiently transferring large classical datasets into quantum systems and extracting results. These limitations make demonstrating clear, practical advantages over highly optimized classical algorithms for real-world problems extremely challenging.
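A common back-of-the-envelope model shows why noise limits circuit size: if each gate succeeds independently with fidelity f, a circuit of n gates succeeds with probability of roughly f to the power n. The fidelities and gate counts below are illustrative assumptions, not measured Origin Wukong figures, and real devices have correlated and gate-dependent errors that this model ignores.

```python
import math

def estimated_circuit_fidelity(gate_fidelity, n_gates):
    """Rough circuit fidelity assuming independent, identical gate errors."""
    return gate_fidelity ** n_gates

def max_gates_for_target(gate_fidelity, target):
    """Largest gate count that keeps the estimated fidelity at or above `target`."""
    return math.floor(math.log(target) / math.log(gate_fidelity))

for f in (0.99, 0.995, 0.999):
    print(f"f={f}: 100 gates -> {estimated_circuit_fidelity(f, 100):.3f}, "
          f"budget for 50% fidelity -> {max_gates_for_target(f, 0.5)} gates")
```

Under this model, even 99% per-gate fidelity leaves room for only a few dozen gates before the output is more noise than signal, which is why NISQ-era hybrid methods keep their quantum circuits small.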

The concept of “quantum advantage”—where a quantum computer definitively solves a useful problem faster, more efficiently, or better than the best possible classical computer using optimal methods—remains largely aspirational. This is particularly true for quantum machine learning applications with classical data, where the practical implementation of QML often involves substantial overheads. While the QWTHN method reportedly outperformed a specific LoRA baseline (Rank 4), establishing true quantum advantage requires comparison against the most advanced classical PEFT techniques—a rapidly evolving field. Many promising quantum machine learning algorithms have struggled to demonstrate decisive advantages over leading classical approaches when evaluated under realistic conditions.

Therefore, independent replication and rigorous verification of the Origin Wukong experiment’s results are essential. Comprehensive benchmarking is needed to understand precisely where the reported gains originate. Do the improvements primarily stem from the clever design of the hybrid QWTHN algorithm itself (which might deliver benefits even when implemented classically on powerful hardware)? Or do they genuinely arise from leveraging the quantum properties of the Wukong hardware in ways that classical systems cannot efficiently replicate? Understanding the exact nature, scale, and error-resistance of the quantum computations performed, along with fair comparisons to a broader spectrum of cutting-edge classical PEFT alternatives, is crucial before declaring a genuine quantum breakthrough for AI fine-tuning.

Origin Quantum, National Ambitions, and the Global Race

This development must be viewed within its broader context. Origin Quantum, the company behind the Wukong computer and a key participant in the experiment, operates as part of China’s strategic technological ecosystem. The company emerged from the Key Laboratory of Quantum Information at the Chinese Academy of Sciences (CAS) and the University of Science and Technology of China (USTC). Origin Quantum plays a significant role in China’s national strategy to achieve leadership in critical technologies like quantum computing. Backed by substantial state-linked investment, including a Series B funding round reportedly between $146 million and $148 million involving investors such as CASVC Investment and CICC Jia Cheng, its progress generates considerable attention and aligns with China’s long-term objectives for technological self-reliance and advancement.

Experiments like this serve dual purposes: they represent scientific investigations while also demonstrating national engineering capabilities and progress toward ambitious technological goals. Chen Zhaoyun, a researcher at the Institute of Artificial Intelligence (part of Hefei Comprehensive National Science Center), emphasized the work’s significance, stating that it marks the first practical application of quantum computing in large model tasks, demonstrating that current hardware can support such complex operations.

Although the global Quantum AI market was valued at a relatively modest USD 240-280 million in 2023/24, its projected high compound annual growth rate (CAGR), estimated between 33% and 37.5% through 2032, indicates substantial growth potential. This rapid expansion reflects increasing recognition of quantum AI’s transformative capabilities across industries.
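For a sense of what those growth rates imply, the sketch below compounds the quoted figures forward. It assumes 2024 as the base year and eight years of growth to 2032. The article gives the base only as "2023/24", so the horizon is an assumption, and the outputs are arithmetic illustrations rather than forecasts.

```python
def project_market(base_musd, cagr, years):
    """Compound a starting value (in USD millions) at a constant annual rate."""
    return base_musd * (1 + cagr) ** years

YEARS = 8  # assumed horizon: 2024 -> 2032

low = project_market(240, 0.33, YEARS)     # low base, low CAGR
high = project_market(280, 0.375, YEARS)   # high base, high CAGR
print(f"Implied 2032 market: USD {low:,.0f}M to {high:,.0f}M")
```

Even at the high end, the implied 2032 market is a few billion dollars, so the quoted growth rates describe fast expansion from a small base.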

The integration of quantum computing with AI represents a significant evolution in computational approaches. While classical computing relies on binary bits (0s and 1s), quantum computing uses quantum bits, or qubits. A qubit can occupy a superposition of 0 and 1, and entanglement correlates multiple qubits in ways that have no classical counterpart. For certain problems, such as integer factoring with Shor’s algorithm, known quantum algorithms are exponentially faster than the best known classical ones. For most machine learning workloads, however, a comparable speedup has yet to be demonstrated.
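Superposition and entanglement can be made concrete with a two-qubit state-vector simulation. The NumPy sketch below applies a Hadamard gate to the first qubit and then a CNOT, producing a Bell state in which the two qubits are always measured with matching values. It is a classical simulation for illustration and does not reflect how Origin Wukong is programmed.

```python
import numpy as np

# Hadamard gate: puts a single qubit into an equal superposition.
H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)
I2 = np.eye(2)

# CNOT with the first qubit as control (basis order |00>, |01>, |10>, |11>).
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)

state = np.zeros(4)
state[0] = 1.0                     # start in |00>
state = np.kron(H, I2) @ state     # superposition on the first qubit
state = CNOT @ state               # entangle the two qubits

probs = np.abs(state) ** 2
for label, p in zip(["00", "01", "10", "11"], probs):
    print(f"P({label}) = {p:.2f}")
```

The outcomes 00 and 11 each occur with probability 0.5, while 01 and 10 never occur: neither qubit has a definite value on its own, yet the two always agree.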

Applications Across Industries

The potential applications of quantum AI span numerous sectors:

- Finance: Quantum AI could revolutionize portfolio optimization, risk assessment, and fraud detection through superior pattern recognition capabilities.

- Healthcare: Drug discovery processes may be dramatically accelerated as quantum AI systems model molecular interactions with unprecedented accuracy.

- Logistics: Complex optimization problems like route planning and supply chain management could benefit from quantum optimization algorithms designed to search very large solution spaces, although a practical advantage over classical solvers has yet to be shown.

- Materials Science: Quantum AI could facilitate the discovery of new materials with specific properties by simulating atomic interactions more effectively than classical systems.

Despite these promising developments, significant challenges remain. Current quantum computers are still limited by factors such as qubit stability, error rates, and the need for extreme cooling conditions. Additionally, developing algorithms that can effectively leverage quantum advantages requires specialized expertise that remains scarce in the industry.

As quantum hardware continues to advance and more organizations explore potential applications, the synergy between quantum computing and artificial intelligence is likely to yield increasingly practical solutions to complex problems that have long resisted classical computational approaches.
