Google MLE-STAR: An AI Agent to Automate Complex MLOps

Google AI today announced the release of MLE-STAR, a state-of-the-art machine learning engineering agent designed to automate complex AI development and deployment tasks. The announcement marks a significant shift in the MLOps landscape, moving beyond the established paradigm of integrated toolchains toward autonomous AI workers. Grounded in years of Google’s foundational research in code generation and automated ML, MLE-STAR aims to address persistent bottlenecks in operationalizing AI models. It builds directly on the company’s prior work, including the reasoning capabilities demonstrated in AlphaCode 2, and on self-correction mechanisms explored in recent research, yielding a specialized agent for the entire machine learning lifecycle.

Key Points

• Google’s release of MLE-STAR introduces an autonomous agent for machine learning engineering, designed to automate tasks from data pipeline management to model deployment and monitoring.

• The agent’s technical foundation combines proven Google research, notably the advanced code generation of AlphaCode 2, with self-correction principles from frameworks like Self-RAG.

• This development addresses the “hidden technical debt” in ML systems, a challenge first detailed in a seminal 2015 Google paper that remains a primary driver of MLOps complexity.

• MLE-STAR’s agent-based approach presents a new model for MLOps, contrasting with existing “toolbox” platforms from competitors like Databricks and Amazon SageMaker.

Automating Away the ML Debt Burden

The introduction of an agent like MLE-STAR is a direct response to long-standing industry challenges. The need for advanced automation was famously articulated in Google’s 2015 paper “Hidden Technical Debt in Machine Learning Systems,” which showed that the core ML code is only a small component of a real-world system. The surrounding infrastructure for data, verification, and serving creates immense complexity, the problem MLOps aims to solve. This challenge led to early MLOps frameworks such as Google’s own TensorFlow Extended (TFX), designed to standardize and automate these pipelines.

This complexity has fueled a massive market for automation, a trend reflected in the growth of AutoML, a direct precursor to MLE-STAR’s capabilities. The AutoML market is projected to grow from approximately $1.5 billion in 2023 to over $21 billion by 2030, according to analysis by Fortune Business Insights. This surge reflects a broader industry shift, with major analysts like Gartner identifying MLOps and AI-augmented development as key strategic technologies. The push for automation is also driven by a critical talent gap; a 2023 McKinsey report on the state of AI identifies the scarcity of specialized talent as a top organizational challenge. MLE-STAR is positioned to address this economic imperative by amplifying engineer productivity and automating routine MLOps tasks.
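To put the forecast in perspective, the two figures quoted above imply a compound annual growth rate. The short calculation below is only arithmetic on the cited numbers ($1.5 billion in 2023, $21 billion in 2030, so seven years); because the forecast is "over $21 billion" and the starting point is approximate, the result is a rough estimate of about 46% per year, not a figure from the Fortune Business Insights report itself.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that turns start_value into end_value over `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the article, in USD billions; 2023 -> 2030 spans 7 years.
cagr = implied_cagr(1.5, 21.0, 2030 - 2023)
print(f"Implied CAGR: {cagr:.1%}")
```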

AlphaCode DNA in Silicon Form

MLE-STAR’s documented capabilities are not a sudden breakthrough but the culmination of specific, publicly demonstrated research lines from Google. The agent’s ability to reason through complex engineering problems extends the technology behind AlphaCode 2. In late 2023, DeepMind reported that this system, powered by Gemini Pro, performed better than an estimated 85% of human competitors in Codeforces programming contests, showcasing advanced reasoning and code generation.
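AlphaCode-style systems are publicly described as sampling many candidate programs, discarding those that fail the problem’s example tests, and grouping the survivors by how they behave on extra inputs before choosing a submission. The toy sketch below illustrates that sample-filter-cluster pattern under heavy simplifying assumptions: the "samples" are hand-written lambdas standing in for model output, and the names `select_candidate`, `passes`, and `behavior` are hypothetical. It is not AlphaCode 2’s or MLE-STAR’s code.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Sequence, Tuple

Program = Callable[[int], int]


def behavior(program: Program, inputs: Sequence[int]) -> Tuple[object, ...]:
    """Run a candidate on probe inputs; crashes become a sentinel so they still cluster."""
    outputs: List[object] = []
    for x in inputs:
        try:
            outputs.append(program(x))
        except Exception:
            outputs.append("<error>")
    return tuple(outputs)


def passes(program: Program, example_tests: Sequence[Tuple[int, int]]) -> bool:
    """True if the candidate reproduces every example output without crashing."""
    try:
        return all(program(x) == expected for x, expected in example_tests)
    except Exception:
        return False


def select_candidate(
    candidates: Sequence[Program],
    example_tests: Sequence[Tuple[int, int]],
    probe_inputs: Sequence[int],
) -> Program:
    # 1. Filter: discard samples that fail the public example tests.
    survivors = [p for p in candidates if passes(p, example_tests)]
    if not survivors:
        raise ValueError("no candidate passed the example tests")
    # 2. Cluster: programs that agree on every probe input are treated as equivalent.
    clusters: Dict[Tuple[object, ...], List[Program]] = defaultdict(list)
    for program in survivors:
        clusters[behavior(program, probe_inputs)].append(program)
    # 3. Select: return a representative of the largest behavioral cluster.
    return max(clusters.values(), key=len)[0]


# Hypothetical task: "return the square of x".
candidates = [
    lambda x: x * x,
    lambda x: x ** 2,
    lambda x: x + x,            # passes the single example (2 -> 4) by coincidence
    lambda x: abs(x) * abs(x),
    lambda x: x * 3,            # fails the example test and is filtered out
]
best = select_candidate(candidates, example_tests=[(2, 4)], probe_inputs=[-3, 0, 5])
print(best(7))
```

The `x + x` candidate slips past the single example test, which is why the clustering step matters: on the probe inputs it disagrees with the three squaring programs, so it lands in a smaller cluster and is not selected.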

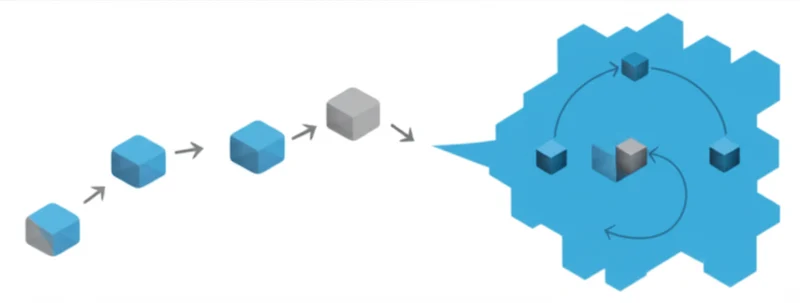

For an autonomous agent to be reliable, it must also be able to self-correct. This crucial function is based on research into self-debugging models. Frameworks like Self-RAG demonstrate how a model can retrieve information and critique its own output to improve quality. For an ML engineer agent, this translates into the ability to debug a failing data pipeline or iterate on a suboptimal model configuration without human intervention, a key requirement for autonomous operation.
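The execute, critique, and repair cycle described above can be sketched as a bounded retry loop: run the step, capture the failure, hand the error to a component that proposes a fix, and try again until the step succeeds or the attempt budget runs out. In the minimal sketch below, `run_pipeline` and `propose_fix` are hypothetical stand-ins (a real agent would run an actual job and ask a language model to read the traceback). It shows a generic self-debugging loop, not Self-RAG itself and not MLE-STAR’s implementation.

```python
from typing import Dict, List, Optional, Tuple

Config = Dict[str, float]


def run_pipeline(config: Config) -> float:
    """Hypothetical training job: raises on bad settings, otherwise returns a validation score."""
    if config["learning_rate"] <= 0:
        raise ValueError("learning_rate must be positive")
    if config["batch_size"] > 512:
        raise MemoryError("batch_size too large for device")
    return 0.9  # placeholder validation accuracy


def propose_fix(config: Config, error: Exception) -> Optional[Config]:
    """Hypothetical critic: simple rules stand in for an LLM reading the error message."""
    fixed = dict(config)
    if "learning_rate" in str(error):
        fixed["learning_rate"] = 1e-3
    elif isinstance(error, MemoryError):
        fixed["batch_size"] = config["batch_size"] // 2
    else:
        return None  # no known fix: escalate to a human
    return fixed


def self_correct(config: Config, max_attempts: int = 5) -> Tuple[float, Config, List[str]]:
    """Run, critique, and repair until the pipeline succeeds or the attempt budget is spent."""
    log: List[str] = []
    for attempt in range(1, max_attempts + 1):
        try:
            score = run_pipeline(config)
            log.append(f"attempt {attempt}: ok")
            return score, config, log
        except Exception as err:
            log.append(f"attempt {attempt}: {type(err).__name__}: {err}")
            patched = propose_fix(config, err)
            if patched is None:
                break
            config = patched
    raise RuntimeError("could not repair pipeline:\n" + "\n".join(log))


score, final_config, log = self_correct({"learning_rate": 0.0, "batch_size": 2048})
for line in log:
    print(line)
```

Starting from a zero learning rate and an oversized batch, the loop needs four attempts: one repair for the learning rate and two halvings of the batch size before the run succeeds. The explicit attempt budget and the "no known fix" escape hatch are what keep such a loop from retrying forever without human intervention.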

When Agents Challenge Platforms

The concept of an AI engineering agent was recently brought into the spotlight by Cognition AI’s Devin. The startup claimed its agent resolved 13.86% of real-world issues from the SWE-bench benchmark unassisted, providing a public, albeit debated, metric for agent performance. While Devin is a generalist, MLE-STAR is a specialist, applying similar principles specifically to the domain of ML frameworks and MLOps infrastructure.

This agent-based model represents a paradigm shift from the dominant platform-centric approach of tools like Amazon SageMaker and Databricks, which give engineers an integrated “toolbox.” An agent acts more like an “autonomous worker” within that ecosystem, raising the question of whether MLE-STAR could replace existing MLOps tools. For widespread adoption, however, such an agent must integrate with, not replace, the open-source standards engineers already use: seamless operation with tools like MLflow for experiment tracking and DVC for data versioning is a practical necessity for fitting into existing workflows.
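One way to read "integrate with, not replace" in code is to have the agent record every trial through a narrow tracking interface, so the backing tool can be swapped without changing the agent’s logic. The sketch below assumes a hypothetical `ExperimentTracker` protocol with an in-memory implementation and a toy scoring function; none of these names come from MLE-STAR, MLflow, or DVC.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


class ExperimentTracker(Protocol):
    """Hypothetical narrow interface the agent logs through; any backend can implement it."""

    def log_trial(self, name: str, params: Dict[str, float], metrics: Dict[str, float]) -> None: ...


@dataclass
class InMemoryTracker:
    """Test double that simply keeps every logged trial in a list."""

    trials: List[dict] = field(default_factory=list)

    def log_trial(self, name: str, params: Dict[str, float], metrics: Dict[str, float]) -> None:
        self.trials.append({"name": name, "params": dict(params), "metrics": dict(metrics)})


def agent_sweep(tracker: ExperimentTracker, learning_rates: List[float]) -> float:
    """Toy agent step: try each learning rate, log every trial, return the best one."""
    best_lr, best_score = learning_rates[0], float("-inf")
    for i, lr in enumerate(learning_rates):
        score = 1.0 - abs(lr - 0.01) * 10  # hypothetical score that peaks at lr = 0.01
        tracker.log_trial(f"trial-{i}", {"learning_rate": lr}, {"val_score": score})
        if score > best_score:
            best_lr, best_score = lr, score
    return best_lr


tracker = InMemoryTracker()
best = agent_sweep(tracker, [0.1, 0.01, 0.001])
print(best, len(tracker.trials))
```

Because the agent depends only on `log_trial`, an MLflow-backed adapter could implement the same method with `mlflow.start_run`, `mlflow.log_params`, and `mlflow.log_metrics`, leaving teams free to keep their existing tracking server. That adapter is not shown here and would need to be validated against the MLflow version in use.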

Machines Building Machines

The release of MLE-STAR is a tangible step toward the “Software 2.0” vision articulated by Andrej Karpathy, where humans define goals and curate data while neural networks write the software. This agent embodies that concept for the very field of AI development itself. While the technology demonstrates a clear progression, its reliability in complex, real-world production environments remains the most critical test. Experts like Chip Huyen suggest that as these agents mature, the role of human engineers will evolve from direct implementation to strategic oversight and managing fleets of AI workers.

This development moves beyond theoretical discussions and places a specialized AI worker into the hands of engineering teams. As the industry begins to integrate these systems, the central question becomes: how will the practice of machine learning engineering change when the engineer’s primary collaborator is another AI?
