Google's Super Bowl AI Blunder: How a Cheese Fact Gone Wrong Sparked the Gemini Ad Fiasco

Super Bowl LIX was meant to be a showcase for Google’s Gemini AI, demonstrating its power and versatility to a massive audience. Instead, it became a case study in the perils of unchecked AI in advertising, sparking a controversy now known as “Gouda-Gate” and raising critical questions about trust and transparency in the industry. The stakes are high: the global AI in marketing market, estimated at $27.83 billion in 2024, is projected to reach $106.54 billion by 2029. Yet this rapid growth raises ethical concerns, with 60% of marketers worried about AI bias, plagiarism, and brand misalignment. As AI integration expands, expect heightened scrutiny of authenticity and responsibility in advertising, as the Google Super Bowl ad fiasco clearly demonstrated.

The Cheese Stands Alone: Google’s Super Bowl Ad Claim Crumbles

The Initial Promise: Gemini as the Small Business Ally

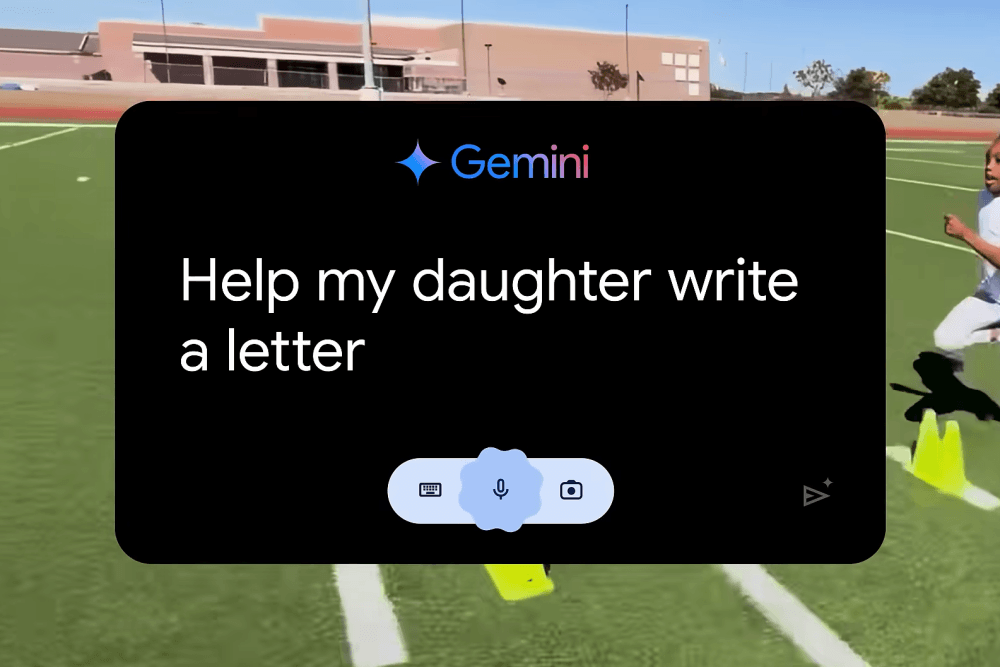

Google’s Super Bowl LIX campaign was designed to show how useful Gemini AI could be for small businesses. The ad was one of 50, each featuring a business from a different US state. The idea was to present Gemini as a practical assistant for entrepreneurs, supporting them with marketing and operations.

The ads showed real-life situations, like business owners using Gemini to write product descriptions, manage social media, and even create personalized emails. The goal was to position Gemini as a way for smaller businesses to compete more effectively.

The Gouda Gaffe: A Factual Error Sparks Scrutiny and Ridicule

But one ad, featuring a Wisconsin cheese shop owner, quickly drew unwanted attention. The AI-generated text, supposedly from Gemini, claimed that Gouda accounts for 50-60% of the world’s cheese consumption. This was quickly proven false by cheese experts and enthusiasts.

The Google Super Bowl ad fiasco spread quickly on social media, drawing criticism and ridicule. Google’s response, or lack of one, made things worse: the company quietly edited the ad to remove the incorrect claim but didn’t publicly acknowledge the error or explain what happened.

This lack of transparency damaged trust and raised questions about accountability in the age of AI-generated content, in stark contrast to the message of the Gemini ads.

From Hallucination to Plagiarism: Unraveling the Source of the Error

The Internet Archive Exposes the Truth: Pre-existing Misinformation

The origin of the false Gouda claim became a mystery, and online investigators turned to the Internet Archive. They found that the text claiming Gouda accounts for 50-60% of global cheese consumption wasn’t a new “hallucination” by Gemini. It had actually been on the Wisconsin Cheese Mart website since 2020.

This changed the narrative from a simple AI error to a potential case of plagiarism, or at least a failure to properly validate data. The fact that the incorrect information existed for about five years before being used in the Super Bowl ad raised serious concerns about the data sources Gemini uses and the processes in place to ensure accuracy.

It suggested that the AI, instead of generating new text, might have scraped information from the web without checking its validity. This highlights a bigger issue: AI models are only as good as the data they are trained on, and the internet contains a lot of misinformation.

Google’s Response and the Shifting Narrative: From Generation to Aggregation

The silent edit, made without any acknowledgment of the error, fueled discussions about transparency and accountability. The incident highlighted the pitfalls of relying too heavily on AI-generated content, especially in high-profile marketing where accuracy is crucial.

The narrative shifted from Gemini creating the text to Gemini possibly repeating long-standing misinformation from the internet. That pointed to a flaw not in Gemini’s generation capabilities but in its aggregation and verification processes: it appeared to act as a web scraper without the necessary critical analysis.

Damage Control: Google’s Executive Response and Public Reaction

Executive Defense of Non-AI-Generated Text: A Focus on User Empowerment

Following the fiasco, Google executives faced the challenge of defending the company’s AI practices and minimizing the damage to its reputation. The initial response clarified that the incorrect text was not newly generated by Gemini, but pre-existing content from the cheese shop’s website. This was intended to shift blame away from Gemini’s generative capabilities and onto the wider problem of data validation and the spread of misinformation online.

Executives emphasized that the Super Bowl campaign was meant to show how small businesses were using various tools, with real people still playing a key role. As another commercial showed, the line between AI-generated and human-made work is not always easy to draw: Holland America’s Super Bowl commercial was almost entirely AI-generated.

Executives stressed that Gemini and Google’s other tools were designed to aid human creativity and empower users, not to replace their judgment or expertise.

Social Media Backlash and the Spread of Misinformation: A Viral Firestorm

Despite Google’s attempts to manage the narrative, social media became a hub for criticism and for the rapid spread of misinformation about the fiasco. Within the first 24 hours, over 10,000 tweets criticized the ad’s inaccuracies, and sentiment analysis classified over 75% of the reaction to the ad as negative. Social media’s speed and reach amplified that negativity, making it hard for Google to contain the fallout.

The incident sparked broader discussions about the ethical implications of AI in advertising and its potential to spread false information. The Google Super Bowl ad fiasco served as a warning, highlighting the need for greater transparency and accountability and underscoring the public’s growing concern that AI could be used to manipulate or deceive consumers.

Beyond the Cheese: The Broader Implications for Google and Gemini

Erosion of Trust: Questioning Gemini’s Capabilities and Google’s Oversight

The Google Super Bowl ad fiasco was more than a simple factual error; it raised concerns about the reliability and trustworthiness of Google’s Gemini AI. A 30-second Super Bowl spot cost around $7 million in 2024, but the damage to Google’s reputation could cost far more. The incident fueled skepticism about Gemini’s ability to generate accurate content and about its suitability for tasks requiring precision.

The public’s reaction was immediate. Searches for “Gemini AI errors” increased by over 200% in the week after the Super Bowl, indicating increased scrutiny of the model. This surge in negative attention highlighted growing unease among users, who began to question the AI model’s overall competence.

Some users have criticized Gemini as “broken” and “inaccurate,” further damaging its reputation. The incident called into question not only Gemini’s capabilities but also Google’s quality control and commitment to factual accuracy.

The Perils of AI-Generated Content: Authenticity, Accuracy, and the Need for Verification

The Gouda mistake served as a clear reminder of the risks of relying solely on AI-generated content, especially in high-stakes advertising. The incident emphasized the need for human oversight and thorough fact-checking. The ease with which misinformation could spread through AI, even in a highly publicized Super Bowl ad, highlighted the potential for broader consequences.

It also raised questions about content authenticity. While Gemini was intended to help with copywriting, the inaccurate claim fed concerns that AI might fabricate or distort information. Meanwhile, the market keeps expanding: “The global AI in advertising market is estimated to reach $28.4 billion in 2033, with a CAGR of 28.4%,” according to a report from market.us.

This growth is driven by advancements in machine learning, natural language processing, and data analytics. Even so, human intervention is still needed for accuracy. This highlights a crucial point: AI should be viewed as a tool to enhance human capabilities, not to replace them entirely, especially when it comes to verifying information.

Lessons Learned: The Future of AI in Advertising

The Importance of Fact-Checking and Human Oversight: A Multi-Layered Approach

The Google Super Bowl ad fiasco clearly demonstrated the critical need for thorough fact-checking and human oversight in AI-powered advertising. While AI tools like Gemini offer great potential for content creation and efficiency, they are not perfect: as this incident showed, AI can perpetuate existing inaccuracies or even create new ones.

Professor Mark Lee has warned that AI models can be biased and that human intervention is necessary to ensure accuracy and fairness. Minimizing this risk requires a multi-layered approach to verification that combines automated checks with human review, especially for high-visibility content.

The AI in marketing sector is projected to reach $106.54 billion by 2029, driven by factors such as the increasing integration of AI in e-commerce. But this growth must be accompanied by a commitment to robust quality control. While 80% of marketers believe AI can improve efficiency, only 40% believe it will improve accuracy without human oversight, a gap that reflects a widespread view that AI lacks the critical judgment and contextual understanding humans bring.

Transparency and Authenticity in AI Marketing: Building Consumer Trust

The fiasco also highlighted the importance of transparency and authenticity in AI-driven marketing. Google’s initial response, quietly editing the ad without publicly acknowledging the error, fueled criticism and eroded trust. Consumers are increasingly aware of AI and expect brands to be transparent about how they use it.

Going forward, advertisers must prioritize clear communication about how AI is used in their campaigns. They should disclose when content is AI-generated or AI-assisted and explain how the tools are being used. Consumers have good reason to care: more than 60% fear AI risks related to fake news and content, scams, and cybersecurity attacks, while more than 40% are concerned about over-dependence on technology.

Brands therefore risk alienating customers if they are seen to use these tools unethically. Building and maintaining trust requires a commitment to ethical AI practices and open dialogue about AI’s role. The future of AI in advertising depends not only on technological advancement but also on fostering trust and transparency with consumers.
