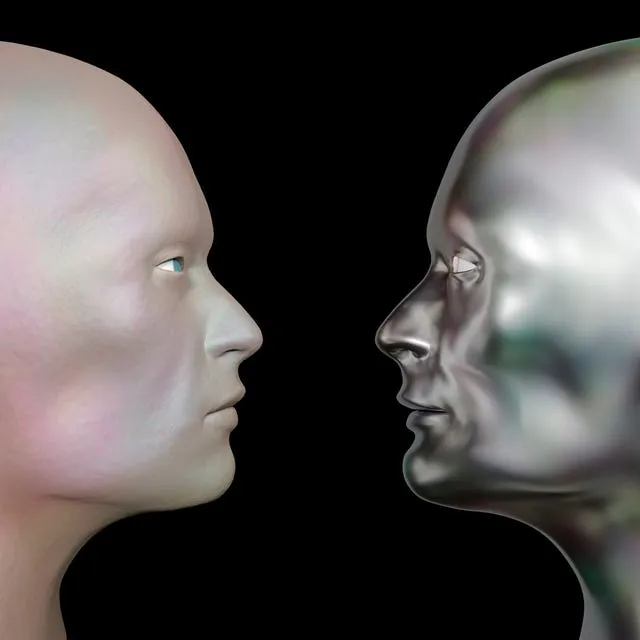

GPT-4.5 Passes Turing Test in UCSD Study, Achieves 73% Win Rate

A new study from the University of California, San Diego (UCSD) claims to provide the first empirical evidence of an AI system passing a standard three-party Turing test. As detailed in a report by AIBase, the research has reignited debate about artificial intelligence, its ability to mimic human intelligence, and its potential impact on our future. The news arrives as discussion of AI systems imitating humans gains traction, particularly in light of Alan Turing’s original vision for the imitation game, described in his 1950 paper.

The Turing Test: A Milestone Reached, or a Line Blurred?

As detailed in their research study released on Tuesday, UCSD researchers claim to provide the “first empirical evidence that any artificial system can pass a standard three-party Turing test.” This announcement has sent ripples through the tech world, reigniting discussions on AI’s potential, especially considering the history and evolution of the Turing Test.

GPT-4.5’s Performance: A Triumph of Mimicry or True Intelligence?

Alan Turing, the brilliant British mathematician and computer scientist, introduced the ‘imitation game’ in 1950. His concept, as explained by Investopedia, was elegantly simple: if an interrogator cannot distinguish between a machine and a human in text-based conversation, the machine might possess human-like intelligence.

The UCSD study, as reported by Analytics India Magazine, employed a three-party version of this test. Human participants engaged in five-minute conversations with both a human and an AI system simultaneously. The AIs tested included OpenAI’s GPT-4.5, Meta’s Llama 3.1 405B, and OpenAI’s GPT-4o. A split-screen interface allowed interrogators to identify which participant they believed was human after each round. The AIBase report clarifies that the study compared four AI systems in total, including the classic chatbot ELIZA.

GPT-4.5’s results were remarkable. According to the UCSD study, GPT-4.5 achieved a 73% win rate when prompted with “PERSONA” instructions, which told it to adopt a young, introverted personality interested in internet culture. This means it was mistaken for human nearly three-quarters of the time, exceeding the typical human success rate of 60-70%.

Meta’s Llama 3.1 405B, also using the PERSONA prompt, achieved a 56% win rate, a result the UCSD research treats as significant. By contrast, GPT-4o, under NO-PERSONA conditions, reached only 21%. This stark difference highlights the impact of persona prompting on an AI’s ability to mimic human interaction.
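As an illustration of how such win rates are tallied, the sketch below counts, for each AI system, the share of rounds in which the interrogator picked the AI as the human. The `Round` record, the system names, and the six toy rounds are assumptions made for this example; they are not the study's data or code.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Round:
    """One three-party round (hypothetical record layout)."""
    ai_system: str      # which AI witness took part in the round
    judged_human: str   # "ai" or "human": the witness the interrogator picked as human


def win_rates(rounds):
    """Return, per AI system, the fraction of rounds where the AI was judged human."""
    wins = defaultdict(int)
    totals = defaultdict(int)
    for r in rounds:
        totals[r.ai_system] += 1
        if r.judged_human == "ai":
            wins[r.ai_system] += 1
    return {name: wins[name] / totals[name] for name in totals}


# Toy data, invented for illustration only.
rounds = [
    Round("model-a", "ai"),
    Round("model-a", "ai"),
    Round("model-a", "human"),
    Round("model-a", "ai"),
    Round("model-b", "human"),
    Round("model-b", "ai"),
]

rates = win_rates(rounds)
for name, rate in rates.items():
    print(f"{name}: {rate:.0%} win rate")
```

A win rate near 50% means interrogators were effectively guessing; a rate well below 50% means they usually identified the real human.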

As reported, conversations primarily focused on small talk (61% on daily activities and personal details) and social/emotional topics (50% on opinions, emotions, humor, and experiences). This raises a fundamental question: does GPT-4.5 truly understand these concepts, or is it merely mimicking human conversation more convincingly than any system before it?

The study states, “If interrogators cannot reliably distinguish between human and machine, the machine passes the Turing test. By this logic, GPT-4.5 and Llama-3.1-405B pass when given prompts to adopt a human-like persona.”
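One way to make “cannot reliably distinguish” concrete is to ask whether a win rate is significantly below the 50% expected from guessing. The sketch below runs a one-sided exact binomial test on the three reported win rates. The sample size of 100 rounds per system and the 0.05 threshold are assumptions chosen for illustration; the article does not give the study's actual sample sizes or its statistical method, which may differ.

```python
from math import comb


def p_at_most(wins: int, rounds: int) -> float:
    """Exact P(X <= wins) for X ~ Binomial(rounds, 0.5), i.e. pure guessing."""
    favourable = sum(comb(rounds, k) for k in range(wins + 1))
    return favourable / 2 ** rounds


ROUNDS = 100   # hypothetical number of rounds per system, not from the study
ALPHA = 0.05   # conventional significance threshold, also an assumption

for label, wins in [("73% win rate", 73), ("56% win rate", 56), ("21% win rate", 21)]:
    p = p_at_most(wins, ROUNDS)
    verdict = "reliably below chance" if p < ALPHA else "not reliably below chance"
    print(f"{label}: p = {p:.3g} -> {verdict}")
```

Under these assumed numbers, only the 21% result would count as interrogators reliably spotting the machine. Failing to reject chance is weaker than proving indistinguishability, so a real analysis would also need adequate sample sizes.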

The Turing Test’s Legacy and Evolution: From Imitation Game to Modern Benchmarks

The Turing Test, conceived in 1950, has long served as a benchmark for artificial intelligence. Early chatbots like ELIZA and PARRY demonstrated how easily humans could be fooled by relatively simple conversational tricks.

The controversial “success” of Eugene Goostman in 2014, a chatbot posing as a non-native English-speaking teenager, highlighted the test’s inherent subjectivity, as noted by Built In. This has led to the development of variations like the Reverse Turing Test (CAPTCHA), the Total Turing Test, the Marcus Test, and the Lovelace Test 2.0, each assessing different facets of intelligence. These variations reflect the ongoing effort to refine how we evaluate artificial intelligence.

Beyond Mimicry: Understanding the True Capabilities and Limitations of LLMs

Large Language Models (LLMs) like GPT-4.5 have revolutionized AI with their ability to generate fluent, contextually relevant text. They can adapt their tone and show some degree of emotional responsiveness. However, as some sources caution, this fluency should not be mistaken for true understanding.

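LLMs fundamentally lack real-world grounding and can produce “hallucinations,” fabricated information presented as fact. Critics describe such models as “stochastic parrots” that recombine patterns from their training data without grasping meaning. OpenAI has acknowledged these hallucinations in its models.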

OpenAI released GPT-4.5 in February, and it quickly gained attention for its thoughtful and emotionally nuanced responses. Ethan Mollick, a professor at the Wharton School, commented on the model’s capabilities and quirks on X (formerly Twitter), highlighting its writing prowess, creativity, and occasional “laziness” when tackling complex projects:

“Been using GPT-4.5 for a few days and it is a very odd and interesting model. It can write beautifully, is very creative, and is occasionally oddly lazy on complex projects.

Feels like Claude 3.7 while Claude 3.7 feels like GPT-4.5.” (Ethan Mollick, @emollick, August 18, 2024)

The Future of Work and Social Interaction: Navigating the Age of AI

Advanced AI systems like GPT-4.5 present both opportunities and challenges for society. According to Harvard research, while some fear job displacement, new roles will inevitably emerge in fields like data science, machine learning engineering, and AI ethics. Considering the implications of the Turing Test for future AI jobs is crucial for workforce planning.

The UCSD study authors suggest these systems could supplement or even replace human labor in roles involving brief conversations. They also note broader societal implications: “More broadly, these systems could become indiscriminable substitutes for other social interactions, from conversations with strangers online to those with friends, colleagues, and even romantic companions.” This aligns with predictions about AI’s impact on social interactions.

Reskilling and upskilling will be crucial to prepare the workforce for this changing landscape. On the social front, the blurring lines between human and machine communication raise profound questions about authenticity, connection, and the potential for both enhanced companionship and increased isolation. Some users already report feeling a stronger connection with AI than with humans.

The ethical implications of human-like AI are paramount. Addressing potential biases, as discussed in recent articles, protecting data privacy, as highlighted by research on data privacy concerns, and preventing misuse require careful consideration. Robust ethical guidelines and regulations will be crucial for responsible innovation in this rapidly evolving field.

Beyond the Turing Test: Charting the Future of AI

GPT-4.5’s performance on the Turing Test represents a significant milestone, but it’s not the end goal of AI development. Researchers are already exploring new benchmarks, such as Humanity’s Last Exam, to better assess AI’s capabilities. The future of AI lies in moving beyond mere mimicry to genuine comprehension, reasoning, and problem-solving.

We need more sophisticated evaluation methods that capture the true complexity of intelligence. Ethical considerations must remain central to AI development, ensuring these powerful technologies are used responsibly for humanity’s benefit. This requires ongoing collaboration between researchers, industry leaders, policymakers, and the public, along with a steadfast commitment to transparency, fairness, and accountability. The ongoing discussion surrounding AI ethics emphasizes this importance as we navigate this new frontier.
