Huawei SuperPoD AI System Targets Post-Ban China Market

In a direct response to escalating tech restrictions, Chinese technology giant Huawei unveiled a new AI infrastructure strategy at its Connect 2025 conference. The announcement details a multi-year roadmap for its Ascend AI chips and introduces the “SuperPoD” architecture, a system-level approach designed for massive computational scale. The timing is strategic: it follows reports that China has banned domestic tech companies from purchasing Nvidia hardware, creating a significant market vacuum. The announcement signals a fundamental shift in the company’s approach, pivoting from direct chip-to-chip competition to building a domestic alternative to Nvidia by innovating at the cluster level.

Key Points

- Huawei announced a multi-year roadmap for its Ascend AI chips and a new SuperPoD cluster architecture.

- The strategy focuses on system-level scale to compensate for documented limitations in leading-edge semiconductor manufacturing.

- This development directly addresses the market gap created by China’s reported ban on Nvidia hardware purchases.

- Huawei plans to open-source its CANN software platform by late 2025 to challenge Nvidia’s dominant CUDA ecosystem.

Scaling Mountains Without Climbing Nodes

Huawei’s strategy is not to outperform Nvidia on a per-chip basis but to achieve competitive performance through massive scale. The company’s plan is built on an iterative chip roadmap, a scalable interconnect fabric, and a software ecosystem designed to foster adoption.

The Ascend chip roadmap provides a predictable upgrade path, with reports suggesting a promise to double compute power with each generation. The Ascend 950 series, scheduled for 2026, will deliver up to 2 PFLOPs of performance and 2 TB/s of interconnect bandwidth, a 2.5x improvement over current models. It will also feature Huawei’s proprietary “HiBL 1.0” memory. The roadmap extends to the Ascend 970 in 2028, which targets an interconnect bandwidth of 4 TB/s and 8 PFLOPs of FP4 performance.

The core of the announcement is the Huawei SuperPoD AI system, a large-scale cluster architecture. The Atlas 950 SuperPoD, set for a Q4 2026 release, will integrate 8,192 Ascend 950 NPUs. Huawei also detailed plans for “SuperClusters” that connect multiple SuperPoD units, with the Atlas 960 SuperCluster projected to exceed 1 million NPUs. These clusters are enabled by a proprietary high-bandwidth network called UnifiedBus, Huawei’s answer to Nvidia’s NVLink.
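To put the cluster figures in perspective, the per-chip and per-cluster numbers above can be multiplied into a rough theoretical peak. The sketch below is only back-of-the-envelope arithmetic, not a Huawei benchmark: it assumes every NPU hits the quoted "up to 2 PFLOPs" simultaneously, and the `utilization` parameter is a hypothetical knob for illustrating how far real workloads can fall below that ceiling.

```python
# Back-of-the-envelope peak compute for one Atlas 950 SuperPoD.
# Inputs come from the figures quoted in the article; the utilization
# value is a hypothetical illustration, not a measured number.

NPUS_PER_SUPERPOD = 8_192   # Ascend 950 NPUs per Atlas 950 SuperPoD (article)
PFLOPS_PER_NPU = 2.0        # "up to 2 PFLOPs" per Ascend 950 (article)


def peak_pflops(npus: int, pflops_per_npu: float) -> float:
    """Theoretical aggregate peak, ignoring interconnect and software overhead."""
    return npus * pflops_per_npu


def effective_pflops(npus: int, pflops_per_npu: float, utilization: float) -> float:
    """Scale the theoretical peak by an assumed utilization between 0 and 1."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return peak_pflops(npus, pflops_per_npu) * utilization


if __name__ == "__main__":
    peak = peak_pflops(NPUS_PER_SUPERPOD, PFLOPS_PER_NPU)
    print(f"Theoretical peak: {peak:,.0f} PFLOPs ({peak / 1000:.1f} EFLOPs)")
    # 0.4 is an arbitrary placeholder to show the effect of partial utilization.
    print(f"At 40% utilization: {effective_pflops(NPUS_PER_SUPERPOD, PFLOPS_PER_NPU, 0.4):,.0f} PFLOPs")
```

The gap between the two printed numbers is the point: at this scale, how well UnifiedBus and the software stack keep thousands of NPUs busy matters as much as the headline per-chip figure.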

To build a developer community, Huawei will open-source its CANN software toolkit by the end of 2025, a move reported by Caixin Global.

When Politics Reshapes Silicon

As The Tech Portal observes, the timing of this announcement is “clearly not accidental.” Huawei’s strategy after the Nvidia ban is a direct result of a market forged by geopolitics. With Chinese tech firms cut off from their primary supplier of high-end AI accelerators, they “have little choice but to turn to domestic alternatives.”

According to reports, Huawei’s leadership has acknowledged that China will likely lag in leading-edge semiconductor manufacturing “for a relatively long time.” This admission is the foundation of its strategy. Unable to win on the performance of a single chip, Huawei is shifting the battleground to system-level architecture. The company is betting that “scale and clustering can compensate” for the lower power of individual chips. This approach aims to deliver competitive aggregate performance for the massive AI training workloads that define the modern era.

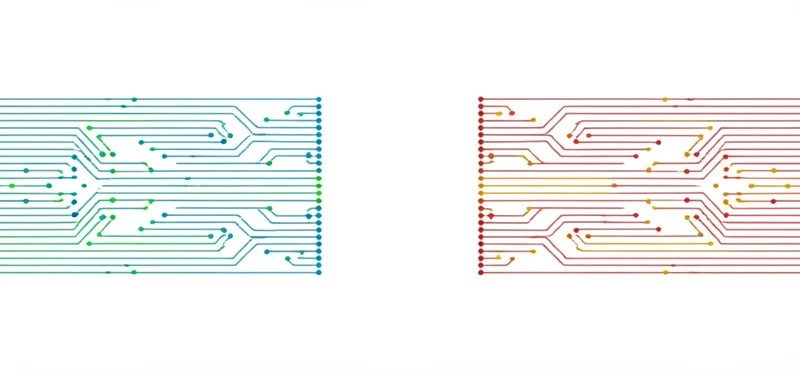

This development points toward a potential fracturing of the global AI hardware landscape. The world may be heading toward a bifurcated ecosystem: one built on Nvidia and Western technology, and a parallel Chinese domestic alternative built around Huawei and other homegrown solutions, a possibility raised by Digitrendz.

Breaking CUDA’s Gravity Well

Huawei is making aggressive performance claims, projecting that its upcoming Atlas 950 cluster will outperform Nvidia’s comparable NVL144 system. Indeed, one report cites a claim that its first SuperPoD will have 56.8 times as many NPUs as the NVL144 and deliver nearly seven times its processing power. However, these ambitions face substantial, real-world obstacles.
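The two ratios in that claim can be combined to show what they imply about per-unit performance. The following is a rough sketch under stated assumptions: the NVL144 is taken to contain 144 accelerator units (inferred from its name and from the 56.8x figure), and "nearly seven times" is rounded up to 7.0, so the result is an upper bound on the implied ratio rather than a measured value.

```python
# What the reported ratios imply about per-unit performance.
# Assumptions: 144 units in the NVL144 (inferred, not stated in the article),
# and a compute ratio of 7.0 as an upper bound for "nearly seven times".

SUPERPOD_NPUS = 8_192          # Atlas 950 SuperPoD NPU count (article)
NVL144_UNITS = 144             # assumed unit count for the NVL144
CLAIMED_COMPUTE_RATIO = 7.0    # upper bound for "nearly seven times"


def unit_count_ratio(cluster_units: int, baseline_units: int) -> float:
    """How many times more accelerator units the cluster has than the baseline."""
    return cluster_units / baseline_units


def implied_per_unit_ratio(compute_ratio: float, count_ratio: float) -> float:
    """Per-unit performance relative to the baseline implied by the two ratios."""
    return compute_ratio / count_ratio


if __name__ == "__main__":
    count_ratio = unit_count_ratio(SUPERPOD_NPUS, NVL144_UNITS)
    per_unit = implied_per_unit_ratio(CLAIMED_COMPUTE_RATIO, count_ratio)
    print(f"Unit count ratio: {count_ratio:.2f}x")
    print(f"Implied per-unit performance: at most {per_unit:.1%} of the baseline")
```

Under these assumptions each NPU contributes only a fraction of what a single Nvidia unit does, which is consistent with the article's framing that the advantage comes from scale rather than per-chip performance.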

The primary challenge remains the semiconductor manufacturing gap. Being behind on process nodes will likely result in lower power efficiency and performance-per-watt compared to Nvidia’s hardware, a critical factor in data center operating costs. Beyond hardware, Nvidia’s greatest advantage is its CUDA software ecosystem, the industry standard for over a decade. Migrating complex AI workloads, developer talent, and established software libraries from CUDA to Huawei’s CANN platform is a monumental task that open-sourcing alone will not solve.

While this strategy is well-suited for a protected domestic market, its global competitiveness is limited. International customers without political restrictions are unlikely to abandon the mature and high-performing Nvidia ecosystem without a compelling technical and financial incentive.

Quantity as Quality: The New AI Math

Huawei’s announcement marks a significant moment in the global AI industry. By focusing on system-level scale rather than chip-level performance, the company demonstrates a pragmatic approach to overcoming manufacturing limitations. This strategy resembles the distributed computing model that powers cloud services – where the aggregate capability of many nodes compensates for individual limitations.

The technical specifications of Huawei’s SuperPoD architecture reveal a commitment to massive parallelism. With plans to integrate thousands of NPUs into unified clusters, Huawei is effectively building computational superstructures that prioritize total throughput over per-chip efficiency. This approach acknowledges the reality that modern AI training workloads are highly parallelizable and can benefit from sheer computational volume.

For China’s domestic AI industry, Huawei’s infrastructure represents more than just hardware – it establishes a foundation for technological self-sufficiency. By creating both the hardware architecture and software ecosystem, Huawei is building a complete alternative to the Western AI stack. The decision to open-source CANN by late 2025 further demonstrates a strategic understanding that developer adoption is crucial for long-term success.

Parallel Paths in AI’s Evolution

The bifurcation of global AI infrastructure into Western and Chinese ecosystems introduces new dynamics into the industry. While Nvidia continues to push the boundaries of single-chip performance with advanced manufacturing nodes, Huawei’s approach focuses on scaling existing technology to unprecedented levels. These divergent strategies may lead to different optimization priorities in AI model development.

For AI researchers and enterprises, this divergence presents both challenges and opportunities. Models developed in one ecosystem may require significant adaptation to run efficiently in the other. However, this separation may also drive innovation as each ecosystem evolves to address its unique constraints and advantages. The technical differences between these approaches will likely influence everything from model architecture to training methodologies.

The effectiveness of Huawei’s strategy will ultimately be measured by the performance of real-world AI workloads on its infrastructure. While theoretical specifications provide a baseline for comparison, the true test will be how well complex models train and run on these massive clusters. The coming years will reveal whether Huawei’s bet on scale can indeed overcome the advantages of more advanced semiconductor manufacturing.

As these parallel AI ecosystems develop, the technical community faces an intriguing question: Will different hardware architectures lead to fundamentally different approaches to AI, or will the underlying mathematics of machine learning ensure convergent evolution despite divergent infrastructure? The answer may reshape our understanding of the relationship between hardware and AI capabilities in ways we’re only beginning to comprehend.
