Hugging Face's Thomas Wolf Challenges AI Hype: "It's Not Creating New Knowledge – Yet"

While tech leaders make grand claims about AI revolutionizing scientific discovery, Thomas Wolf, co-founder and chief science officer of Hugging Face, offers a sobering perspective. Wolf contends that despite impressive capabilities in processing existing information, today’s AI fundamentally lacks the ability to generate truly novel knowledge—the kind essential for groundbreaking scientific breakthroughs.

The “Yes-Men on Servers” Problem

In a thought-provoking post on X, Wolf warned that without significant research breakthroughs in creative problem-solving, AI risks becoming merely “yes-men on servers”—systems that excel at processing information but remain incapable of independent, creative thought.

“The main mistake people usually make is thinking [people like] Newton or Einstein were just scaled-up good students, that a genius comes to life when you linearly extrapolate a top-10% student,” Wolf explained. The quote points to a crucial limitation: current AI systems can identify patterns within existing human knowledge, but they cannot question that knowledge or challenge its core assumptions in the ways that lead to paradigm shifts.

Wolf argues that even with access to most of the internet, today’s AI primarily fills gaps between established knowledge points rather than connecting previously unrelated facts to generate genuinely new insights.

Silicon Valley Optimism vs. Technical Reality

Wolf’s cautious assessment stands in stark contrast to the bold predictions from other industry leaders. OpenAI CEO Sam Altman suggested in an essay earlier this year that “superintelligent” AI could “massively accelerate scientific discovery.” Similarly, Anthropic CEO Dario Amodei has claimed AI could help formulate cures for most types of cancer.

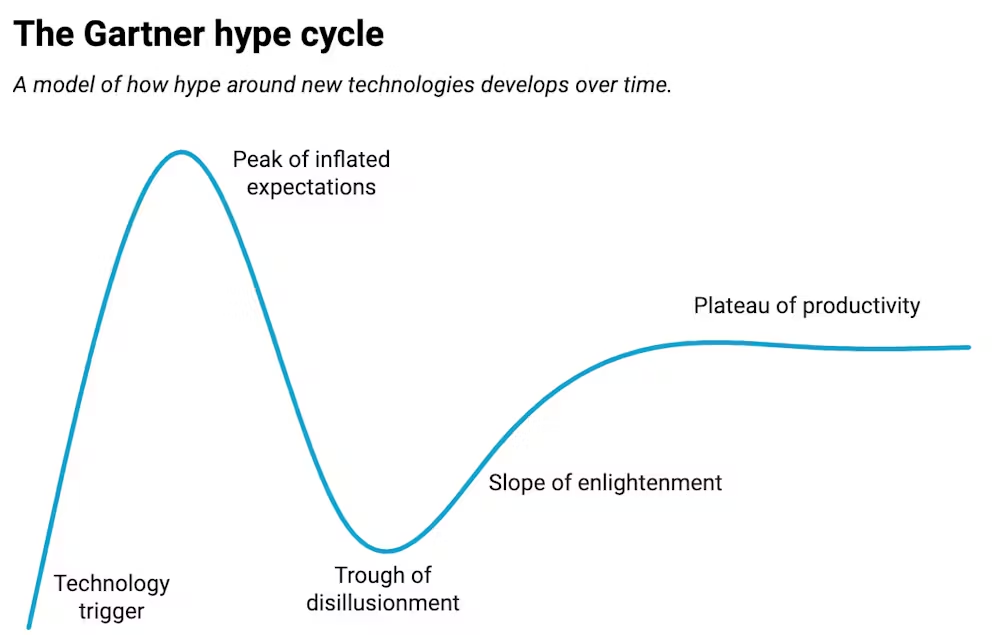

These contrasting viewpoints highlight a fundamental tension in the AI landscape between aspirational visions and current technical capabilities, with Altman and Amodei on one side and Wolf on the other.

The “Evaluation Crisis”: Why Current Benchmarks Fall Short

A significant part of the problem, according to Wolf, lies in how we measure AI progress. He points to what is increasingly described as an “evaluation crisis” in AI research. Most benchmarks consist of closed-ended questions with clear, definitive answers, so they test an AI’s ability to recall and apply existing knowledge rather than its ability to generate new insights.

This critique finds support from other prominent voices in the field. Ex-Google engineer François Chollet has expressed similar concerns, suggesting that while AI can memorize reasoning patterns, it likely cannot generate “new reasoning” when confronted with novel situations.

Reimagining AI Evaluation

Wolf proposes a fundamental shift in how we evaluate AI systems. Rather than focusing solely on accuracy in answering known questions, he advocates for measures that assess whether AI can take “bold counterfactual approaches,” make general proposals based on “tiny hints,” and ask “non-obvious questions” that open entirely new research paths.

This emphasis on counterfactual thinking—the ability to consider alternative possibilities and reason about hypothetical scenarios—speaks to a crucial aspect of scientific discovery that current AI systems largely lack. While developing such evaluation methods presents significant challenges, Wolf believes the effort could yield transformative results.

Beyond “Obedient Students”: The Quest for AI That Challenges Assumptions

“To create an Einstein in a data center, we don’t just need a system that knows all the answers, but rather one that can ask questions nobody else has thought of or dared to ask,” Wolf explained. “One that writes ‘What if everyone is wrong about this?’ when all textbooks, experts, and common knowledge suggest otherwise.”

Current AI training methodologies produce what Wolf describes as “very obedient students”—systems that excel at absorbing and applying existing knowledge but lack the incentive to question established paradigms or propose ideas that contradict their training data.

Rethinking AI Training Incentives

The solution may lie in fundamentally reimagining how we train AI systems. Instead of exclusively rewarding accuracy, Wolf suggests creating incentives for questioning, challenging, and proposing ideas that deviate from conventional wisdom.

“[T]he most crucial aspect of science [is] the skill to ask the right questions and to challenge even what one has learned,” Wolf emphasized. “We don’t need an A+ [AI] student who can answer every question with general knowledge. We need a B student who sees and questions what everyone else missed.”

The Path Forward: A Balanced Approach to AI in Scientific Discovery

Wolf’s critique doesn’t dismiss AI’s potential in science but calls for a more nuanced approach to development and evaluation. He acknowledges that AI has made remarkable progress while arguing that it still faces fundamental limitations in replicating the creative, independent thinking that drives scientific breakthroughs.

Moving forward requires a multi-faceted strategy:

- Reimagining Evaluation: Developing metrics that assess AI’s ability to reason, question, and generate novel hypotheses beyond closed-ended benchmarks.

- Transforming Training Paradigms: Creating systems that reward challenging existing knowledge rather than merely reproducing it.

- Adopting Realistic Expectations: Balancing enthusiasm with a clear-eyed assessment of AI’s current capabilities and limitations.

By embracing this more critical perspective, we can potentially harness AI not just as a tool for accelerating existing research approaches, but as a genuine partner in the quest for transformative scientific breakthroughs and new knowledge.
