Kioxia vs. CXL: A New Direct-Attached Flash for AI Bottlenecks

Kioxia has unveiled a 5TB high-bandwidth flash module, a novel class of device aimed at AI and high-performance computing (HPC) workloads, where moving data fast enough to keep processors busy is a critical bottleneck. Unlike traditional SSDs, this prototype connects flash memory directly to the CPU over a full PCIe 5.0 x16 interface, the same interface used by high-end GPUs, and is rated at 64 GB/s. The core innovation is its ability to bypass the conventional NVMe storage protocol, creating a new ‘memory-semantic’ storage tier. This approach offers DRAM-like bandwidth with the persistence and capacity of flash storage, a significant development in the ongoing convergence of memory and storage. It also challenges the emerging Compute Express Link (CXL) standard by offering a powerful, direct-attached alternative for accelerating the most demanding data-intensive workloads.

Key Points

• Kioxia’s prototype delivers 64 GB/s sequential read speeds and 11 million random read IOPS by utilizing a full PCIe 5.0 x16 interface.

• The design bypasses the NVMe protocol, enabling direct, memory-mapped access that reduces latency and software overhead for AI and HPC applications.

• This memory-semantic flash module creates a new storage tier between DRAM and traditional SSDs, competing with and coexisting alongside the CXL standard for memory expansion.

• Adoption of this technology depends on the development of a supporting software ecosystem and its total cost of ownership compared to CXL alternatives.

Breaking the NVMe Bottleneck

A Direct Path to the CPU

Kioxia’s flash module represents a fundamental architectural shift from standard solid-state drives. It occupies a full PCIe 5.0 x16 slot, providing four times the lanes of a typical M.2 NVMe SSD (x4). This wide link is what enables its specified sequential read speed of 64 GB/s and write speed of 18 GB/s.
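
The 64 GB/s figure sits right at the ceiling of the link. A back-of-the-envelope check, assuming the standard PCIe 5.0 signaling rate of 32 GT/s per lane and 128b/130b line encoding, and ignoring packet and protocol overhead (so delivered throughput will be somewhat lower), might look like this. The helper name `pcie_bandwidth_gbs` is illustrative only:

```python
# Theoretical one-direction PCIe bandwidth before packet/protocol overhead.
# Assumes PCIe 5.0 signaling (32 GT/s per lane) with 128b/130b encoding.

def pcie_bandwidth_gbs(gt_per_s: float, lanes: int,
                       payload_bits: int = 128, total_bits: int = 130) -> float:
    """Return theoretical bandwidth in GB/s (10**9 bytes per second)."""
    bits_per_s = gt_per_s * 1e9 * lanes * payload_bits / total_bits
    return bits_per_s / 8 / 1e9  # bits -> bytes -> GB


if __name__ == "__main__":
    x16 = pcie_bandwidth_gbs(32, 16)  # full x16 slot, as used by the Kioxia module
    x4 = pcie_bandwidth_gbs(32, 4)    # typical M.2 NVMe SSD link
    print(f"PCIe 5.0 x16: {x16:.1f} GB/s")  # 63.0 GB/s
    print(f"PCIe 5.0 x4:  {x4:.1f} GB/s")   # 15.8 GB/s
```

The x16 result of roughly 63 GB/s per direction is consistent with the quoted 64 GB/s read figure, and the 4:1 lane ratio carries straight through to the bandwidth ceiling.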

This performance is built on Kioxia’s BiCS FLASH™ 3D NAND technology, which stacks memory cells vertically to achieve high density. The underlying media is Kioxia’s 8th-generation 3D NAND, with 218 active layers, which supplies the raw throughput such a device requires. As noted in AnandTech’s analysis, connecting this media directly to the processor maximizes the physical bandwidth between the two.

Protocol Bypass: The Latency Leapfrog

The key innovation is that the module bypasses NVMe entirely, removing its protocol stack from the data path. While NVMe is efficient for block storage, it still adds software overhead to every I/O. Kioxia’s memory-semantic approach instead allows applications to perform load/store operations directly on the flash device, much as they do with system RAM. Bypassing the kernel’s filesystem and block I/O layers, as highlighted in coverage from ServeTheHome, significantly reduces latency. This matters for workloads such as real-time analytics and AI data preprocessing, which demand the fastest possible data access.
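
Kioxia has not published the programming interface for the module, so the following is only an analogy for the difference between the two access styles. It uses Python’s standard `mmap` module on an ordinary file: one function issues explicit seek/read calls, the other maps the file and slices it like an in-memory buffer. On a regular filesystem the mapped path still goes through the kernel page cache, so this shows the programming model, not the module’s actual data path or its latency.

```python
import mmap
import os
import tempfile

# Analogy only: an ordinary file, not the Kioxia device. mmap here still
# relies on the kernel page cache; it illustrates load/store-style access.

def read_with_syscalls(path: str, offset: int, length: int) -> bytes:
    # Block/file style: each access is an explicit seek + read system call.
    with open(path, "rb") as f:
        f.seek(offset)
        return f.read(length)


def read_with_mmap(path: str, offset: int, length: int) -> bytes:
    # Memory-semantic style: map the file once, then index it like a buffer.
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as m:
            return m[offset:offset + length]  # slicing copies into bytes


if __name__ == "__main__":
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(bytes(range(256)) * 16)  # 4 KiB of sample data
        path = tmp.name
    try:
        a = read_with_syscalls(path, 1000, 8)
        b = read_with_mmap(path, 1000, 8)
        print(a == b, list(b))
    finally:
        os.remove(path)
```

Both functions return the same bytes; the difference is that the mapped version lets application code treat storage as addressable memory, which is the model the module is designed to expose.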

Quenching AI’s Data Thirst

Cracking the ‘I/O Wall’

The demand for such a device is driven by the explosive growth of AI models. As models grow from millions to trillions of parameters, as detailed in analysis by McKinsey, CPUs and GPUs are often left idle while they wait for data. This “I/O wall” is a primary performance limiter in AI training and large-scale HPC simulations. Kioxia’s module is engineered to keep these processors fed with data, turning I/O-bound problems into compute-bound ones.
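
The shift from I/O-bound to compute-bound can be made concrete with a toy model. This sketch assumes data loading overlaps perfectly with computation, so each step takes as long as the slower of the two; the workload figures (0.1 s of compute and 2 GB of input per step) are hypothetical, while 14 GB/s and 64 GB/s are the SSD and module read rates cited in this article.

```python
# Toy model of the "I/O wall". Assumption: data loading is fully overlapped
# with compute, so each step lasts as long as the slower of the two.

def step_time_s(compute_s: float, bytes_per_step: float, bandwidth_gbs: float) -> float:
    io_s = bytes_per_step / (bandwidth_gbs * 1e9)  # time to fetch one step's data
    return max(compute_s, io_s)


def utilization(compute_s: float, bytes_per_step: float, bandwidth_gbs: float) -> float:
    # Fraction of each step the processor spends computing rather than waiting.
    return compute_s / step_time_s(compute_s, bytes_per_step, bandwidth_gbs)


if __name__ == "__main__":
    # Hypothetical workload: 0.1 s of compute and 2 GB of input per step.
    for bw in (14, 64):
        print(f"{bw} GB/s -> utilization {utilization(0.1, 2e9, bw):.0%}")
    # 14 GB/s -> utilization 70%
    # 64 GB/s -> utilization 100%
```

At 14 GB/s the hypothetical step is I/O-bound; at 64 GB/s the fetch fits inside the compute time and the bottleneck moves to the processor. Real pipelines rarely overlap this cleanly, so treat the result as an upper bound on the benefit.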

The Birth of Memory-Semantic Storage

This module helps define memory-semantic storage: a new tier in the data center hierarchy that sits between volatile DRAM and slower NVMe SSDs. This layer, often called Storage-Class Memory (SCM), provides a “warm” tier for datasets too large or too costly to hold in system RAM. The enterprise flash market is responding to this need: TrendForce reported a 28.1% quarterly revenue increase for the NAND Flash market in Q4 2023, largely driven by enterprise SSD demand. Kioxia’s device carves out a niche at the highest end of this market.
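
A simple way to picture the warm tier is a placement rule that puts each dataset in the fastest tier able to hold it. The sketch below is hypothetical: the 1 TB DRAM capacity is an assumed example, the 5 TB figure is the module’s stated capacity, and the NVMe tier is treated as effectively unbounded. Real systems would also weigh free space, access frequency, and cost.

```python
from dataclasses import dataclass

# Hypothetical tiering rule: fastest tier that can hold the whole dataset.

@dataclass
class Tier:
    name: str
    capacity_bytes: float


TIERS = [
    Tier("DRAM", 1e12),                    # assumed example server memory (1 TB)
    Tier("memory-semantic flash", 5e12),   # 5 TB module capacity from the article
    Tier("NVMe SSD", float("inf")),        # bulk tier, treated as unbounded here
]


def place_dataset(size_bytes: float, tiers: list) -> str:
    """Return the name of the first (fastest) tier large enough for the dataset."""
    for tier in tiers:  # tiers are ordered fastest first
        if size_bytes <= tier.capacity_bytes:
            return tier.name
    raise ValueError("dataset does not fit in any tier")


if __name__ == "__main__":
    for size in (0.5e12, 2e12, 20e12):
        print(f"{size / 1e12:g} TB -> {place_dataset(size, TIERS)}")
```

Datasets that overflow DRAM but stay under a few terabytes land in the middle tier, which is exactly the gap the module is meant to fill.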

Speed vs. Standards Showdown

Challenging CXL’s Memory Domain

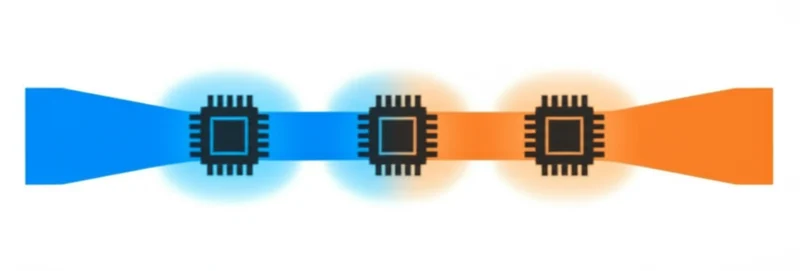

The most significant related technology is Compute Express Link (CXL), an open industry standard for memory expansion and sharing. As the CXL Consortium explains, CXL enables CPUs to access pools of memory with low latency. Competitors like Samsung are heavily invested in CXL, demonstrating products such as its CXL Memory Expander, which allows memory capacity and bandwidth to be scaled far beyond what is possible with traditional DIMM slots. Comparing Kioxia’s memory-semantic flash with CXL highlights a key architectural choice: Kioxia’s proprietary, direct-attached solution offers simplicity and high performance within a single server, while CXL provides a standardized ecosystem for memory pooling and sharing across multiple nodes.

Beyond Enterprise SSDs: A New Category

It is essential to differentiate this module from a faster SSD. Its 64 GB/s bandwidth is over four times that of the fastest PCIe 5.0 x4 enterprise SSDs, which top out around 14 GB/s. The architectural choice to use a PCIe x16 interface and bypass the NVMe stack places it in a different performance category. It is designed not for general-purpose storage but for memory expansion and workload acceleration, a distinction noted by industry analysts at Blocks & Files.
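
The bandwidth gap is easier to feel as time. This sketch computes how long one idealized full sequential pass over the module’s 5 TB would take at 64 GB/s versus a single ~14 GB/s PCIe 5.0 x4 SSD, assuming both sustain their peak read rate for the whole scan (decimal units throughout).

```python
# Idealized full-capacity scan time: capacity / sustained read bandwidth.
# Assumes peak sequential rate for the entire pass, decimal units (1 TB = 1e12 B).

CAPACITY_BYTES = 5e12  # 5 TB module capacity


def full_scan_seconds(capacity_bytes: float, bandwidth_gbs: float) -> float:
    return capacity_bytes / (bandwidth_gbs * 1e9)


if __name__ == "__main__":
    for label, bw in [("Kioxia module (64 GB/s)", 64),
                      ("PCIe 5.0 x4 SSD (~14 GB/s)", 14)]:
        print(f"{label}: {full_scan_seconds(CAPACITY_BYTES, bw):.0f} s")
    print(f"bandwidth ratio: {64 / 14:.2f}x")
    # Kioxia module (64 GB/s): 78 s
    # PCIe 5.0 x4 SSD (~14 GB/s): 357 s
    # bandwidth ratio: 4.57x
```

The roughly 4.6x ratio is where the “over four times” comparison comes from: a pass that takes about a minute and a quarter on the module takes close to six minutes on a single top-end x4 drive.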

Code and Compatibility Conundrum

Building the Missing Software Bridge

Despite its impressive hardware specifications, the module’s success hinges on software. Because it bypasses NVMe, it requires specialized drivers and APIs to enable its memory-semantic capabilities. Applications may need significant modification to move away from standard file I/O operations and take full advantage of direct, memory-mapped access. This software engineering effort is a considerable hurdle to adoption.
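
One common way to contain that porting effort is to hide the access path behind a small interface, so application logic does not change when the backend does. The sketch below is hypothetical: `ByteSource`, `StreamSource`, and `BufferSource` are illustrative names, and because no public API for the module is assumed, any byte buffer (for example `bytes`, `bytearray`, or an `mmap` object) stands in for a device region a vendor driver might expose.

```python
import io
from typing import BinaryIO, Protocol


class ByteSource(Protocol):
    def read_at(self, offset: int, length: int) -> bytes: ...


class StreamSource:
    """Conventional file-I/O style: seek + read on a file-like object."""

    def __init__(self, stream: BinaryIO) -> None:
        self._stream = stream

    def read_at(self, offset: int, length: int) -> bytes:
        self._stream.seek(offset)
        return self._stream.read(length)


class BufferSource:
    """Load/store style: index directly into a byte-addressable region.

    Hypothetical stand-in: any buffer object plays the role of a mapped
    device region; no vendor API is assumed.
    """

    def __init__(self, region) -> None:
        self._view = memoryview(region)

    def read_at(self, offset: int, length: int) -> bytes:
        return self._view[offset:offset + length].tobytes()


def sum_bytes(src: ByteSource, offset: int, length: int) -> int:
    # Application logic sees only the ByteSource interface, so swapping
    # backends does not require changing this function.
    return sum(src.read_at(offset, length))


if __name__ == "__main__":
    data = bytes(range(100))
    with io.BytesIO(data) as f:
        print(sum_bytes(StreamSource(f), 10, 5), sum_bytes(BufferSource(data), 10, 5))
```

Both calls print the same sum (60 for bytes 10 through 14). The design choice is that only the backend classes change when an application moves from file I/O to a memory-semantic path, which limits the rewrite to one layer.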

Slots, Standards, and Server Economics

The path to widespread use faces several practical challenges. The device requires a free PCIe 5.0 x16 slot—a high-value resource in servers often occupied by GPUs. Furthermore, as a proprietary solution, it lacks the interoperability of an open standard like CXL, presenting a single-vendor investment risk for customers. The total cost of ownership, factoring in both the hardware and necessary software development, will be a critical factor in its competition against CXL-based solutions and large-DRAM server configurations.

Memory’s Evolving Architecture

Kioxia’s high-bandwidth flash module is a tangible example of the ongoing convergence of memory and storage. It demonstrates a clear industry trajectory toward more fluid data architectures where different memory technologies are connected via high-speed interconnects. This approach, outlined by organizations like the Storage Networking Industry Association (SNIA), is essential for building data centers capable of handling next-generation AI. While challenges in software and standardization remain, this development pushes the boundaries of server performance. How will the industry balance the raw performance of proprietary, direct-attached solutions against the interoperability of open standards like CXL?
