Libby AI Feature Ignites Debate on Privacy vs. Librarian Curation

OverDrive has rolled out a new AI-powered discovery feature in its popular Libby library app, sparking a significant debate that pits the tech industry’s push for data-driven personalization against the foundational ethics of public libraries. The new feature, reportedly named “Inspire Me,” moves beyond traditional recommendation engines by using a Large Language Model (LLM) to offer conversational search capabilities. This development places one of the world’s largest digital library platforms at the center of a conflict between meeting user expectations set by commercial streaming services and upholding the library’s core commitments to privacy, intellectual freedom, and human-led curation. The integration of such sophisticated AI into a public service context is a notable test case for the ethical application of personalization technologies, and privacy concerns about the feature have become a central point of discussion among librarians and privacy advocates.

Key Points

• Libby’s new feature represents a technical shift from traditional collaborative filtering algorithms to an LLM capable of processing nuanced, conversational user queries.

• The implementation raises immediate ethical challenges, particularly concerning the American Library Association’s (ALA) “Code of Ethics” on patron privacy and the risk of algorithmic bias.

• This development is driven by a broader market trend where user expectations for personalization are shaped by commercial platforms like Netflix and Spotify.

• OverDrive’s massive scale, with 662 million digital checkouts in 2023, provides the extensive dataset required for training such an AI but also amplifies concerns over how much patron data OverDrive collects through Libby.

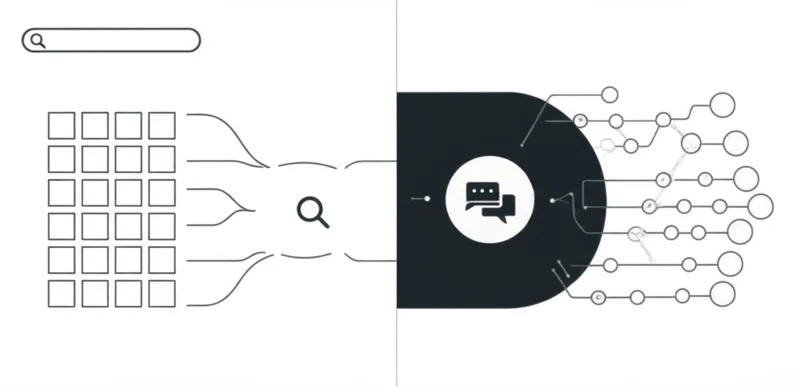

From Keywords to Conversations: Libby’s AI Leap

Libby’s AI feature marks a substantial technical evolution from the recommendation systems commonly used in digital platforms. Traditional engines rely on collaborative filtering (recommending based on similar users) or content-based filtering (recommending based on item attributes). This new implementation incorporates an LLM, enabling a far more sophisticated, semantic understanding of user intent.
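To make the distinction between the two traditional approaches concrete, here is a minimal sketch in Python. It is not OverDrive’s implementation; the reader names, loan histories, tags, and the choice of Jaccard overlap as the similarity measure are all illustrative assumptions.

```python
# Hypothetical toy data: which titles each reader has borrowed.
loans = {
    "ana": {"Malice", "The Devotion of Suspect X", "Out"},
    "ben": {"Malice", "The Devotion of Suspect X", "Six Four"},
    "cy": {"Dune", "Hyperion"},
}

# Hypothetical item attributes used for content-based matching.
tags = {
    "Malice": {"mystery", "japan"},
    "The Devotion of Suspect X": {"mystery", "japan"},
    "Out": {"crime", "japan", "violent"},
    "Six Four": {"mystery", "japan", "procedural"},
    "Dune": {"sci-fi"},
    "Hyperion": {"sci-fi"},
}


def jaccard(a, b):
    """Overlap between two sets, from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def collaborative(user):
    """Rank unread titles by how similar their borrowers are to this reader."""
    scores = {}
    for other, titles in loans.items():
        if other == user:
            continue
        sim = jaccard(loans[user], titles)
        if sim == 0.0:
            continue  # readers with nothing in common contribute no signal
        for title in titles - loans[user]:
            scores[title] = scores.get(title, 0.0) + sim
    return sorted(scores, key=lambda t: (-scores[t], t))


def content_based(user):
    """Rank unread titles by tag overlap with the reader's past loans."""
    profile = set().union(*(tags[t] for t in loans[user]))
    unread = set(tags) - loans[user]
    return sorted(unread, key=lambda t: (-jaccard(profile, tags[t]), t))


print(collaborative("ana"))
print(content_based("ana"))
print(collaborative("cy"))
```

In this toy data, a reader whose loans overlap with no one else’s gets no collaborative suggestions at all, while the content-based ranking still returns every unread title, ordered by tag overlap. Neither function can act on a free-text request, which is the gap the LLM-based approach is meant to close.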

This architecture allows users to make complex, natural language queries that older systems cannot process, such as, “Find me a fast-paced mystery set in Japan that isn’t too violent, similar to the style of Keigo Higashino.” Interpreting such nuanced requests typically depends on training over a massive corpus of book metadata, reviews, and anonymized user data, according to research on LLMs for recommendation systems. As detailed in a survey by Google Research on conversational recommendation systems, the technical challenge lies in managing dialogue state and generating relevant suggestions in real time, a resource-intensive process that demands high-quality, broad training data.

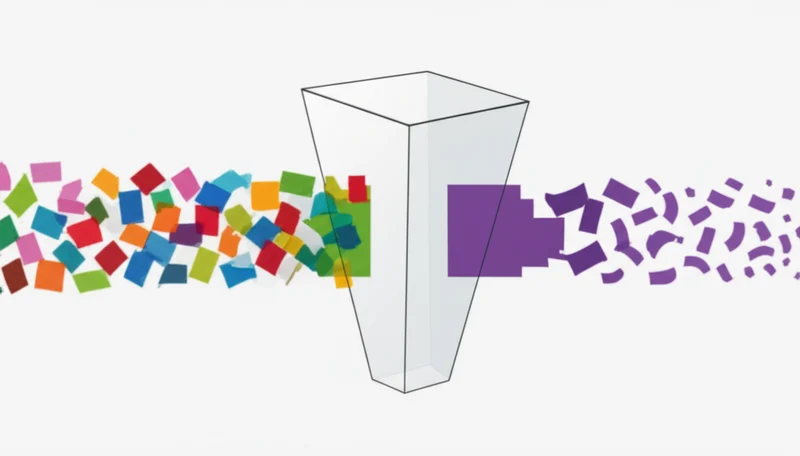

Curation Crossroads: Algorithms vs. Expertise

The introduction of this AI tool creates a direct conflict with the professional ethos of librarianship. A core function for librarians is curation: the thoughtful selection of materials to broaden a community’s horizons. The fear is that pitting Libby’s AI against librarian curation will favor automation that inadvertently narrows user discovery.

Algorithmic systems can create “filter bubbles” by over-recommending popular titles, a phenomenon that can reduce content diversity over time, as demonstrated in a 2019 ACM study. This challenges the library’s role in promoting intellectual freedom by providing access to a wide range of viewpoints. These professional concerns are echoed by the American Library Association itself, which in an analysis of AI trends warns against “ceding professional judgment to automated systems.” Furthermore, patron privacy is a cornerstone of library ethics. The ALA Code of Ethics mandates the protection of user confidentiality. An AI that analyzes reading habits and search queries collects sensitive data, raising critical questions about how that information is stored and used, a central theme in the backlash against the “Inspire Me” feature.
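The narrowing effect described above can be quantified in simple ways. The sketch below is not the method of the ACM study cited here; it shows two commonly used illustrative measures, catalog coverage and the share of recommendation slots taken by the most-recommended titles, with hypothetical function names and toy data.

```python
from collections import Counter


def catalog_coverage(recommendations, catalog_size):
    """Fraction of the catalog that appears in at least one recommendation list."""
    distinct = {title for recs in recommendations for title in recs}
    return len(distinct) / catalog_size


def top_share(recommendations, k=1):
    """Share of all recommendation slots taken by the k most-recommended titles."""
    counts = Counter(title for recs in recommendations for title in recs)
    total = sum(counts.values())
    top = sum(n for _, n in counts.most_common(k))
    return top / total if total else 0.0


# Hypothetical recommendation lists shown to three patrons from a 10-title catalog.
shown = [["A", "B"], ["A", "C"], ["A", "B"]]
print(catalog_coverage(shown, catalog_size=10))
print(top_share(shown, k=1))
```

Low coverage combined with a high top share would indicate that a small set of popular titles dominates what patrons are shown, which is the pattern librarians worry about.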

Digital Expectations: The Personalization Pressure

Libby’s move into advanced AI is not happening in a vacuum but is a response to immense market pressures and established user expectations. Commercial platforms have set the standard for personalization; Netflix claims its system influences 80% of viewer hours, while Spotify’s AI-driven playlists are an industry benchmark. This trend is fueled by a massive market for AI in media, valued at USD 23.6 billion in 2023 and projected to grow at a 28.1% CAGR according to Grand View Research.

This commercial momentum creates pressure for all digital platforms to adopt similar technologies. OverDrive’s scale, which saw a 19% increase to 662 million digital checkouts in 2023, provides the necessary data to build a competitive AI model for a large and engaged digital readership; a Pew Research Center survey found that library card holders are more likely than non-holders to be readers in any format. The success of platforms like The StoryGraph, which gained popularity by offering nuanced, human-tagged discovery as reported by The New York Times, also shows a clear user demand for better recommendation tools. Libby’s feature is an attempt to meet this demand through automation, leveraging its vast user base to compete in an increasingly AI-driven content landscape and raising questions about the ethics of AI in public libraries.

Between Pages: Ethics and Algorithms Collide

Libby’s introduction of an LLM-powered discovery tool is a significant development that crystallizes the tension between technological innovation and public service values. The system’s documented capabilities for understanding complex user queries offer a clear advancement over previous recommendation engines. However, this progress is directly challenged by the library’s ethical mandates for privacy, intellectual freedom, and the value of human curation. This implementation will serve as a critical test case for whether data-hungry AI can be aligned with principles that prioritize user rights over user engagement. Can an algorithm be truly engineered to champion diversity and privacy, or will the logic of personalization inevitably narrow the pages we turn to next?
