Manus AI Agent Shows Promise but Faces Reliability and Accuracy Challenges in Early Testing

If you’ve been following tech news lately, you’ve probably heard about Manus. This new “agentic” AI platform from Chinese company The Butterfly Effect has been creating quite a stir since its preview launch. Some tech enthusiasts are calling it the “most impressive AI tool” they’ve ever used. But with all the excitement and comparisons to giants like OpenAI and Anthropic, we need to ask: Is Manus really as revolutionary as people claim, or is this just another case of AI overhype?

Explosion of Interest: The Manus Launch

The buzz around Manus has been nothing short of incredible. In just a few days, their Discord server reportedly grew to over 138,000 members. People were so desperate to get their hands on invite codes that they were apparently buying them for thousands of dollars on Chinese reseller apps like Xianyu. That’s some serious FOMO for a product that isn’t even fully available yet!

When the head of product at Hugging Face calls something “the most impressive AI tool I’ve ever tried,” people pay attention. AI policy researcher Dean Ball even described Manus as the “most sophisticated computer using AI.” With endorsements like these from respected voices in AI, it’s no wonder everyone wants in.

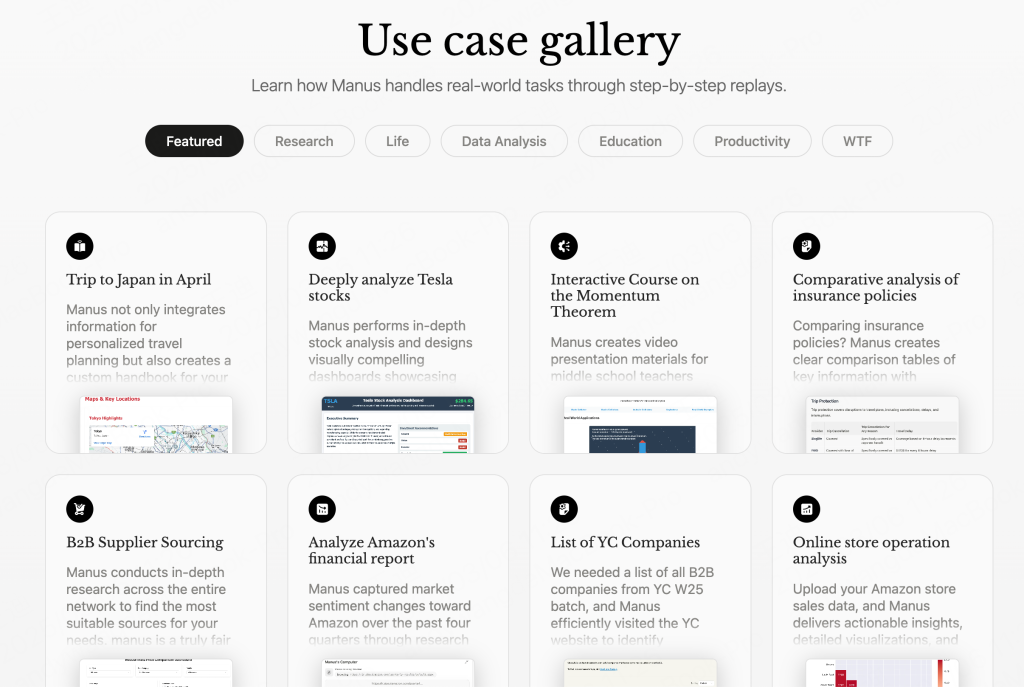

The claims made by The Butterfly Effect on their website are pretty bold too. They showcase examples of Manus apparently doing everything from buying real estate to programming video games. Combine these lofty promises with limited access, and you’ve got a recipe for massive hype.

Even Jack Dorsey seemed impressed, replying with a simple tweet to a post about Manus.

What Makes Manus Tick: The Tech Behind the Hype

Manus isn’t starting from scratch with its technology. From what we’re hearing on social media, it’s built on a mix of existing models like Anthropic’s Claude and Alibaba’s Qwen. These are already powerful language models, known for their impressive natural language abilities. The secret sauce seems to be in how The Butterfly Effect has blended and fine-tuned these models, though they’re keeping those details close to the chest.

The big deal with Manus is that it’s “agentic.” Unlike your standard chatbot that just responds to what you ask, Manus is designed to act more like a personal assistant that takes initiative. It’s supposed to plan and execute tasks to achieve whatever goal you set. In theory, this means it could research topics, analyze data, and create reports almost autonomously—much more than just answering your questions.

Behind the scenes, Manus likely uses a system of specialized “sub-agents” working together. Think of it like having different experts handling different parts of a task: one for web searching, another for analyzing the information, and another for writing up the results. This modular approach could be what lets Manus handle complex tasks like analyzing financial reports or conducting research.
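To make the sub-agent idea concrete, here is a minimal sketch of how such a pipeline could be wired together. This is not Manus’s actual design, which The Butterfly Effect hasn’t published. Every name in it (`TaskState`, `search_agent`, `analysis_agent`, `writer_agent`, `plan`, `run`) is hypothetical, and each sub-agent is a stand-in that only manipulates strings rather than calling a model or browsing the web.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class TaskState:
    """Shared scratchpad handed from one sub-agent to the next (hypothetical)."""
    goal: str
    notes: List[str] = field(default_factory=list)
    report: str = ""


def search_agent(state: TaskState) -> TaskState:
    # Stand-in for web search; a real agent would call a search tool here.
    state.notes.append(f"source about: {state.goal}")
    return state


def analysis_agent(state: TaskState) -> TaskState:
    # Stand-in for analysis; it only marks each note as reviewed.
    state.notes = [f"reviewed({note})" for note in state.notes]
    return state


def writer_agent(state: TaskState) -> TaskState:
    # Stand-in for report writing; it joins the reviewed notes into one string.
    state.report = f"Report on {state.goal}: " + "; ".join(state.notes)
    return state


# Registry mapping a plan step to the sub-agent that handles it.
SUB_AGENTS: Dict[str, Callable[[TaskState], TaskState]] = {
    "search": search_agent,
    "analyze": analysis_agent,
    "write": writer_agent,
}


def plan(goal: str) -> List[str]:
    # Fixed plan for illustration; an agentic system would generate this per goal.
    return ["search", "analyze", "write"]


def run(goal: str) -> TaskState:
    # Execute each planned step in order, passing the shared state along.
    state = TaskState(goal=goal)
    for step in plan(goal):
        state = SUB_AGENTS[step](state)
    return state


if __name__ == "__main__":
    print(run("quarterly earnings").report)
```

The point of the sketch is the shape, not the contents: a planner picks steps, and each step is handed to a specialist that reads and updates shared state. It also hints at why reliability is hard, since a failure or loop in any one step stalls the whole chain.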

How Does Manus Stack Up Against the Competition?

A lot of the excitement around Manus comes from claims that it outperforms existing tools, especially those from OpenAI. In a viral video on X, Yichao “Peak” Ji (a research lead for Manus) suggested that the platform beats tools like OpenAI’s Deep Research and Operator. He specifically mentioned that Manus scores better on the GAIA benchmark, which tests how well AI can navigate the web, use software, and solve real-world problems.

GAIA is no joke—it’s a tough test that pushes AI beyond simple Q&A into complex reasoning and planning. If Manus really does outperform Deep Research here, that would be impressive. But we should take these claims with a grain of salt. Benchmark tests don’t always translate to real-world usefulness, and we don’t have all the details on exactly how these tests were run.

Real Users, Real Experiences: Mixed Reviews

This is where things get interesting. Despite all the hype and impressive benchmark claims, actual users have had pretty mixed experiences with Manus so far.

Derya Unutmaz, MD, shared on X that Manus couldn’t complete a task that Deep Research handled in under 15 minutes.

Alexander Doria, co-founder of AI startup Pleias, ran into error messages and endless loops during his tests. Other users on X have pointed out that Manus sometimes gets facts wrong, doesn’t consistently cite sources, and misses information that’s easily available online.

These real-world experiences highlight some pretty significant issues:

- Reliability: Crashes and error messages suggest the system isn’t stable enough for everyday use yet.

- Accuracy: Factual mistakes and poor citation practices raise red flags about how trustworthy Manus’s information really is.

- Efficiency: When it takes longer to complete tasks than other tools (or fails to complete them at all), that’s a problem.

- Completeness: Missing easily available information suggests its web browsing capabilities aren’t as robust as claimed.

Ashutosh Shrivastava offered a more balanced take after spending some time with the platform:

Honest opinion after trying Manus AI for the last 3 days, here’s the good and the bad.

Good:

- The research it does on the internet and the reports it generates are incredible.

- Its ability to run scripts behind the scenes to execute tasks is impressive.

- The plans it…

AshutoshShrivastava (@ai_for_success), March 9, 2025

The original article’s author also tried some basic tasks like ordering food and booking flights. The results? Crashes, incomplete processes, and broken links. Not exactly the seamless experience you’d expect from a supposedly revolutionary AI assistant.

How the Hype Machine Works: Exclusivity, Media, and Misinformation

The Manus phenomenon isn’t just about technology—it’s also a fascinating case study in how hype builds around tech products. The Butterfly Effect played this game well. By making access invitation-only, they created artificial scarcity that drove demand through the roof. Nothing makes people want something more than being told they can’t have it!

Chinese media jumped on the bandwagon too. QQ News proudly called Manus “the pride of domestic products.” Meanwhile, AI influencers were busy spreading some questionable information about what Manus can actually do. One viral video supposedly showed Manus controlling multiple smartphone apps, but Ji later confirmed this wasn’t actually a Manus demo at all.

Other influential AI accounts on X tried to draw parallels between Manus and Chinese AI company DeepSeek, comparisons that weren’t entirely accurate. Unlike DeepSeek, The Butterfly Effect didn’t develop their own in-house models. And while DeepSeek has been relatively open with their technology, Manus hasn’t been as transparent—at least not yet.

This perfect storm of exclusivity, media hype, influential endorsements, and some outright misinformation created expectations that would be hard for any product to live up to, especially one still in beta.

What The Butterfly Effect Is Saying

To their credit, The Butterfly Effect seems to recognize the gap between expectations and reality. A Manus spokesperson told TechCrunch via DM: “As a small team, our focus is to keep improving Manus and make AI agents that actually help users solve problems […] The primary goal of the current closed beta is to stress-test various parts of the system and identify issues. We deeply appreciate the valuable insights shared by everyone.”

This is a pretty standard response for a product in beta, but it does acknowledge that they’re still working out the kinks. The big challenges they’ll need to tackle include:

- Fixing Technical Issues: They need to squash bugs, improve stability, and make the whole system more reliable.

- Setting Realistic Expectations: Being upfront about what Manus can and can’t do will help build trust.

- Proving Real Value: Eventually, Manus needs to show it can actually complete useful tasks reliably.

- Keeping Up With Competitors: The agentic AI space is getting crowded, and they’ll need to continuously innovate.

The Bigger Picture: What’s Happening with Agentic AI

The Manus story reflects what’s happening in the broader agentic AI world. The potential is undeniably exciting—who wouldn’t want AI assistants that can actually get things done for you? But the reality is that building truly reliable AI agents is incredibly difficult. They need advanced language processing, reasoning, planning, and the ability to interact with all kinds of software and websites.

Progress in this field is likely to be bumpy, with breakthroughs followed by periods of refining and problem-solving. The Manus situation reminds us to approach new AI technologies with healthy skepticism and to judge them based on what they can actually do, not just what they’re claimed to do.

The Bottom Line: Promising, But Not There Yet

Manus is an intriguing glimpse of what agentic AI might become, but in its current form, it’s clearly still a work in progress. The platform shows promise in areas like research and report generation, but struggles with reliability, accuracy, and completing basic tasks consistently.

The massive hype—fueled by exclusivity, media coverage, and some misinformation—has created expectations that the current version simply can’t meet. The Butterfly Effect acknowledges they’re still in early stages, and their focus on improvement is encouraging. But they’ve got their work cut out for them if they want Manus to live up to its initial billing.

The coming months will be crucial for Manus. Can it evolve from an interesting but flawed beta into the revolutionary AI assistant it wants to be? We’ll be watching with interest. The story of Manus is still being written, and whether it becomes a major player in the AI landscape or just another flash in the pan remains to be seen. One thing’s for sure—the race to build truly effective AI agents is just heating up.
