New AI Agent Benchmark: LangGraph vs CrewAI for Production

A new benchmark analysis of leading AI agent frameworks has crystallized a fundamental challenge for developers: choosing between the rapid development speed that suits prototyping and the high-consistency output that production requires. The data-driven study by Lukasz Grochal evaluates prominent tools such as LangGraph, CrewAI, and Microsoft’s new Agent Framework, revealing stark tradeoffs in performance, cost, and reliability. The benchmark gives teams a quantitative guide for deciding which framework to build on.

The findings arrive as the AI agent market is set for explosive growth, with Gartner predicting that 40% of enterprise applications will incorporate task-specific agents by the end of 2026. The analysis underscores that the choice is not about finding a single “best” tool, but about aligning a framework’s specific architectural strengths with a project’s primary goal, whether that’s production stability or initial development velocity.

Key Points

- A new benchmark quantifies a core tradeoff between AI frameworks optimized for prototyping speed versus production consistency.

- Microsoft’s Agent Framework demonstrates the highest output consistency and token efficiency, making it a strong production candidate.

- CrewAI excels in rapid development due to its intuitive API but incurs significantly higher operational token costs.

- LangGraph is positioned as the most mature production-ready option, evidenced by its 1.0 release and major enterprise adoption.

The Production-Ready Equation

The analysis argues that for enterprise applications, output consistency is a more critical metric than average quality. A framework that produces predictable results minimizes the need for complex error handling, and the analysis presents unpredictable output as a key reason an estimated 95% of generative AI pilots fail to reach production. The MS Agent Framework’s consistency result is particularly notable: a standard deviation of just 0.10, which the analysis attributes to its deterministic, sequential pipeline architecture. In contrast, AutoGen’s more conversational model produces the highest variance, with a standard deviation of 0.45.
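To illustrate why standard deviation can matter more than the average, here is a minimal Python sketch. The framework names and scores are hypothetical and are not taken from the benchmark; they are chosen so that both series share the same mean while differing in spread.

```python
from statistics import mean, stdev

# Hypothetical quality scores for repeated runs of the same task.
# Illustrative values only; these are NOT benchmark results.
runs = {
    "steady_framework": [7.9, 8.0, 8.1, 8.0, 7.9, 8.1],
    "erratic_framework": [7.2, 8.9, 7.6, 8.6, 7.4, 8.3],
}


def consistency_report(scores):
    """Return (mean, sample standard deviation) for a list of run scores."""
    return mean(scores), stdev(scores)


report = {name: consistency_report(scores) for name, scores in runs.items()}

for name, (avg, spread) in report.items():
    print(f"{name}: mean={avg:.2f}, stdev={spread:.2f}")
```

Both hypothetical series average the same score, yet the second swings far more from run to run. That kind of swing is what forces extra validation and retry logic in production code.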

The choice between a production-oriented and a prototyping-oriented framework also has direct financial implications. The benchmark reveals a sharp divide in operational efficiency, driven by architectural choices. CrewAI illustrates the tradeoff between token cost and development speed: it consumes nearly four times more tokens than the most efficient framework in the benchmark. Its role-playing architecture costs approximately $220 per 1,000 runs, compared with roughly $60 for the MS Agent Framework, a difference the study highlights as crucial for any application intended to operate at scale.
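The cost gap can be worked through with simple arithmetic. The sketch below uses the two per-1,000-run figures quoted above. The monthly run volume is a hypothetical workload chosen for illustration, and the linear scaling is an assumption (real pricing may vary with model, caching, and prompt length).

```python
# Approximate per-1,000-run costs in USD, as quoted in the article.
COST_PER_1K_RUNS = {"CrewAI": 220.0, "MS Agent Framework": 60.0}


def projected_cost(framework, runs):
    """Scale the quoted per-1,000-run cost linearly to `runs` executions."""
    return COST_PER_1K_RUNS[framework] * runs / 1000


ratio = COST_PER_1K_RUNS["CrewAI"] / COST_PER_1K_RUNS["MS Agent Framework"]

# Hypothetical workload (an assumption, not a benchmark figure).
monthly_runs = 500_000
crewai_monthly = projected_cost("CrewAI", monthly_runs)
ms_monthly = projected_cost("MS Agent Framework", monthly_runs)

print(f"Cost ratio: {ratio:.2f}x")
print(f"CrewAI: ${crewai_monthly:,.0f} per month")
print(f"MS Agent Framework: ${ms_monthly:,.0f} per month")
print(f"Difference: ${crewai_monthly - ms_monthly:,.0f} per month")
```

Under these assumptions the per-run gap, which looks small in a demo, grows into a large recurring line item once volume reaches production levels.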

From Beta to Enterprise Scale

Performance metrics alone do not determine a framework’s suitability for enterprise deployment. The analysis highlights ecosystem maturity as a decisive factor, where LangGraph emerges as the clear leader. As the only benchmarked framework with a 1.0 General Availability (GA) release, it offers the stability and long-term support necessary for production systems. Its massive adoption, reflected in 34.5 million monthly downloads reported in early 2026, has cultivated a robust community and tooling ecosystem.

This production readiness is validated by its integration into enterprise platforms like Teradata’s Enterprise AgentStack. Conversely, the MS Agent Framework, despite its superior benchmark scores, remains in beta with a GA release expected around March 2026. This status makes it a high-potential but higher-risk choice for current production deployments, as its API is still subject to change.

Choosing Your Agent Architecture

The benchmark data provides a practical guide for matching a framework to a specific task. For complex, production-grade workflows requiring long-term stability, LangGraph is the recommended choice. The benchmark highlights its graph-based model as explicitly designed for applications with intricate branching, conditional logic, and cycles, offering developers granular control. Comparisons of LangGraph and CrewAI for production use often center on this tradeoff between control and speed.
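To make "branching, conditional logic, and cycles" concrete, the following is a plain-Python sketch of a graph-style workflow. It does not use LangGraph's API. The node names, the approval rule, and the `run_graph` helper are all hypothetical and exist only to show the control-flow shape such a framework manages.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]


def draft(state: State) -> State:
    # Each pass through this node produces a new draft version.
    state["attempts"] = state.get("attempts", 0) + 1
    state["draft"] = f"draft v{state['attempts']}"
    return state


def review(state: State) -> State:
    # Hypothetical rule: the reviewer approves on the third attempt.
    state["approved"] = state["attempts"] >= 3
    return state


def publish(state: State) -> State:
    state["published"] = state["draft"]
    return state


def route_after_review(state: State) -> str:
    # Conditional edge: loop back to "draft" until the review passes.
    return "publish" if state["approved"] else "draft"


NODES: Dict[str, Callable[[State], State]] = {
    "draft": draft,
    "review": review,
    "publish": publish,
}
EDGES: Dict[str, Callable[[State], str]] = {
    "draft": lambda s: "review",
    "review": route_after_review,
    "publish": lambda s: "END",
}


def run_graph(start: str, state: State, max_steps: int = 20) -> State:
    """Walk the graph from `start` until END, guarding against runaway cycles."""
    node, steps = start, 0
    while node != "END":
        if steps >= max_steps:
            raise RuntimeError("step limit reached; possible runaway cycle")
        state = NODES[node](state)
        node = EDGES[node](state)
        steps += 1
    return state


result = run_graph("draft", {})
print(result["published"], "after", result["attempts"], "attempts")
```

The explicit routing function and step limit are the kind of granular control the article credits to a graph model: the developer decides exactly when the workflow loops and when it must stop.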

CrewAI, on the other hand, is the champion of development velocity. Its task-based API features the lowest boilerplate, enabling teams to build and demonstrate multi-agent systems quickly, aligning with its reputation for “‘team of agents’ setups with role specialization.” For projects where raw performance and cost-efficiency are paramount, the MS Agent Framework presents a compelling, if riskier, option. Its simple, sequential architecture makes it highly predictable and easy to debug, positioning it as a strong future contender once it reaches general availability.

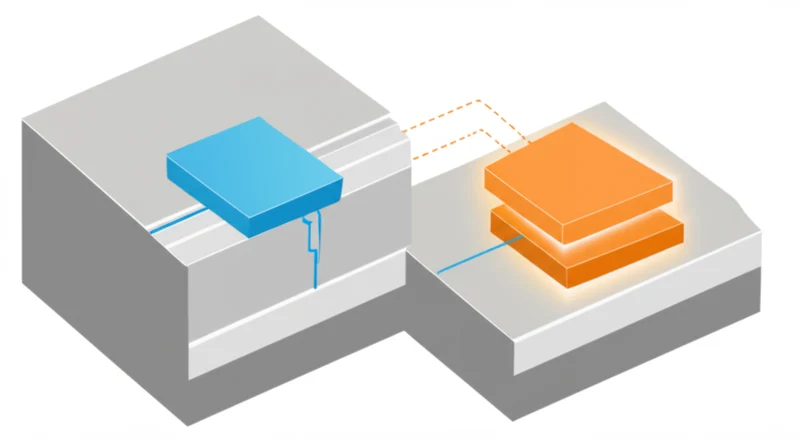

The Rise of the Hybrid Stack

The framework debate is occurring within a broader market shift away from single-solution architectures. Enterprises are increasingly building heterogeneous platforms, or “multi-tool” stacks, that leverage the strengths of different frameworks for different tasks. The Teradata Enterprise AgentStack, which incorporates LangGraph, CrewAI, and Flowise, exemplifies this pragmatic approach, acknowledging that no single tool is optimal for every use case.

This trend is part of a market bifurcation between pro-code frameworks and proprietary low-code platforms. Microsoft’s strategy reflects this, offering both the pro-code MS Agent Framework and the low-code Microsoft Copilot Studio Agent Builder, which features over 1,200 data connectors for business automation. This maturation caters to diverse needs, from deep developer customization to rapid deployment by business users, as the global agent market is projected to surge to $52.62 billion by 2030.

A Framework for Every Function

The 2026 AI agent landscape is not a race to crown a single winner but the formation of a specialized toolkit. The latest data-driven analysis confirms that the optimal choice is contingent on a project’s most critical constraint. By starting with a clear understanding of whether the priority is production stability, development speed, or operational cost, teams can select the framework that explicitly optimizes for that goal. The central question for developers is no longer “What is the best framework?” but “Which framework is the right tool for this specific job?”
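The article's guidance can be summarized as a small lookup. This is only a sketch of the article's own recommendations, not a substitute for evaluating a framework against a real workload. The priority keys and the `recommend` helper are hypothetical names.

```python
# Mapping of primary project constraint to the framework the article
# associates with it. Keys are hypothetical labels chosen for this sketch.
RECOMMENDATIONS = {
    "production_stability": "LangGraph",
    "development_speed": "CrewAI",
    "operational_cost": "MS Agent Framework",
}

# Caveat stated in the article: this framework was still in beta.
CAVEATS = {
    "MS Agent Framework": "in beta at the time of the article; API may change",
}


def recommend(priority):
    """Return (framework, caveat or None) for a given primary constraint."""
    if priority not in RECOMMENDATIONS:
        raise ValueError(f"unknown priority: {priority!r}")
    framework = RECOMMENDATIONS[priority]
    return framework, CAVEATS.get(framework)


for priority in RECOMMENDATIONS:
    framework, caveat = recommend(priority)
    note = f" ({caveat})" if caveat else ""
    print(f"{priority}: {framework}{note}")
```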
