Nvidia's Blackwell Platform in Full Production, Powers AI Reasoning at GTC 2025

SAN JOSE, Calif. — Nvidia CEO Jensen Huang took center stage at the SAP Center on Tuesday morning, clad in his signature leather jacket and speaking without a teleprompter, to deliver one of the technology industry’s most anticipated keynotes. The GPU Technology Conference (GTC) 2025, which Huang dubbed the “Super Bowl of AI,” comes at a pivotal moment for both Nvidia and the artificial intelligence industry as a whole.

“What an amazing year it was, and we have a lot of incredible things to talk about,” Huang told the packed arena, addressing an audience that has grown significantly as AI has evolved from niche technology to transformative force across industries. The stakes were particularly high following market turbulence triggered by Chinese startup DeepSeek’s highly efficient R1 reasoning model, which sent Nvidia’s stock tumbling earlier this year amid concerns that demand for its premium GPUs could weaken.

Against this backdrop, Huang presented a comprehensive vision for Nvidia’s future, outlining a clear roadmap for data center computing, advancements in AI reasoning, and ambitious moves into robotics and autonomous vehicles. Nvidia’s stock traded down throughout the presentation, closing more than 3% lower for the day.

“The market reaction reflects a degree of ‘buy the rumor, sell the news,’” said Michael Diamond, senior technology analyst at Baird. “Investors were likely anticipating even more groundbreaking reveals, given Nvidia’s recent stock performance.”

Huang’s message was clear: AI isn’t slowing down, and neither is Nvidia. Here are the five most significant takeaways from GTC 2025:

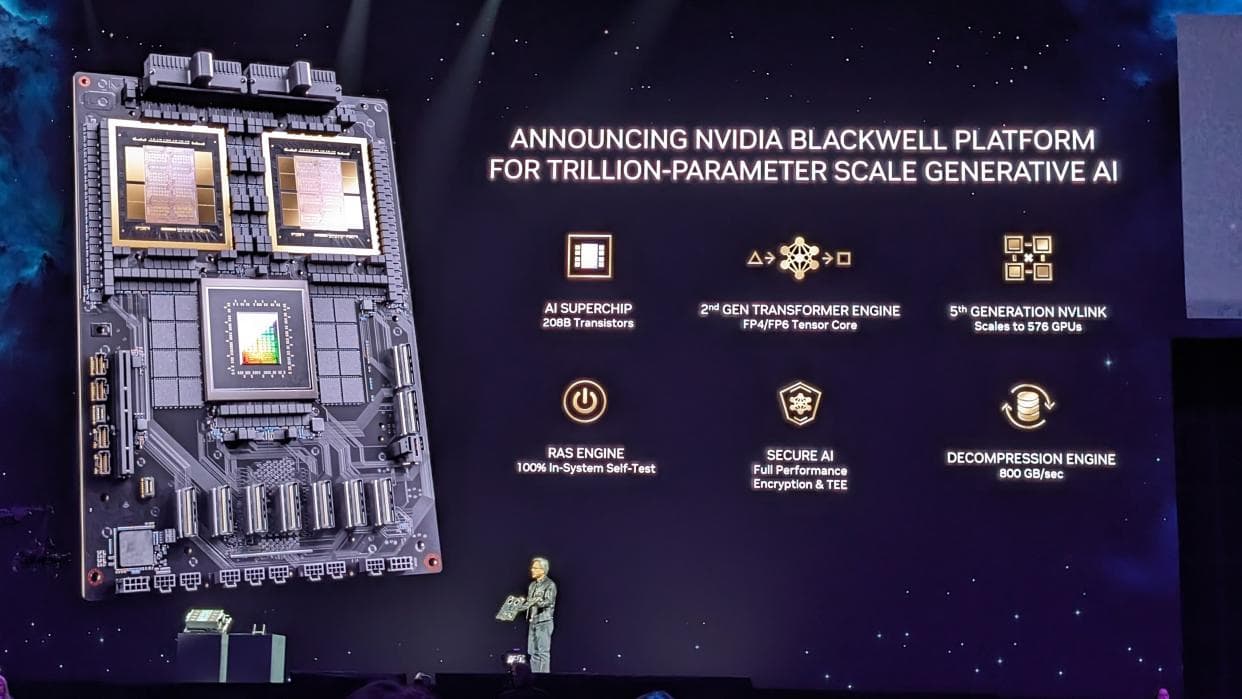

Blackwell Platform: Powering the Age of AI Reasoning

The centerpiece of Nvidia’s AI computing strategy, the Blackwell platform, is now in “full production,” according to Huang, who emphasized that “customer demand is incredible.” This marks a significant milestone after what Huang had previously described as a “hiccup” in early production.

Huang made a striking comparison between Blackwell and its predecessor, Hopper: “Blackwell NVLink 72 with Dynamo is 40 times the AI factory performance of Hopper.” This performance leap is crucial for inference workloads, which Huang positioned as “one of the most important workloads in the next decade as we scale out AI.”

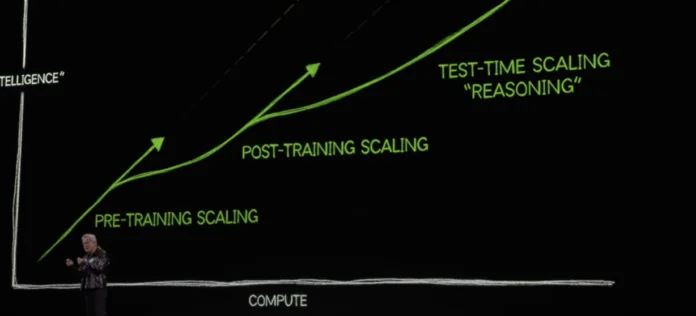

The timing of these performance gains is critical, as reasoning AI models require substantially more computation than traditional large language models. Huang demonstrated this by comparing a traditional LLM’s approach to a wedding seating arrangement (439 tokens, but incorrect) versus a reasoning model’s approach (nearly 9,000 tokens, and correct).
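The gap between the two token counts can be made concrete with a short calculation. The sketch below is illustrative only: it uses the figures quoted in the demo, treats "nearly 9,000" as exactly 9,000, and the function and constant names are hypothetical. It measures token count alone, which is a rough proxy for inference compute rather than an exact one.

```python
# Token counts quoted in the keynote demo. The reasoning figure was given as
# "nearly 9,000", so 9,000 here is an approximation (assumption).
TRADITIONAL_TOKENS = 439
REASONING_TOKENS = 9_000


def token_ratio(reasoning: int, traditional: int) -> float:
    """Return how many times more tokens the reasoning run generated."""
    if traditional <= 0:
        raise ValueError("traditional token count must be positive")
    return reasoning / traditional


ratio = token_ratio(REASONING_TOKENS, TRADITIONAL_TOKENS)
print(f"Reasoning run generated about {ratio:.1f}x as many tokens")
```

On these numbers the reasoning model produced roughly 20 times as many tokens to reach a correct answer, which is the kind of multiplier behind Huang's argument about rising inference demand.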

“The amount of computation we have to do in AI is so much greater as a result of reasoning AI and the training of reasoning AI systems and agentic systems,” Huang explained, directly addressing the challenge posed by more efficient models like DeepSeek’s.

“As AI tackles more complex tasks, the overall demand for computation will continue to grow, even if individual models become more efficient,” stated Dr. Emily Carter, a leading AI researcher at Stanford University.

Rubin Architecture: A Roadmap for the Future of AI Infrastructure

In an unusual move for a hardware company, Huang laid out a detailed roadmap for future GPU architectures through 2027, giving enterprise customers and cloud providers confidence in Nvidia’s long-term trajectory. This included details of the Nvidia Rubin architecture.

“We have an annual rhythm of roadmaps that has been laid out for you so that you could plan your AI infrastructure,” Huang stated, emphasizing the importance of predictability for customers making substantial capital investments.

The roadmap begins with Blackwell Ultra in the second half of 2025, offering 1.5 times the AI performance of the current Blackwell chips. It will be followed in the second half of 2026 by Vera Rubin, named after the astronomer whose measurements of galaxy rotation provided key evidence for dark matter. Rubin will feature a new CPU that’s twice as fast as the current Grace CPU, along with new networking architecture and memory systems.

“Basically everything is brand new, except for the chassis,” Huang explained about the Vera Rubin platform, indicating a complete architectural overhaul.

The roadmap extends to Rubin Ultra in the second half of 2027, which Huang described as an “extreme scale up” offering 14 times more computational power than current systems. “You can see that Rubin is going to drive the cost down tremendously,” he noted, addressing concerns about AI infrastructure economics.

“Nvidia’s roadmap provides a clear signal to the market: they are not resting on their laurels,” said Dr. James Chen, professor of computer science at MIT. “They are committed to continuous innovation and maintaining their leadership position in the AI hardware space.”

Dynamo: The Operating System for AI Factories

One of the most significant announcements was Nvidia Dynamo, an open-source software system designed to optimize AI inference. Huang described it as “essentially the operating system of an AI factory,” drawing a parallel to how traditional data centers rely on operating systems to orchestrate enterprise applications.

Dynamo addresses the complex challenge of managing AI workloads across distributed GPU systems, handling tasks like pipeline parallelism, tensor parallelism, expert parallelism, in-flight batching, disaggregated inferencing, and workload management.
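Two of the listed techniques, disaggregated inferencing and in-flight batching, can be illustrated with a toy scheduler. The sketch below is not Dynamo's API or implementation; every name in it (`Request`, `prefill`, `serve`, `max_batch`) is hypothetical, and it runs in a single process purely to show the idea. It assumes each request needs at least one generated token. Prefill (processing the prompt) is modeled as a separate step from decode (generating tokens one at a time), and a waiting request is admitted as soon as a decode slot frees up rather than waiting for the whole batch to finish.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    request_id: str
    prompt_tokens: int
    max_new_tokens: int  # assumed to be >= 1
    generated: int = 0


def prefill(request: Request) -> int:
    """Stand-in for the prompt-processing phase.

    In a disaggregated setup this would run on a separate pool of workers
    and hand its cached state to a decode worker. Here it only returns the
    length of that cached state.
    """
    return request.prompt_tokens


def serve(requests, max_batch=2):
    """Run a toy decode loop with in-flight batching.

    Returns the order in which requests finished and the number of decode
    steps taken.
    """
    waiting = deque(requests)
    active = []
    finished = []
    steps = 0
    while waiting or active:
        # In-flight batching: fill any free decode slot immediately.
        while waiting and len(active) < max_batch:
            req = waiting.popleft()
            prefill(req)  # would happen on the prefill pool
            active.append(req)
        # One decode step: every active request produces one token.
        for req in active:
            req.generated += 1
        steps += 1
        finished.extend(
            r.request_id for r in active if r.generated >= r.max_new_tokens
        )
        active = [r for r in active if r.generated < r.max_new_tokens]
    return finished, steps


order, steps = serve(
    [Request("a", 100, 1), Request("b", 200, 3), Request("c", 50, 2)]
)
print(order, steps)
```

In this run, request "c" joins the batch as soon as "a" finishes, so all three requests complete in three decode steps. Running the first two as a fixed batch and then "c" on its own would take five.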

The system gets its name from the dynamo, which Huang noted was “the first instrument that started the last Industrial Revolution, the industrial revolution of energy,” positioning Dynamo as a foundational technology for the AI revolution.

By making Dynamo open source, Nvidia is strengthening its ecosystem and ensuring its hardware remains the preferred platform for AI workloads. Partners including Perplexity are already implementing Dynamo.

“We’re so happy that so many of our partners are working with us on it,” Huang said, specifically highlighting Perplexity as “one of my favorite partners” due to “the revolutionary work that they do.”

“The open-sourcing of Dynamo is a brilliant move,” commented Sarah Lee, a software engineer at a leading AI startup. “It allows the entire community to contribute to its development, leading to faster innovation and broader adoption.”

Physical AI and Robotics: The Next Frontier

In perhaps the most visually striking moment of the keynote, Huang unveiled a significant push into robotics and physical AI, culminating with the appearance of “Blue,” a Star Wars-inspired robot that walked onto the stage and interacted with Huang.

“By the end of this decade, the world is going to be at least 50 million workers short,” Huang explained, positioning robotics as a solution to global labor shortages and a massive market opportunity.

The company announced Groot N1, which it described as “the world’s first open, fully customizable foundation model for generalized humanoid reasoning and skills.” Making the model openly available is a significant move to accelerate development in the robotics field.

Alongside Groot N1, Nvidia announced a partnership with Google DeepMind and Disney Research to develop Newton, an open-source physics engine for robotics simulation.

“Using Omniverse to condition Cosmos, and Cosmos to generate an infinite number of environments, allows us to create data that is grounded, controlled by us and yet systematically infinite at the same time,” Huang explained, describing how Nvidia’s simulation technologies enable robot training at scale.

“Nvidia’s entry into robotics is a game-changer,” said Dr. Robert Anderson, a robotics expert at Carnegie Mellon University. “Their expertise in AI, simulation, and hardware, combined with their open-source approach, could revolutionize the field.”

The GM Partnership: Driving the Future of Autonomous Vehicles and Industrial AI

Rounding out Nvidia’s strategy of extending AI from data centers into the physical world, Huang announced a significant partnership with GM to “build their future self-driving car fleet.” The partnership is not limited to autonomous driving; it also covers AI for GM’s manufacturing and enterprise operations.

“GM has selected Nvidia to partner with them to build their future self-driving car fleet,” Huang announced. “The time for autonomous vehicles has arrived, and we’re looking forward to building with GM AI in all three areas: AI for manufacturing, so they can revolutionize the way they manufacture; AI for enterprise, so they can revolutionize the way they work, design cars, and simulate cars; and then also AI for in the car.”

Alongside the GM partnership, Nvidia announced Halos, described as “a comprehensive safety system” for autonomous vehicles. Huang emphasized that safety is a priority that “rarely gets any attention” but requires technology “from silicon to systems, the system software, the algorithms, the methodologies.”

“The partnership with GM is a major validation of Nvidia’s autonomous driving technology,” said John Thompson, an automotive industry analyst. “It positions Nvidia as a key player in the future of transportation.”

The Architect of AI’s Second Act: Nvidia’s Strategic Evolution Beyond Chips

As shown throughout the “Super Bowl of AI” keynote, GTC 2025 revealed Nvidia’s transformation from GPU manufacturer to end-to-end AI infrastructure company. Through the Blackwell-to-Rubin roadmap, Huang signaled Nvidia won’t surrender its computational dominance, while its pivot toward open-source software (like Dynamo) and models (like Groot N1) acknowledges hardware alone can’t secure its future.

Nvidia has cleverly reframed the rise of efficient models, arguing more efficient models will drive greater overall computation as AI reasoning expands—though investors remained skeptical, sending the stock lower despite the comprehensive roadmap.

What sets Nvidia apart is Huang’s vision beyond silicon. The robotics initiative isn’t just about selling chips; it’s about creating new computing paradigms that require massive computational resources. Similarly, the GM partnership positions Nvidia at the center of automotive AI transformation across manufacturing, design, and vehicles themselves.

Huang’s message was clear: Nvidia competes on vision, not just price. As computation extends from data centers into physical devices, Nvidia bets that controlling the full AI stack—from silicon to simulation—will define computing’s next frontier. In Huang’s world, the AI revolution is just beginning, and this time, it’s stepping out of the server room.
