OpenAI Drops o3, o4-mini: AI's New Frontier

OpenAI has raised the bar for artificial intelligence again with the release of what it calls its “smartest models yet.” The AI research company today announced o3 and o4-mini, two new large language models that it says deliver substantially stronger reasoning, alongside Codex CLI, an open-source tool designed to bring these models directly into developers’ workflows.

Breaking New Ground in AI Capabilities

The new models mark a significant shift in AI design. Rather than simply generating text or code from a prompt, o3 and o4-mini are built to use a suite of tools (web browsing, Python execution, file analysis, and image generation) as an integral part of their reasoning process. OpenAI says this approach lets them tackle complex problems more flexibly and effectively than earlier models.

“These aren’t just incremental updates,” says OpenAI in its announcement. “They’re designed from the ground up to reason more deeply across domains including coding, mathematics, science, and visual understanding.”

Two Models, Two Different Approaches

The flagship o3 model targets users who need top performance and reliability on the most demanding tasks. According to OpenAI, o3 makes 20% fewer major errors than its predecessor, o1, on difficult real-world tasks, with particular strengths in advanced programming and creative work.

Meanwhile, o4-mini is a smaller, more affordable alternative. Despite its compact size, OpenAI says it outperforms previous models on benchmarks, and it supports higher usage limits than o3. The split gives developers and organizations a clear choice based on their needs and resource constraints.

Both models share several key improvements, including:

- Enhanced creative thinking and instruction following

- Ability to draw on up-to-date information from the web

- Improved conversational memory and personalization

AI That Uses Tools to Think

What most distinguishes these models is how deeply tool use is woven into their reasoning. Unlike earlier systems that produced an answer directly from a prompt, o3 and o4-mini can decide partway through their reasoning to search, run code, or inspect a file, then use the result as they keep working on the problem.

During the launch presentation, OpenAI President Greg Brockman highlighted this capability, noting that “in one instance, o3 used 600 tool calls in a row trying to solve a really hard task.” That kind of sustained, goal-directed problem-solving marks a shift toward more autonomous and capable AI systems.
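OpenAI has not published how o3 orchestrates its tool calls internally. As a rough illustration of the general pattern described above, here is a minimal, hypothetical sketch of a tool-use loop: at each step a "model" either requests a tool or returns a final answer, and every tool result is fed back in before the next decision. The names `Step`, `run_agent`, `toy_policy`, and the `lookup` tool are all invented for illustration, the `policy` function merely stands in for a real model, and the 600-call cap simply borrows the number from Brockman's anecdote. None of this is OpenAI's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

# One transcript entry: (tool name, tool argument, tool output).
Observation = Tuple[str, str, str]


@dataclass
class Step:
    """A single decision by the hypothetical model: call a tool, or answer."""
    tool: Optional[str] = None
    argument: str = ""
    answer: Optional[str] = None


def run_agent(
    policy: Callable[[List[Observation]], Step],
    tools: Dict[str, Callable[[str], str]],
    max_tool_calls: int = 600,
) -> Tuple[Optional[str], List[Observation]]:
    """Alternate between model decisions and tool executions until an answer.

    Returns (answer, transcript); answer is None if the tool-call budget runs out.
    """
    transcript: List[Observation] = []
    while True:
        step = policy(transcript)  # the "model" sees every observation so far
        if step.answer is not None:
            return step.answer, transcript
        if len(transcript) >= max_tool_calls:
            return None, transcript  # budget exhausted without a final answer
        output = tools[step.tool](step.argument)  # execute the requested tool
        transcript.append((step.tool, step.argument, output))


# Toy usage: a stand-in "model" that looks something up once, then answers.
facts = {"capital of France": "Paris"}
tools = {"lookup": lambda query: facts.get(query, "unknown")}


def toy_policy(transcript: List[Observation]) -> Step:
    if not transcript:
        return Step(tool="lookup", argument="capital of France")
    return Step(answer=f"The answer is {transcript[-1][2]}.")


answer, log = run_agent(toy_policy, tools)
print(answer)    # The answer is Paris.
print(len(log))  # 1
```

The key design point is that the loop, not the model, executes tools and enforces the budget; a long run like the one Brockman described is this same cycle repeated hundreds of times before the model commits to an answer.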

Better at Code Than Its Creators

Perhaps most surprising was Brockman’s candid admission that these models are “better than I am at navigating through our OpenAI codebase.” Coming from a technical expert intimately familiar with OpenAI’s systems, this statement suggests these models have achieved remarkably sophisticated code comprehension.

If that holds up, the capability goes beyond simple code completion to understanding relationships between modules, tracing logic across multiple files, and building a mental model of a system’s architecture, tasks that often challenge even experienced developers.

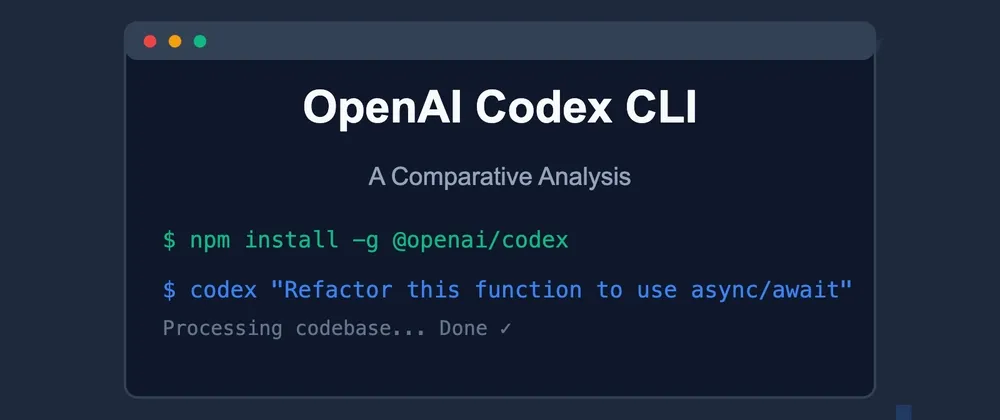

Codex CLI: Bringing AI Directly to Developers

Complementing these models, OpenAI has introduced Codex CLI, described as a “lightweight coding agent” that runs locally on developers’ machines. What sets it apart is both its open-source nature and its multimodal capabilities, which allow developers to incorporate visual information alongside text and code.

Because the agent runs on the developer’s own machine (the models themselves are still accessed through OpenAI’s API), it can work directly with actual project files rather than isolated snippets pasted into a chat window. By releasing it as open source, OpenAI is inviting the developer community to customize and extend the tool, which could speed its development.

Transforming the Development Workflow

Practical applications of these tools span the entire software development lifecycle, from initial code generation to debugging, refactoring, documentation, and learning. The multimodal approach is especially useful here: engineers can, for instance, share UI screenshots alongside code when troubleshooting visual bugs.

Challenges remain, including occasional subtle bugs in AI-generated code and unresolved questions about intellectual property. Still, the trajectory is clear: AI-powered coding assistants are becoming essential components of modern development environments.

Rather than replacing human programmers, these systems appear poised to amplify their capabilities, taking on routine tasks and helping manage complexity while freeing developers to focus on higher-level problem-solving and creativity. As these technologies mature, they could fundamentally change how software is created, potentially democratizing development and accelerating innovation across industries.
