OpenAI's Infrastructure Crisis: Ghibli Filters Break the Bank

In recent weeks, OpenAI has found itself at the center of the tech world’s attention as its GPT-4o model unleashed a viral wave of “Ghibli-style” AI-generated images. This success story, however, reveals much more than just a popular feature. Combined with CEO Sam Altman’s cryptic hints about forthcoming innovations, it exposes the profound challenges facing the AI pioneer. OpenAI now navigates a complex landscape of rapid innovation demands, infrastructure limitations, ethical controversies, and intensifying competition – offering us a fascinating glimpse into both the company’s strategy and the future direction of artificial intelligence.

The Ghibli Phenomenon: Showcasing GPT-4o’s Capabilities and Challenges

For weeks, a distinctive aesthetic trend dominated social media: users were captivated by ChatGPT’s ability to transform ordinary photos into Ghibli-style artwork. The phenomenon went beyond simple entertainment, becoming a powerful demonstration of OpenAI’s advancing image generation capabilities through its flagship GPT-4o model.

Known for its seamless integration of text and visual processing, GPT-4o significantly enhanced ChatGPT’s creative toolset. The accessibility of this feature – available even to users on the free plan – created a content creation explosion that reportedly pushed ChatGPT’s user base beyond 150 million. This milestone highlights the immense public interest in creative AI applications.

However, this viral success quickly revealed the challenges inherent in rapid AI deployment. The “Ghiblification” trend placed what Altman described as ‘insane’ pressure on OpenAI’s GPU infrastructure – the specialized computing hardware powering their AI systems. This situation vividly illustrated the scaling difficulties faced by advanced AI: the very features driving user engagement threatened to overwhelm the underlying systems, creating a persistent tension between innovation and operational stability.

Beyond these technical challenges, the Ghiblification trend ignited significant copyright debates. Artists and critics voiced concerns that OpenAI’s model likely learned the distinctive Ghibli aesthetic by training on copyrighted artwork without permission or compensation – a practice they argue fundamentally devalues human creativity.

OpenAI responded by characterizing these images as “inspired original fan creations” and pointed to policies against directly copying living artists’ styles. Yet these responses failed to resolve deeper ethical questions about using artistic styles derived from potentially copyrighted data – a central issue for generative AI’s future.

In response to growing concerns, Altman publicly acknowledged creators’ perspectives, notably during a TED event. He suggested exploring new compensation models for artists, potentially allowing them to opt in and earn revenue when users specifically request their style. Though details remained limited, this signaled OpenAI’s recognition that addressing copyright and fairness issues requires more than technical solutions, a shift from focusing purely on capabilities toward weighing broader societal impacts.

Decoding Altman’s Tease: Between Promise and Pragmatism

On Sunday, Sam Altman sparked widespread anticipation with a tantalizing social media post suggesting that “a lot of good stuff” was planned for users in the coming week. This cryptic message generated speculation about major releases or even a “new product line,” particularly following the excitement surrounding GPT-4o’s image generation capabilities.

Altman’s post on X (formerly Twitter) heightened the anticipation: “We’ve got a lot of good stuff for you this coming week! kicking it off tomorrow.” In his characteristic informal style, he added personal advice about baby products: “We bought a lot of silly baby things that we haven’t needed, but I recommend a Cradlewise crib and a lot more burp rags than you think you could possibly need.” While the parenting tips were unexpected, the primary message about upcoming announcements captured significant attention across tech communities.

The developments that followed, however, revealed a more nuanced reality than some anticipated. Rather than a revolutionary new product, a key announcement focused on security and access management. OpenAI introduced mandatory “Verified Organisation” checks for entities seeking access to its most advanced models and APIs. This move, requiring formal ID verification, demonstrated a strategic emphasis on safety, trust, and misuse prevention – reflecting responsible governance rather than purely technical advancement.

Users did receive meaningful enhancements during this period. Shortly before Altman’s tease, around April 10, 2025, OpenAI began rolling out “Enhanced ChatGPT Memory.” This upgrade allowed the AI to retain information from past conversations (with user permission), enabling more personalized and context-aware interactions – a valuable step toward more helpful AI assistants. The feature’s phased, opt-in deployment (initially for Pro users outside the UK/EEA) demonstrated tangible progress, even if it wasn’t the entirely new product line some had anticipated.

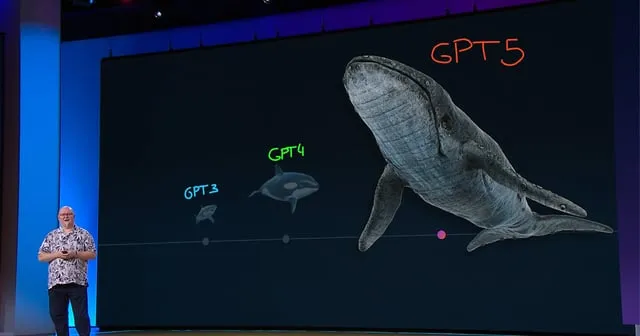

Managing expectations formed another part of the “good stuff.” Earlier in April, Altman had announced a delay for the highly anticipated GPT-5 model, pushing it back “a few months” from internal targets. Interim models, codenamed “o3” and “o4-mini,” would arrive instead. Altman cited integration challenges, testing requirements, and, critically, the need to strengthen infrastructure to handle the expected “unprecedented demand” for the new model. The decision highlighted the enormous resources required for frontier AI development.

OpenAI’s Urgent Quest for Infrastructure Talent

The pressure within OpenAI wasn’t limited to system slowdowns during the Ghibli trend. In mid-April 2025, Altman made a remarkably direct appeal for specialized engineering talent, signaling that recruiting infrastructure specialists had become a top organizational priority.

The 39-year-old CEO stated that OpenAI was actively seeking passionate engineers and system designers skilled at maximizing computer system performance. He specifically mentioned, “If you have a background in compiler design or programming language design, we might have something great for you” – highlighting the urgent need for experts who could optimize how AI models run efficiently on hardware.

“If you are interested in infrastructure and very large-scale computing systems, the scale of what’s happening at OpenAI right now is insane, and we have very hard/interesting challenges,” he added, emphasizing the unprecedented scope of their technical hurdles. His follow-up was even more direct, stating that OpenAI “could desperately use your help.”

This urgency directly reflects OpenAI’s growing pains and the massive computing demands of its advanced models. As experienced during the GPT-4o image generation phenomenon, user demand can quickly overwhelm capacity. Moreover, the GPT-5 delay was linked not just to development complexity but also to the critical need for robust infrastructure to handle the expected “unprecedented demand” upon release.

Underlying this computing power expansion are the fundamental “scaling laws” in AI development. Research, including OpenAI’s own studies, demonstrates that AI model performance improves predictably with increased computing power, larger datasets, and bigger models. Achieving cutting-edge results requires enormous computational resources – not just for training but also for serving these models to millions of users in real-time. OpenAI’s Head of Hardware, Richard Ho, reportedly confirmed this trend would continue.
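To make "improves predictably" concrete, here is a minimal sketch of a power-law scaling curve of the general shape discussed in scaling-law research, where modeled loss falls as a power of compute: loss = (C_C / compute) ** ALPHA. The constants `ALPHA` and `C_C` are illustrative placeholders, not figures from this article or from OpenAI, and the helper names `predicted_loss` and `compute_multiplier_for_loss_ratio` are hypothetical.

```python
# Illustrative power-law scaling curve: modeled loss falls as a power of compute.
# ALPHA and C_C are placeholder assumptions chosen only for illustration.
ALPHA = 0.05   # assumed exponent: how quickly loss falls as compute grows
C_C = 1.0e8    # assumed reference compute scale (arbitrary units)


def predicted_loss(compute: float) -> float:
    """Return the modeled loss for a compute budget (same units as C_C)."""
    if compute <= 0:
        raise ValueError("compute must be positive")
    return (C_C / compute) ** ALPHA


def compute_multiplier_for_loss_ratio(ratio: float) -> float:
    """Return the factor k such that predicted_loss(k * c) == ratio * predicted_loss(c)."""
    if not 0 < ratio <= 1:
        raise ValueError("ratio must be in (0, 1]")
    # From (C_C / (k * c)) ** ALPHA = ratio * (C_C / c) ** ALPHA, solve for k.
    return ratio ** (-1.0 / ALPHA)


if __name__ == "__main__":
    for c in (1e8, 1e10, 1e12):
        print(f"compute={c:.0e}  modeled loss={predicted_loss(c):.3f}")
    k = compute_multiplier_for_loss_ratio(0.9)
    print(f"compute multiplier for a 10% lower loss: {k:.1f}x")
```

Under these assumed constants, cutting the modeled loss by 10% takes roughly 8 times more compute. That asymmetry, small gains for large multiples of hardware, is one way to see why serving and training capacity becomes a bottleneck.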

Consequently, OpenAI’s recruitment efforts targeted highly specialized roles crucial for optimizing this complex technological ecosystem:

- Hardware/Software Co-Design Engineers: Experts who help design future AI chips and collaborate with hardware partners like NVIDIA to maximize performance for OpenAI’s specific requirements.

- Triton Compiler Engineers: Specialists developing software that translates AI code into efficient instructions for GPUs, essential for maximizing the potential of expensive hardware. These roles were advertised on platforms like Built In SF.

- Large-Scale Systems Engineers: Professionals experienced in building and managing vast computing infrastructure needed to train and run models across thousands of chips with high reliability.

The substantial compensation packages, such as a reported base salary of $360,000–$530,000 plus equity for a Hardware/Software Co-Design role, underscore how critical this expertise is to OpenAI’s mission. In the intensely competitive AI landscape, efficient computation at scale represents a fundamental strategic advantage, essential for launching advanced models like GPT-5 and progressing toward artificial general intelligence.

Public Reaction: Anticipation, Demands, and Competitive Comparisons

Altman’s social media posts about upcoming releases and recruitment needs generated significant public discussion. Much of the conversation centered on what might be coming next, especially after GPT-4o’s impressive image generation capabilities had been demonstrated.

The competitive AI landscape featured prominently in these discussions. One user directly asked: “Will it be better than Gemini 2.5?” – reflecting the constant comparisons being drawn between leading AI labs. Another questioned if OpenAI would be “showcasing something that will finally compete with Anthropic’s Claude 3 Opus,” highlighting perceptions that competitors currently hold certain advantages.

The announcement’s timing also generated speculation about potential collaborations. With the news coming shortly before Apple’s September 9 event, some users wondered if there might be partnership announcements between the companies. “Maybe they’ll announce GPT-5 and say it’s coming to iOS,” suggested one commenter.

Beyond technical capabilities, there was substantial discussion about OpenAI’s business model. Several users expressed concern about potential pricing changes. “I hope they don’t increase the Plus subscription price,” wrote one person, while another wondered if the announcement would introduce “new pricing tiers with different features.”

The community also debated whether OpenAI might launch specialized models for enterprise customers or industry-specific applications. “Maybe they’ll finally release something for coding that can actually compete with GitHub Copilot,” suggested one developer.

As artificial intelligence continues to advance rapidly, these announcements have captured the attention of both industry professionals and technology enthusiasts. The global response reflects both excitement about new capabilities and practical concerns about implementation and monetization approaches.

The upcoming event will be livestreamed, allowing a worldwide audience to witness what could be another significant milestone in AI development.
