Perplexity's Third-Party Defense Escalates AI Data Wars

AI search engine Perplexity is facing intense scrutiny following investigations by Wired and Forbes, which accuse the company of systematically scraping content from publishers who explicitly block AI crawlers using the Robots Exclusion Protocol (robots.txt). The evidence suggests Perplexity is bypassing this web standard, potentially using crawlers that do not identify themselves, to ingest data. In response to the allegations, Perplexity’s CEO, Aravind Srinivas, has said that while the company’s own crawler respects web protocols, the service also relies on data from third-party crawlers. This third-party crawler defense places the company at the center of the escalating AI data wars, highlighting a contentious strategy of deflecting accountability and raising critical questions about the future of data ethics and the open web.

Key Points

• Investigations by publications like Wired and Forbes present evidence that Perplexity AI ignores robots.txt directives, summarizing content from firewalled sites that block its known crawler.

• Perplexity’s CEO confirms that its internal crawler, PerplexityBot, respects web protocols, but the service also sources data from third-party providers who may not.

• The investigations reveal the use of undisclosed crawlers tied to Perplexity’s AWS IP addresses, a practice that makes it difficult for publishers to identify and block unwanted scraping.

• This situation intensifies the conflict between AI firms seeking data and publishers protecting their IP, testing the limits of the voluntary robots.txt standard and the “fair use” legal doctrine.

Digital Wolves in Bot Clothing

The core accusations against Perplexity are grounded in technical evidence suggesting its systems are accessing content in defiance of widely accepted web standards. An investigation by Wired, supported by developer Robb Knight, found Perplexity was able to summarize articles that were not only blocked via robots.txt but also firewalled. This indicates that Perplexity’s systems found a way to access content that was explicitly protected from its crawlers.

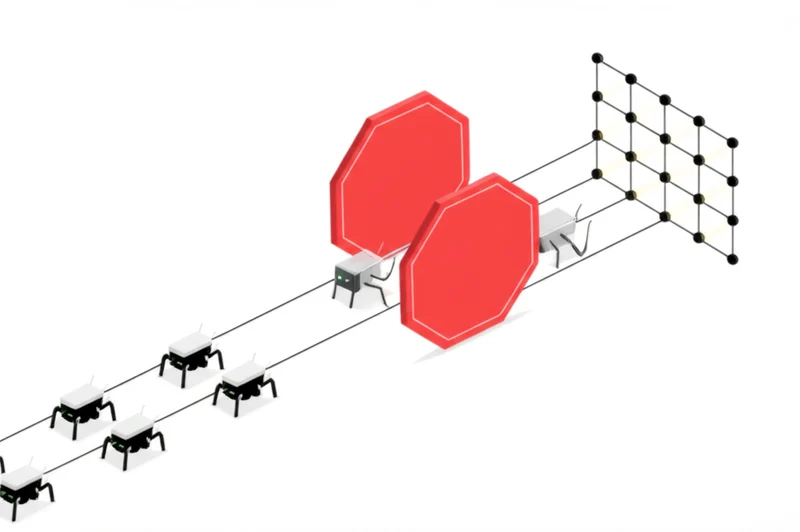

Furthering these claims, a separate Forbes investigation discovered that a machine originating from an Amazon Web Services (AWS) IP address tied to Perplexity probed its servers. Critically, this crawler did not use the company’s published user-agent, effectively operating in a clandestine manner. This use of undisclosed or misleading user-agents undermines the trust-based system that allows web administrators to manage bot traffic, making it nearly impossible for publishers to opt out of Perplexity’s data collection.
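To illustrate why an undeclared user-agent is hard to block, here is a minimal sketch of the kind of check a publisher might run on incoming requests. It is an assumption-laden illustration, not a description of how Wired or Forbes performed their analysis: the network range below is a reserved documentation range, and the function name `is_undeclared_crawler` is hypothetical.

```python
import ipaddress

# Hypothetical values for illustration only. 203.0.113.0/24 is a reserved
# documentation range, not an address associated with any real company.
WATCHED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]
DECLARED_TOKEN = "PerplexityBot"  # the crawler name a publisher would expect to see


def is_undeclared_crawler(remote_ip: str, user_agent: str) -> bool:
    """Return True when a request comes from a watched network
    but its User-Agent header does not carry the declared crawler token."""
    address = ipaddress.ip_address(remote_ip)
    from_watched_network = any(address in network for network in WATCHED_NETWORKS)
    declares_itself = DECLARED_TOKEN.lower() in user_agent.lower()
    return from_watched_network and not declares_itself


if __name__ == "__main__":
    # A browser-like header from a watched address is flagged.
    print(is_undeclared_crawler("203.0.113.7", "Mozilla/5.0"))
    # The same address announcing itself by name is not.
    print(is_undeclared_crawler("203.0.113.7", "Mozilla/5.0 (compatible; PerplexityBot/1.0)"))
```

The weakness is visible in the sketch itself: the check only works if the publisher already knows which networks to watch. A crawler that uses a generic user-agent from an unknown address passes straight through.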

Accountability Shell Game

In his public response to the scraping allegations, CEO Aravind Srinivas has attempted to reframe the issue by distinguishing between the company’s own actions and those of its partners. He maintains that Perplexity’s internal crawler is “respectful of robots.txt” but acknowledges that the service aggregates information from a mix of sources, including third-party web crawlers. This argument implies that if a third-party partner ignores a publisher’s directives, the responsibility lies with that partner, not Perplexity. However, critics argue this stance is a deliberate attempt to deflect accountability for the data that ultimately powers its product.

This controversy operates within the larger context of the AI data wars that the scraping allegations have inflamed. The robots.txt protocol has always been a voluntary “gentleman’s agreement,” not a legally binding rule. While ethical crawlers have historically honored it, the immense data appetite of LLMs is testing this norm. AI companies often lean on the “fair use” doctrine to justify training on public data, a legal argument central to high-profile lawsuits like the one filed by The New York Times against OpenAI and the ongoing case against GitHub Copilot. However, this defense is weakened when a company actively circumvents explicit opt-out signals.
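The voluntary nature of robots.txt is easier to see in code. The sketch below uses Python’s standard `urllib.robotparser` to evaluate a sample rule set. The rules, the URL, and the helper name `may_fetch` are illustrative assumptions, not any publisher’s actual configuration.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules: block one named crawler, allow everyone else.
PUBLISHER_RULES = """\
User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""


def may_fetch(robots_txt: str, user_agent: str, url: str) -> bool:
    """Evaluate robots.txt rules the way a compliant crawler would before fetching."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.com/article"
    print(may_fetch(PUBLISHER_RULES, "PerplexityBot", url))   # named crawler is disallowed
    print(may_fetch(PUBLISHER_RULES, "UnnamedFetcher", url))  # any other name is allowed
```

The check runs entirely on the crawler’s side and depends on the name the crawler chooses to report. Nothing on the publisher’s server enforces the answer, which is why a crawler that skips the check, or reports a different name, meets no technical barrier from robots.txt alone.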

Digital Handshakes Breaking Down

Industry experts view Perplexity’s actions and subsequent defense as a significant test for the web’s unwritten rules. Legal analysts suggest that knowingly bypassing a robots.txt directive could be interpreted as “bad faith” in a copyright dispute. As discussed in U.S. Copyright Office sessions on AI, such actions could undermine a company’s “fair use” argument and potentially lead to greater damages.

Perplexity’s approach stands in contrast to that of Google. Facing similar pressures, Google introduced Google-Extended, a specific user-agent that publishers can block to prevent their content from being used in generative AI models without affecting their traditional search ranking. This method, detailed in Google’s crawler documentation, provides a clear and respectful opt-out mechanism. The fear is that if more companies adopt Perplexity’s strategy, the robots.txt standard will become obsolete, forcing an arms race of aggressive blocking and clandestine scraping that could damage the open web. This has spurred development of more robust standards like the TDMRep protocol, designed to create machine-readable and legally explicit usage policies for AI training.
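As a rough sketch of how a separate opt-out token works, assume the token in question is Google-Extended and that a publisher wants to stay in traditional search. The rule set and the helper `blocked_tokens` below are illustrative assumptions. Google’s documentation, as far as we understand it, treats Google-Extended as a control token rather than a distinct HTTP user-agent string, so the parser here only demonstrates how the rules evaluate per token.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules: opt out of generative AI use, keep search crawling.
OPT_OUT_RULES = """\
User-agent: Google-Extended
Disallow: /

User-agent: Googlebot
Allow: /
"""


def blocked_tokens(robots_txt: str, tokens: list[str],
                   url: str = "https://example.com/story") -> list[str]:
    """Return the tokens from `tokens` that the rules disallow for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [token for token in tokens if not parser.can_fetch(token, url)]


if __name__ == "__main__":
    print(blocked_tokens(OPT_OUT_RULES, ["Google-Extended", "Googlebot"]))
```

Because the two tokens are evaluated independently, blocking one leaves the other untouched. That separation is what lets a publisher decline AI use without giving up search visibility.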

Trust’s Digital Crossroads

The controversy surrounding Perplexity’s data acquisition methods marks a critical juncture for the internet. The company’s “third-party crawler” defense pushes the boundaries of accountability, effectively creating a loophole to bypass established web etiquette. This strategy forces a confrontation between the relentless drive for AI innovation and the foundational principles of consent and control that have long governed the web. The industry is now faced with a choice: develop collaborative frameworks through licensing deals and new technical standards, or descend into a chaotic digital wild west. As the lines blur between direct and third-party data sourcing, who is ultimately responsible for upholding the web’s foundational protocols?
