Photoroom's Ablation Strategy: Efficient AI Model Design

In a move that emphasizes process over sheer scale, Photoroom has publicly detailed the training design for its PRX text-to-image model, presenting its findings as a transparent “experimental logbook.” This release provides a methodical analysis of their ablation-driven approach, offering the AI community a rare, in-depth look at how to systematically improve model performance. By documenting their successes and failures, Photoroom’s latest AI research delivers a practical blueprint for developing competitive models, focusing on efficiency and intelligent design rather than just massive computational resources. This open strategy represents a notable development in how smaller companies compete with big tech AI, shifting the focus from secret recipes to shared, reproducible science.

Key Points

- Photoroom’s research details a methodical process for improving text-to-image models through systematic ablation studies, starting with a clean baseline.

- The study validates Representation Alignment (REPA) as a key method for accelerating training by aligning model features with a pre-trained vision encoder.

- This open “logbook” approach provides a valuable guide on efficient AI model training techniques, particularly for teams with limited compute budgets.

- The work underscores a strategic shift toward building highly optimized, application-specific foundation models as a competitive alternative to general-purpose APIs.

Clean Slate Science: The Methodical Baseline

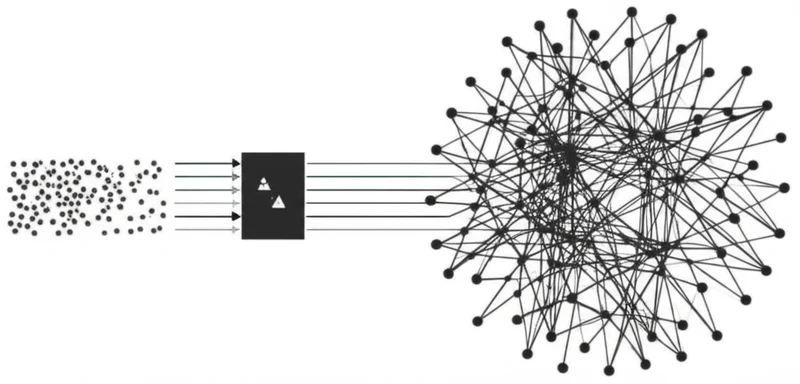

The effectiveness of any ablation study hinges on the quality of its starting point. Photoroom’s investigation begins by establishing a deliberately simple and clean baseline, ensuring that the impact of each new technique can be measured without confounding variables. This rigorous foundation is a core component of the Photoroom ablation training strategy.

The baseline model is the PRX-1.2B, a single-stream transformer trained with a pure Flow Matching objective, as introduced in a 2022 paper by Lipman et al. It operates on a 1 million image dataset at 256×256 resolution with a global batch size of 256. This controlled environment allows the team to confidently attribute performance changes directly to specific interventions.

To quantify these changes, the study employs a suite of benchmarks beyond the standard Fréchet Inception Distance (FID). By including CLIP Maximum Mean Discrepancy (CMMD) and DINOv2 MMD, the evaluation provides a more holistic view of image quality, capturing both fidelity and perceptual alignment. As the researchers note, this multi-metric scoreboard is non-negotiable for credible ablation studies for text-to-image models, as single metrics can often be misleading.

The Digital Apprenticeship: Learning from Vision Masters

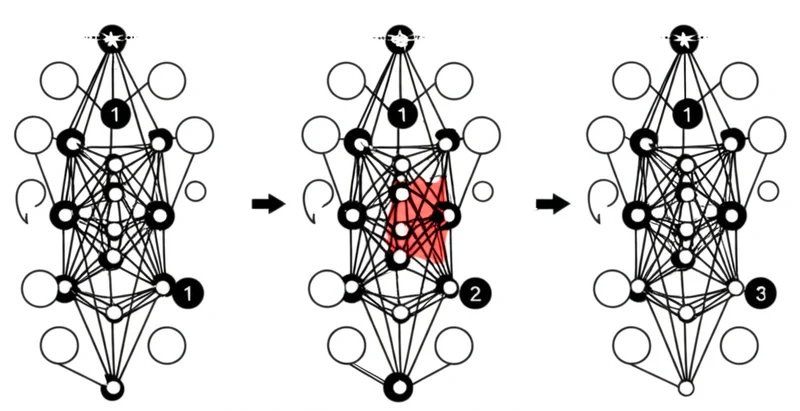

The primary technique analyzed in Photoroom’s logbook is Representation Alignment (REPA), a method designed to accelerate training by directly supervising the model’s intermediate features. REPA addresses a key bottleneck in early training where a model must learn a good internal representation of images while simultaneously learning the generation task.

The technique works by using a powerful, pre-trained vision encoder like DINOv2 as a “teacher.” This frozen teacher model processes the original image to extract high-quality patch embeddings, which serve as a ground-truth representation. The text-to-image model being trained—the “student”—is then guided by an auxiliary loss function. This loss encourages the student’s internal hidden states to mimic the structure and semantics of the teacher’s embeddings. By providing this strong representational prior, REPA helps the model learn meaningful features much earlier in the training process.

The result is significantly faster convergence, allowing the model to reach a target level of quality with less compute. This architectural decoupling, a key finding from their experiments, offloads some of the representational learning to a specialized component, demonstrating an efficient use of existing, powerful models to bootstrap new ones.

David’s Slingshot: Precision Over Power

Photoroom’s work carries strategic implications that extend beyond technical implementation details. By building and openly documenting its own foundation model, the company illustrates a viable path for smaller organizations to achieve state-of-the-art performance in specialized domains. This approach signals the rise of application-specific foundation models as a powerful alternative to relying on costly, general-purpose APIs from larger providers.

Owning the model provides deep technical expertise, cost efficiency at scale, and unparalleled control for customization. In an industry often characterized by closed-source models, Photoroom’s open and methodical research democratizes knowledge, lowering the barrier to entry for other teams. This fosters a more competitive and innovative ecosystem where the focus shifts toward training smarter, not just bigger.

The lessons shared are primarily about maximizing performance within a given compute budget. This focus on efficiency provides a more practical and sustainable path forward, challenging the “scale is all you need” narrative and providing a replicable strategy for building highly competitive models without requiring nation-state levels of investment.

Scientific Transparency: The New Competitive Edge

Photoroom’s detailed account of its training process is more than just a technical report; it’s a strategic asset for the broader AI community. By transparently sharing what works, what doesn’t, and why, the company provides a valuable guide for navigating the complex landscape of generative model development. This commitment to open and reproducible research helps build trust and empowers other developers to build upon their findings.

The focus on systematic, ablation-driven improvement demonstrates a mature and sustainable approach to AI development. As the industry continues to evolve, this transparent, efficiency-focused methodology establishes a new standard for building competitive advantage in AI model development, where reproducible science replaces proprietary black boxes as the path to innovation.

Read More From AI Buzz

Perplexity pplx-embed: SOTA Open-Source Models for RAG

Perplexity AI has released pplx-embed, a new suite of state-of-the-art multilingual embedding models, making a significant contribution to the open-source community and revealing a key aspect of its corporate strategy. This Perplexity pplx-embed open source release, built on the Qwen3 architecture and distributed under a permissive MIT License, provides developers with a powerful new tool […]

New AI Agent Benchmark: LangGraph vs CrewAI for Production

A comprehensive new benchmark analysis of leading AI agent frameworks has crystallized a fundamental challenge for developers: choosing between the rapid development speed ideal for prototyping and the high-consistency output required for production. The data-driven study by Lukasz Grochal evaluates prominent tools like LangGraph, CrewAI, and Microsoft’s new Agent Framework, revealing stark tradeoffs in performance, […]

UChicago AI Model Beats Optimization Bias with Randomness

In a direct challenge to the prevailing “AI as oracle” paradigm, where systems risk amplifying existing biases, researchers at the University of Chicago have demonstrated that intentionally incorporating randomness into scientific AI systems leads to more robust and accurate discoveries. A study published in the Proceedings of the National Academy of Sciences details a computational […]