Zhipu GLM-5 Escalates China AI Race: Scale vs Efficiency

Zhipu AI has unveiled its new GLM-5 model series. According to a recent Bloomberg report, the launch is designed to set a new performance benchmark in China’s competitive AI landscape and to preempt an anticipated release from rival DeepSeek. The announcement from the newly public company highlights a strategic split in the nation’s AI development, pitting Zhipu’s massive-scale approach directly against DeepSeek’s established focus on architectural efficiency. The GLM-5 launch escalates China’s high-stakes AI race, forcing the market to weigh the merits of raw power against cost-effective performance.

The launch is not merely an incremental upgrade but a calculated maneuver following Zhipu’s landmark IPO on January 8, 2026, which, according to one analysis, established it as the world’s first publicly traded pure-play foundation model developer. It also shows a clear divergence in strategy: Zhipu is leveraging its capital to push the performance envelope, while DeepSeek continues to build on its reputation for delivering powerful AI at a fraction of the computational cost.

Key Points

- Zhipu AI announced its GLM-5 model, featuring 745 billion parameters and a 512,000-token context window.

- The release directly challenges rival DeepSeek, framing the Zhipu-DeepSeek rivalry as a contest of scale versus efficiency.

- DeepSeek’s counter-strategy relies on its mHC architecture, which an IBM analysis describes as designed to make LLM training more efficient, stable, and scalable.

- This competition occurs as both firms navigate U.S. sanctions, driving innovation in hardware optimization and resource efficiency.

Goliath’s Gambit: The 745 Billion Parameter Play

Zhipu’s GLM-5 series represents a significant architectural leap, built on a “bigger is better” philosophy. The upcoming flagship model features a staggering 745 billion parameters, a clear investment in scaling to achieve more powerful and nuanced performance. This is complemented by a massive context window of up to 512,000 tokens, enabling the model to process and analyze entire books or extensive codebases in a single prompt.
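To put those two figures in perspective, here is a rough back-of-the-envelope sketch in Python. Only the parameter count and the context length come from the article. The bytes-per-parameter values are the standard sizes of common numeric formats, and the four-characters-per-token ratio is a loose rule of thumb for English text that is assumed here purely for illustration; it will differ by tokenizer and is not accurate for Chinese. The function names are hypothetical.

```python
PARAMS = 745_000_000_000   # parameter count reported in the article
CONTEXT_TOKENS = 512_000   # context window reported in the article


def weight_memory_gb(params: int, bytes_per_param: float) -> float:
    """Memory needed just to store the weights, in GB (10**9 bytes).

    Ignores activations, KV cache, and optimizer state.
    """
    return params * bytes_per_param / 1e9


def fits_in_context(num_chars: int,
                    chars_per_token: float = 4.0,   # ASSUMPTION: rough English heuristic
                    context_tokens: int = CONTEXT_TOKENS) -> bool:
    """Estimate whether a text of num_chars characters fits in the window."""
    estimated_tokens = num_chars / chars_per_token
    return estimated_tokens <= context_tokens


if __name__ == "__main__":
    for name, size in [("fp16/bf16", 2), ("int8", 1), ("int4", 0.5)]:
        print(f"{name:>9}: {weight_memory_gb(PARAMS, size):,.1f} GB of weights")
    # A 2-million-character document is about 500,000 tokens under the assumption above.
    print("2M-character document fits:", fits_in_context(2_000_000))
```

At 16-bit precision the weights alone come to roughly 1.5 TB, which is why a model of this size has to be sharded across many accelerators regardless of how the rest of the serving stack is designed.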

According to internal testing, GLM-5 surpasses leading Western models such as GPT-4 Turbo on benchmarks including MMLU and HumanEval. This builds on the performance of its predecessor, GLM-4.7, which already outperformed GPT-4o on the SWE-bench coding benchmark, according to the FinancialContent report “The GLM Architect and China’s AGI Race.” Zhipu also announced “GLM-5-Flash,” an enterprise-focused variant optimized for high-speed, low-cost inference in market segments where latency is a primary concern.

This strategy is supported by Zhipu’s unique GLM architecture, which, as detailed in an analysis of China’s AGI race, uses an autoregressive blank-filling objective and 2D positional embeddings—a design that has historically delivered strong performance, particularly for the Chinese language.
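The blank-filling objective and 2D positions can be illustrated with a small sketch that follows the scheme described in the original GLM paper. Whether GLM-5 uses exactly this layout is not something the article confirms, and the function name, token strings, and span format below are hypothetical choices made for illustration. Selected spans are replaced by a single `[MASK]` in the corrupted text (Part A), then appended one by one (Part B), each prefixed with a start token. The first position ID points at the location in Part A; the second counts positions inside a span and is 0 for Part A.

```python
from typing import List, Tuple

MASK, START = "[MASK]", "[S]"   # hypothetical token strings


def glm_blank_infilling(tokens: List[str],
                        spans: List[Tuple[int, int]]):
    """Build the input sequence and 2D position IDs for blank infilling.

    spans: non-empty, non-overlapping half-open (start, end) index ranges,
           listed in the order the spans should be generated in Part B.
    Returns (sequence, position_1, position_2), with 1-based positions.
    """
    for s, e in spans:
        if not 0 <= s < e <= len(tokens):
            raise ValueError(f"invalid span {(s, e)}")
    span_end = {s: e for s, e in spans}

    # Part A: the corrupted text, one [MASK] per removed span.
    part_a: List[str] = []
    mask_position = {}            # span start -> position of its [MASK]
    i = 0
    while i < len(tokens):
        if i in span_end:
            part_a.append(MASK)
            mask_position[i] = len(part_a)
            i = span_end[i]
        else:
            part_a.append(tokens[i])
            i += 1

    sequence = list(part_a)
    position_1 = list(range(1, len(part_a) + 1))
    position_2 = [0] * len(part_a)

    # Part B: each span, prefixed by [S], shares the position of its [MASK]
    # and gets an intra-span counter starting at 1.
    for s, e in spans:
        span_input = [START] + tokens[s:e]
        sequence += span_input
        position_1 += [mask_position[s]] * len(span_input)
        position_2 += list(range(1, len(span_input) + 1))
    return sequence, position_1, position_2


if __name__ == "__main__":
    toks = ["x1", "x2", "x3", "x4", "x5", "x6"]
    # Mask [x5, x6] and [x3]; generate the later span first.
    seq, p1, p2 = glm_blank_infilling(toks, [(4, 6), (2, 3)])
    for row in zip(seq, p1, p2):
        print(row)
```

Because every token in a span reuses the position of its `[MASK]`, the model is not told how long the missing span is, which is what lets a single mask stand in for a blank of arbitrary length.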

David’s Slingshot: Efficiency as Competitive Edge

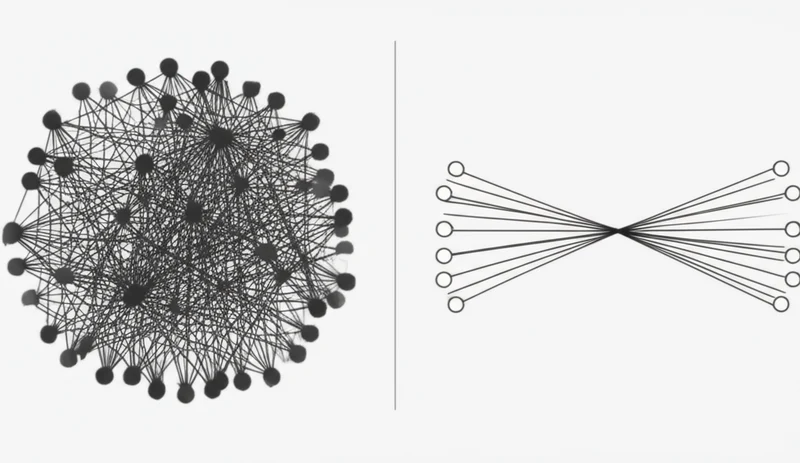

In stark contrast to Zhipu’s push for scale, DeepSeek has cultivated a reputation for its efficiency-first approach. While the company remains quiet on its next commercial model, its strategy centers on delivering competitive performance at a fraction of the computational cost, a powerful value proposition for enterprise adoption. Industry analysts predict DeepSeek’s response to GLM-5 will be a model delivering “95% of the performance at 50% of the cost,” as reported in the article “China’s Zhipu Unveils New AI Model, Jolting Race With DeepSeek.”
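The analysts’ “95% at 50%” figure is easier to weigh as a ratio. The short Python sketch below treats both numbers as relative to a baseline model scored at 1.0 for performance and 1.0 for cost. That normalization and the function name are assumptions for illustration, not a metric defined in the reporting.

```python
def performance_per_cost(relative_performance: float, relative_cost: float) -> float:
    """Performance delivered per unit of cost, both relative to a baseline."""
    if relative_cost <= 0:
        raise ValueError("relative_cost must be positive")
    return relative_performance / relative_cost


baseline = performance_per_cost(1.0, 1.0)       # the larger, costlier model
challenger = performance_per_cost(0.95, 0.50)   # the predicted "95% at 50%" model

# Prints: Performance per unit cost vs. baseline: 1.90x
print(f"Performance per unit cost vs. baseline: {challenger / baseline:.2f}x")
```

On these numbers the cheaper model gives up 5% of performance but delivers almost twice as much performance per unit of spend, which is the trade-off enterprise buyers would be asked to evaluate.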

The foundation of this strategy is a novel architecture called Manifold-Constrained Hyper-Connections (mHC). According to analysis from IBM, mHC is designed to make LLM training more efficient, stable, and scalable. Kaoutar El Maghraoui, a Principal Research Scientist at IBM, notes this is about “scaling AI more intelligently rather than just making it bigger.” This focus on smart design rather than brute force has already allowed DeepSeek’s existing models to gain significant traction: they have reportedly “disrupted the AI market” and drawn over 10 million downloads from platforms like Hugging Face.

This track record of disrupting the market with affordable, high-performing models lends credibility to DeepSeek’s efficiency-first approach, setting the stage for a compelling showdown between scale and efficiency in China’s AI race.

Silicon Showdown: East Asia’s AI Battleground

The intensifying rivalry between Zhipu and DeepSeek is indicative of a rapidly maturing Chinese AI ecosystem where agile, well-funded startups are increasingly driving innovation. Industry observers note that these firms are closing the performance gap with Western counterparts like OpenAI and Google at an accelerating pace. Zhipu’s recent IPO and its origins as a spin-out from Tsinghua University’s Knowledge Engineering Group illustrate that trajectory.

This competition is also shaped by significant geopolitical headwinds. Both companies are innovating under the pressure of U.S. sanctions on advanced AI chips, a reality underscored by Zhipu’s addition to the U.S. Entity List in January 2025.

These constraints have forced both companies to develop “hardware sovereignty” strategies and to optimize their models for the resources available to them. The ultimate goal for both is to build a robust domestic ecosystem of applications and enterprise partners, with Zhipu CEO Zhang Peng envisioning general base models as a “new type of infrastructure” for key industries.

Scale vs. Efficiency: The Fork in China’s AI Road

Zhipu’s GLM-5 launch is the latest salvo in a dynamic and fast-paced competition, successfully seizing the spotlight with impressive claims of scale and power. However, DeepSeek’s anticipated response, grounded in a proven doctrine of architectural efficiency and market-disrupting affordability, ensures the race for dominance is far from over. This intense domestic rivalry is producing world-class models while simultaneously forcing a diversification of strategies that will shape the global AI landscape. As these two philosophies unfold, will market leadership be defined by raw power or by accessible, cost-effective performance?
