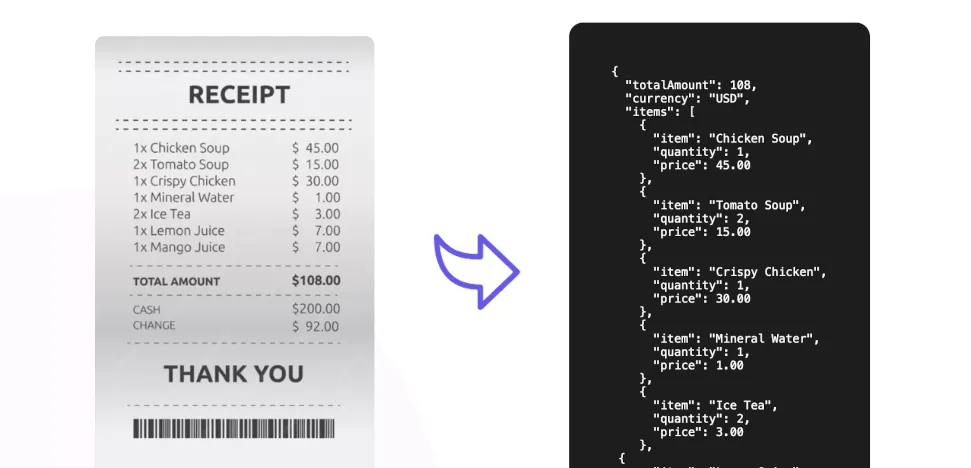

ChatGPT's new image tool produces eerily convincing receipts

Could you spot a fake receipt if it looked almost perfect? With powerful AI models like OpenAI’s GPT-4o, creating highly realistic fake documents, including the ChatGPT-generated receipts now circulating, has become surprisingly easy. This new ability creates serious fraud risks for people and companies, forcing us to rethink how we check whether something is real in a world increasingly shaped by AI.

The AI Breakthrough: Making Text Look Real in Images

AI image generation has come a long way, moving from blurry shapes to images with remarkable photorealism. For years, though, AI struggled with one big problem: putting clear, readable text into these images. Older AI systems, like GANs and early diffusion models, were great at pictures but treated text like any other shape, often producing messy squiggles or gibberish, a common complaint among users discussing why accurate text rendering was such a challenge. This made it hard for AI to create useful images that need text, like signs, labels, or important documents.

Things started changing in late 2023 and picked up speed in 2024. Major tech companies focused on fixing the text-in-image issue, marking significant milestones. As detailed in summaries of AI image generation progress in 2023, Google’s Imagen 2 added specific text support, Midjourney v6 introduced a text-drawing feature, and OpenAI’s DALL-E 3 got better at understanding prompts, leading to improved text. These were vital steps, connecting AI’s visual skills with the need for accurate text.

This month, everything changed again when OpenAI unveiled a new image generator in ChatGPT, built into its GPT-4o model. The improvement in text rendering wasn’t just a small step; it was a major leap forward, largely because of the model’s “native multimodal architecture.” Unlike older systems that might have combined separate image and text tools, GPT-4o handles text, images, and audio all within one integrated system. This unified design helps it understand how text should look visually: the right font, size, and position, and how it fits with the image’s background, like paper texture or lighting. This built-in understanding makes 4o much better at putting text into images, opening the door to convincing fakes.

Fake Receipts Go Viral: The Alarming Reality

What was once a theoretical risk quickly became real. People are already using GPT-4o to generate fake receipts, adding another tool to the already-extensive toolkit of AI deepfakes available to scammers. The consequences are immediate and worrying for anyone who relies on visual checks for tasks like approving expense reports or handling returns, potentially undermining established verification processes.

Venture capitalist and active social media user Deedy Das drew attention to the problem when he posted on X a picture of a fake receipt for a real San Francisco steakhouse, claiming it was made with 4o. The image wasn’t just text; it showed realistic printing, item details, tax, and totals, looking real enough to cause widespread concern and spark debate, as reported by outlets like the Hindustan Times.

What makes these AI fakes so effective is their detail. GPT-4o can add small touches that mimic reality – slight wrinkles, folds, realistic paper textures, and even fitting backgrounds suggesting where the photo was taken (like a table). Others quickly achieved similar results, including user Michael Gofman, who tried adding food or drink stains to make a generated receipt look even more real. This addressed comments that the text in early examples looked too clean or seemed to “float” above the paper.

The most convincing example TechCrunch found didn’t come from X, but from France. A LinkedIn user posted a crumpled AI-generated receipt for a French restaurant chain, showing the AI’s ability to realistically simulate physical wear and tear.

TechCrunch also tested 4o and successfully generated a fake receipt for an Applebee’s in San Francisco. This ease of creation is exactly what has experts worried. Systems that depend on visual checks for expense reimbursements, product returns, or warranty claims are now much more vulnerable. An employee might create a fake receipt for a meal they never had, or a customer could fake proof of purchase for a return, with a better chance of getting away with it than ever before.

However, the technology isn’t flawless, at least not yet. TechCrunch’s generated receipt had clear signs it was fake. First, the total used a comma instead of a period for the decimal point, which is unusual in the US. Second, the math was wrong: the items, subtotal, and total did not add up. Large language models (LLMs) like GPT-4o are known to still struggle with basic math, a weakness that can sometimes reveal AI generation. Users have also pointed out other potential flaws, like text looking too perfect or not matching the paper’s texture or folds.
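The two tell-tale signs described above, a decimal comma in a US total and sums that do not add up, are simple to check once the amounts have been read off a receipt. The following is a minimal sketch, not a fraud-detection tool: the function names `parse_us_amount` and `check_receipt` are hypothetical, the amounts are assumed to have been transcribed already (by hand or OCR), and the receipt is assumed to follow the plain layout items, subtotal, tax, total.

```python
from decimal import Decimal, InvalidOperation


def parse_us_amount(text):
    """Parse a US-style amount such as '$1,234.56'; return None if malformed."""
    cleaned = text.strip().lstrip("$")
    # In US formatting a comma only groups thousands, so a comma followed by
    # exactly two trailing digits (e.g. '23,34') looks like a decimal comma.
    if "," in cleaned and "." not in cleaned:
        if len(cleaned.rsplit(",", 1)[1]) == 2:
            return None
    try:
        return Decimal(cleaned.replace(",", ""))
    except InvalidOperation:
        return None


def check_receipt(items, subtotal, tax, total):
    """Return a list of red flags found on the receipt (empty if none)."""
    flags = []
    amounts = {
        "subtotal": parse_us_amount(subtotal),
        "tax": parse_us_amount(tax),
        "total": parse_us_amount(total),
    }
    item_values = [parse_us_amount(price) for price in items]

    for name, value in amounts.items():
        if value is None:
            flags.append(f"{name} is not a valid US-style amount")
    if any(value is None for value in item_values):
        flags.append("an item price is not a valid US-style amount")
    if flags:
        return flags  # arithmetic is meaningless if parsing failed

    if sum(item_values) != amounts["subtotal"]:
        flags.append("items do not add up to subtotal")
    if amounts["subtotal"] + amounts["tax"] != amounts["total"]:
        flags.append("subtotal plus tax does not equal total")
    return flags


if __name__ == "__main__":
    # Made-up numbers for illustration only.
    print(check_receipt(["12.99", "8.50"], "21.49", "1.85", "23,34"))
    print(check_receipt(["12.99", "8.50"], "21.49", "1.85", "25.00"))
```

A clean result here proves nothing on its own: as the next paragraph notes, a scammer can correct the numbers, so a check like this only catches careless fakes.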

But fixing these mistakes wouldn’t be difficult for a scammer. They could quickly correct the numbers using photo editing software or perhaps by giving the AI more specific instructions. Refining prompts given to the AI – known as iterative prompting – can often fix initial errors or add missing details. For someone determined to create a convincing fake, these current flaws are likely just minor obstacles.

Making fake receipt generation this simple opens the door to widespread fraud. Criminals could use the technology to get reimbursed for expenses that never happened, potentially causing a wave of small, hard-to-catch financial losses for businesses.

Beyond Receipts: AI Fuels a Broader Wave of Document Fraud

While incredibly realistic receipts are making headlines now, experts in cybersecurity and fraud prevention warn that they are just one example of a much bigger issue: generative AI being used for harmful purposes. The main problem is AI’s power to create convincing, often personalized, fakes quickly and easily. This challenges older verification methods that rely on spotting visual errors.

A major factor making the threat worse is that these powerful AI tools are becoming widely available. Advanced AI models aren’t just in research labs anymore; the public can increasingly access them. This lowers the barrier for entry, allowing people with little technical skill to create fake invoices, IDs, financial statements, and other documents that look incredibly real. Experts worry this accessibility will lead to a sharp rise in fraud attempts across many industries, potentially overwhelming current detection systems.

The threat goes far beyond receipts and is changing fast. AI is already being used in several complex scams:

- Creating fake invoices that look exactly like real ones, charging for goods or services that were never delivered, a trend fueling invoice fraud concerns.

- Improving Business Email Compromise (BEC) attacks by writing more convincing emails or slightly changing real invoices intercepted during communication. Specialized AI tools like the “Business Invoice Swapper,” reportedly used by cybercrime groups like “GXC Team,” help automate this process.

- Crafting highly personalized phishing messages using specific details about the target, which makes them much more effective and calls for new methods of detecting and preventing AI-based phishing.

- Using deepfake audio and video for sophisticated social engineering. In one alarming case, a finance worker in Hong Kong was tricked into transferring $25 million after a video call with people who turned out to be deepfake versions of the company’s senior executives.

These aren’t just possibilities; they are happening now. Real cases and the appearance of specialized criminal tools show that AI-driven document fraud is a real and growing danger. Even academic research isn’t safe, with examples of AI-generated text showing up in research papers, sometimes getting past reviewers. This highlights AI’s ability to create plausible, yet potentially false, content and underscores the urgent need for strong defenses.

OpenAI’s Stance: Balancing ‘Creative Freedom’ with Safety

OpenAI spokesperson Taya Christianson informed TechCrunch that all images created by ChatGPT include metadata showing their origin, serving as a basic source indicator. Christianson also stated that OpenAI “takes action” against users who break its usage rules and is “always learning” from real-world usage and feedback to enhance its safety systems.
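To illustrate what checking for such origin metadata could look like, here is a minimal sketch that lists the chunks of a PNG file and looks for a provenance chunk. Several things here are assumptions not stated in the article: that the metadata follows the C2PA standard, that the image is a PNG, and that the manifest sits in a chunk of type `caBX`. The function names are hypothetical, and the sketch does not validate any signature; it only reports whether such a chunk is present.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_chunk_types(data):
    """Return the chunk type names of a PNG byte string, in file order."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    types = []
    offset = len(PNG_SIGNATURE)
    # Each chunk is: 4-byte big-endian length, 4-byte type, data, 4-byte CRC.
    while offset + 8 <= len(data):
        length, chunk_type = struct.unpack(">I4s", data[offset:offset + 8])
        types.append(chunk_type.decode("ascii", errors="replace"))
        offset += 12 + length
    return types


def has_provenance_chunk(data, marker="caBX"):
    """True if a chunk of the given type exists (assumed C2PA marker)."""
    return marker in png_chunk_types(data)


if __name__ == "__main__":
    import sys

    with open(sys.argv[1], "rb") as handle:
        image_bytes = handle.read()
    print(png_chunk_types(image_bytes))
    print("provenance chunk present:", has_provenance_chunk(image_bytes))
```

Absence of the chunk says little, since metadata is easily lost when an image is screenshotted or re-saved, which is consistent with the article describing it as only a basic source indicator.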

TechCrunch followed up, asking why generating fake receipts is permitted at all, especially considering that OpenAI’s usage policies explicitly prohibit facilitating fraud.

Christianson explained that OpenAI’s “goal is to give users as much creative freedom as possible.” She suggested fake AI receipts might have legitimate uses, such as “teaching people about financial literacy,” creating original artwork, or making product advertisements. This stance, reflected in OpenAI’s own discussion of GPT-4o’s image generation capabilities
