Frontier LLMs Fail ARC AGI 3: Multi-Step Execution Flaw

The latest wave of frontier large language models, including Alibaba’s Qwen 3 and Moonshot AI’s Kimi K2, has arrived with claims of state-of-the-art performance on standard industry evaluations. However, these systems are hitting a significant roadblock on the newly released ARC AGI 3 benchmark, a test designed to measure true abstract reasoning. As the AI community tracks progress toward human-level intelligence, this new benchmark is proving to be a critical differentiator. Recent analysis suggests that the widespread struggles stem not from a fundamental inability to reason, but from a failure to maintain execution reliability over multiple steps.

This performance gap highlights a core architectural challenge for current LLMs, exposing the difference between single-turn accuracy and the sustained logic required for genuine problem-solving. Understanding why LLMs are failing ARC AGI 3 provides a clearer picture of the next hurdles in the pursuit of artificial general intelligence.

Key Points

- The ARC AGI 3 benchmark presents novel reasoning puzzles that cause even top-tier frontier LLMs to struggle significantly.

- Research indicates failures stem from poor multi-step execution reliability, where small errors cascade, rather than a lack of reasoning ability.

- A “self-conditioning” effect is observed where a model’s own prior errors increase the likelihood of subsequent mistakes in a sequence.

- This execution gap represents a major commercial barrier, as massive investments in AI are predicated on automating complex, multi-step tasks.

Abstract Puzzles, Concrete Failures

The Abstraction and Reasoning Corpus (ARC) and its successors represent a necessary evolution in AI evaluation. As traditional metrics become less discerning, the community requires novel tests that probe deeper cognitive abilities. The ARC AGI 3 benchmark is designed to present challenges that are simple for humans but difficult for systems reliant on pattern matching. As one analysis notes, the goal is to move beyond testing known facts and to challenge AI with small puzzles that require computational creativity and abstract reasoning. The same analysis describes benchmarks themselves as an elusive measure of AGI.

Unlike benchmarks that test accumulated knowledge, ARC assesses an AI’s ability to solve problems it has never seen before. This focus on novel problem-solving is why even the most advanced models “struggle to complete any tasks on the benchmark,” according to one recent analysis (Discovering Top 3 Frontier LLMs Through Benchmarking — Arc AGI 3). The reliance on self-reported scores on potentially saturated benchmarks, as seen with claims around models like Qwen 3, further underscores the need for independent evaluations. For instance, while Alibaba has promoted Qwen’s performance, it has yet to publish a full technical report for independent substantiation, making objective tests like ARC all the more crucial.

When Logic Chains Break

A compelling technical explanation for why frontier LLMs fail ARC AGI 3 lies not in a reasoning deficit but in an execution failure. A recent study on long-horizon tasks identifies unreliable multi-step execution as the primary culprit. An LLM might correctly understand the goal of an ARC puzzle yet make a minor error midway through its internal process, derailing the entire solution.

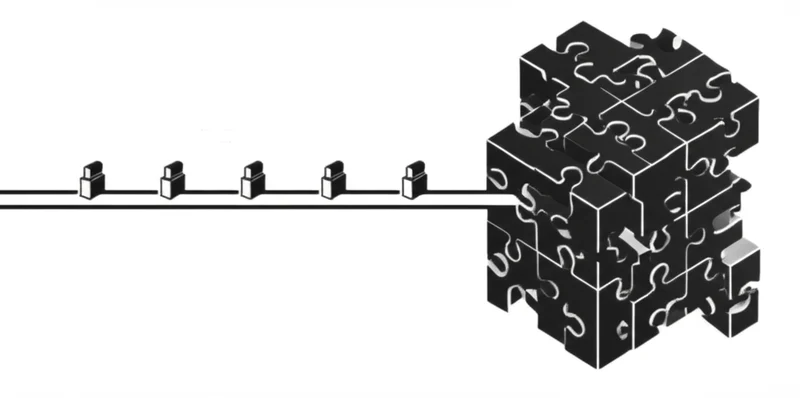

This research highlights two critical phenomena. First is the effect of compounding errors; even a tiny per-step error rate makes successfully completing a long chain of reasoning statistically improbable. Second, researchers identified a “self-conditioning” effect, where models become more likely to make subsequent mistakes when their own prior errors are present in the context. In a multi-step ARC puzzle, a single misstep can poison the context, leading to a cascade of further errors.
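The arithmetic behind both effects can be illustrated with a small sketch. It is not the study's methodology: the function names, the assumption that steps fail independently, and the linear "penalty" that raises the error rate after each prior mistake are all hypothetical choices made for illustration.

```python
def chain_success_probability(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain succeeds.

    Assumes each step succeeds independently with the same accuracy,
    which is a simplification made for this sketch.
    """
    return per_step_accuracy ** steps


def expected_errors(base_error: float, penalty: float, steps: int) -> float:
    """Expected number of errors in a toy self-conditioning model.

    Hypothetical model: after k prior errors, the chance of erring on the
    next step is min(1, base_error + penalty * k). With penalty = 0 this
    reduces to independent steps.
    """
    # dist[k] = probability of having made exactly k errors so far
    dist = [1.0] + [0.0] * steps
    for _ in range(steps):
        new_dist = [0.0] * (steps + 1)
        for k, prob in enumerate(dist):
            if prob == 0.0:
                continue
            error_rate = min(1.0, base_error + penalty * k)
            new_dist[k] += prob * (1.0 - error_rate)   # step succeeds
            if k + 1 <= steps:
                new_dist[k + 1] += prob * error_rate   # step fails
        dist = new_dist
    return sum(k * prob for k, prob in enumerate(dist))


if __name__ == "__main__":
    # A 99%-accurate step repeated 100 times succeeds end to end
    # only about 36.6% of the time.
    print(round(chain_success_probability(0.99, 100), 3))

    # Same base error rate, with and without the self-conditioning penalty.
    print(expected_errors(0.01, 0.0, 100))
    print(expected_errors(0.01, 0.05, 100))
```

In this toy model, the chance of a flawless run is unchanged by the penalty, since the penalty only applies once an error has occurred. What changes is how quickly errors pile up after the first mistake, which mirrors the cascade described above.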

This issue is not easily solved by simply scaling up a model’s size, pointing to a more fundamental architectural limitation.

Trillion-Dollar Bets on Flawed Foundations

The struggles on the ARC AGI 3 benchmark have profound implications beyond academic circles, touching on the commercial viability of AI. The industry is pouring money into infrastructure: Oracle, for example, is racing to build out data centers on the strength of large contracts, including a reported $300 billion, five-year computing deal with OpenAI. These bets are predicated on AI’s ability to automate complex, real-world projects.

However, the ARC AGI 3 results reveal a critical reliability gap. The inability to flawlessly execute long sequences of logical steps is the main barrier to deploying agents that can, as the aforementioned study puts it, “tackle entire projects, not just isolated questions.” An AI that cannot construct a coherent, error-free plan has limited economic value for high-stakes autonomous tasks. Success on benchmarks like ARC AGI 3 is therefore a leading indicator that models are developing the reliability needed to justify these infrastructure investments.

Beyond Pattern Recognition: The Intelligence Crucible

The ARC AGI 3 benchmark serves as more than just a final exam; it is a vital diagnostic tool that helps explain why frontier LLMs fail new AGI tests. It pinpoints the specific weaknesses in today’s frontier models: fragile execution, error propagation, and a lack of adaptive problem-solving. While models like Qwen 3 continue to post impressive scores on standard tests, as announced at its release, their performance on ARC reveals a more nuanced reality. This discrepancy reinforces a growing consensus that traditional benchmarking is facing a crisis, driven by issues like data contamination and an overemphasis on knowledge recall over true problem-solving.

The path forward requires a shift in focus from simply scaling parameter counts to architecting models with robust execution capabilities. The models that eventually conquer this benchmark will have demonstrated a form of intelligence far beyond today’s text generators, signaling a true leap in the quest for AGI. As the community continues to grapple with what to measure, benchmarks like ARC AGI 3 help set the direction in what has become an elusive search for a true measure of AGI. What new architectures will emerge to solve this fundamental problem of reliability?
