Geometric Defense for AI: TDA Achieves 98% Attack Detection

A recent multimodal AI security breakthrough demonstrates a powerful new defense against sophisticated threats, using a mathematical approach to analyze the fundamental ‘shape’ of data. Researchers have shown that Topological Data Analysis (TDA) can identify malicious inputs designed to fool multimodal AI systems with over 98% accuracy. This development introduces a geometrically-grounded security layer that detects subtle data distortions that often evade traditional methods. Systems like the T-GUARD detector, detailed in recent studies, validate the effectiveness of TDA on advanced vision-language models like CLIP and BLIP. By focusing on the structural integrity of a model’s internal data representations, this technique represents a notable advancement in the ongoing effort to secure AI from adversarial attacks, a critical step for building trust in systems being deployed in high-stakes environments.

Key Points

• Research demonstrates that Topological Data Analysis (TDA) detectors achieve over 98% accuracy in identifying strong adversarial attacks on advanced multimodal AI models.

• The method functions by analyzing the geometric ‘shape’ and structure of data within an AI model’s hidden layers, using a technique called persistent homology to spot anomalies.

• Unlike methods trained on specific attack patterns, TDA shows the ability to detect novel ‘zero-day’ attacks by identifying general structural deviations in data.

• This development directly addresses critical vulnerabilities in widely adopted multimodal systems, which are increasingly targeted by complex, cross-modal attacks.

Geometry as Guardian: The Mathematics Behind Attack Detection

Adversarial attacks exploit the complex, high-dimensional decision boundaries learned by AI models. An attacker introduces tiny, often human-imperceptible perturbations to an input—like a few pixels in an image or a word in a caption—to push it across a boundary and cause a misclassification. In a multimodal context, this threat is amplified, as subtle changes across both image and text can create a potent attack that is difficult to detect, a class of threat detailed in NIST’s taxonomy of adversarial machine learning.

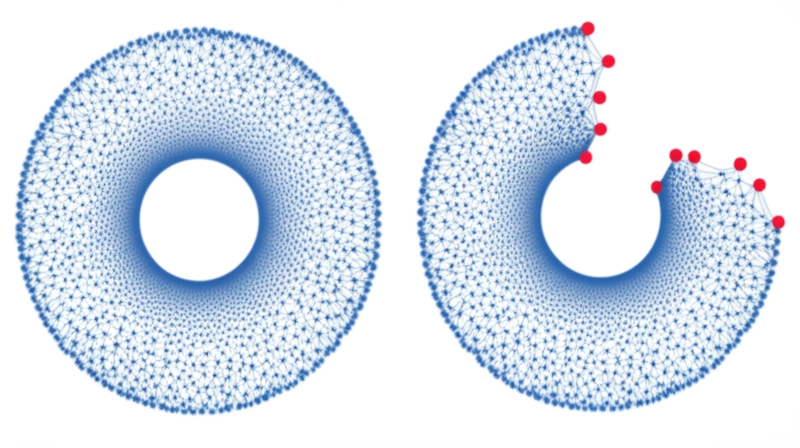

The TDA approach provides a new defensive paradigm for AI security by analyzing the shape of data. Instead of analyzing input statistics, it examines the fundamental structure of data as it’s processed inside the model. The process involves four primary steps: an input is fed to the model, activation data is extracted from its hidden layers, a “topological fingerprint” is generated using persistent homology, and this fingerprint is compared against a baseline of known clean inputs. A significant deviation flags a potential attack. A 2023 study confirmed that adversarial examples create statistically significant changes—or “topological artifacts”—in the persistent homology of a model’s latent space, providing a clear signal for detection.

Beyond Signatures: TDA’s Unique Defense Position

TDA is not a standalone solution but a significant addition to the AI security toolkit, offering distinct advantages over existing techniques. Its primary strength is the documented ability to detect novel attacks it has not been specifically trained on. Because it looks for general structural anomalies rather than signatures of known attacks like FGSM or PGD, it provides a more generalized defense layer, a key feature of geometric defenses highlighted in a 2023 survey on adversarial robustness.

This contrasts with adversarial training, which improves model robustness but is effective only against attack types seen during its training. It also differs from gradient masking, a technique that has been shown to provide a false sense of security against sophisticated attacks. TDA complements these methods by providing a model-agnostic detection mechanism. However, the approach has documented limitations. Calculating persistent homology is computationally intensive, presenting an engineering challenge for real-time applications, though researchers are exploring more scalable TDA algorithms to address this. Furthermore, researchers acknowledge the theoretical possibility of adaptive attacks designed to fool a model while preserving its data topology, an active area for further security research.

Cross-Modal Vulnerabilities: The New Battleground

This technical advancement is particularly timely. The rapid integration of powerful multimodal models like OpenAI’s GPT-4o and Google’s Gemini into business and consumer applications has massively expanded the attack surface for malicious actors. From autonomous driving to medical diagnostics, the reliability of these systems is paramount, making robust defenses a top priority. This is especially true as the research frontier pushes forward, with studies now applying TDA to the fused data representations where different modalities are combined, a critical point for detecting sophisticated attacks.

Industry analysts have identified AI Trust, Risk and Security Management (AI TRiSM) as a top strategic trend, according to Gartner. This reflects a growing enterprise need for verifiable security solutions. A Deloitte survey found that a significant number of executives harbor concerns about AI risks, including a lack of transparency, which is closely linked to adversarial vulnerabilities. As the Stanford HAI AI Index Report emphasizes, progress in AI capabilities necessitates a parallel focus on “red-teaming” and robust evaluation. TDA represents one of the latest AI adversarial attack detection methods that can contribute to these new standards for AI safety and security.

Topological Shields: From Theory to Practice

The application of Topological Data Analysis to AI security marks a shift from purely statistical defenses to ones grounded in the fundamental geometry of data. With benchmarked performance for this Topological Data Analysis AI adversarial attack defense showing over 98% accuracy in detecting strong attacks, TDA is no longer a theoretical concept but a validated technique. It provides a new lens for understanding and identifying how adversarial examples corrupt a model’s internal “reasoning” process.

While challenges in computational efficiency and defense against adaptive attackers remain, the method adds a critical and complementary layer to the security stack. As AI systems become more autonomous and influential, defenses that can assess the structural integrity of their operations are essential. As attackers inevitably adapt to these new geometric defenses, how can the computational demands of TDA be optimized for real-time protection in the most critical systems?

Tags

Read More From AI Buzz

Perplexity pplx-embed: SOTA Open-Source Models for RAG

Perplexity AI has released pplx-embed, a new suite of state-of-the-art multilingual embedding models, making a significant contribution to the open-source community and revealing a key aspect of its corporate strategy. This Perplexity pplx-embed open source release, built on the Qwen3 architecture and distributed under a permissive MIT License, provides developers with a powerful new tool […]

New AI Agent Benchmark: LangGraph vs CrewAI for Production

A comprehensive new benchmark analysis of leading AI agent frameworks has crystallized a fundamental challenge for developers: choosing between the rapid development speed ideal for prototyping and the high-consistency output required for production. The data-driven study by Lukasz Grochal evaluates prominent tools like LangGraph, CrewAI, and Microsoft’s new Agent Framework, revealing stark tradeoffs in performance, […]