Google DeepMind Introduces Gemini Robotics for Enhanced AI-Powered Robot Capabilities

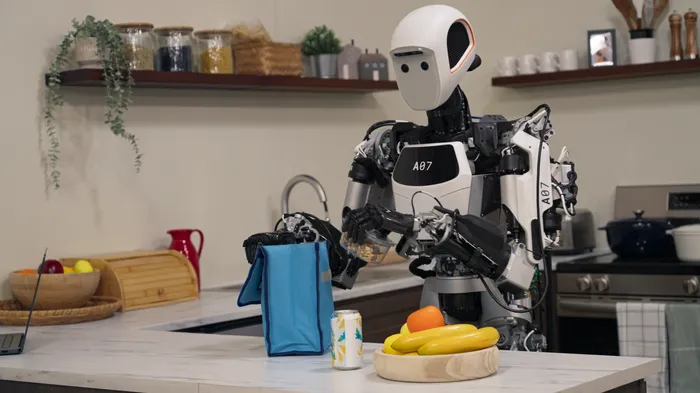

In a significant leap forward for artificial intelligence and robotics, Google DeepMind has introduced two groundbreaking AI models designed to transform how robots interact with the physical world. Gemini Robotics and Gemini Robotics-ER (Embodied Reasoning) promise to enable robots to handle a wider variety of real-world tasks with unprecedented adaptability, interactivity, and precision.

Bringing Multimodal Intelligence to Physical Robotics

Google DeepMind built Gemini Robotics on its Gemini 2.0 model. The technology represents a shift in how robots understand and interact with their environment, enabling them to perform complex tasks with greater flexibility.

At its core, Gemini Robotics is a vision-language-action model capable of interpreting visual and linguistic inputs and translating them into precise physical actions. What sets it apart is its ability to understand and adapt to new situations without requiring specific prior training—a remarkable advancement in robotic capabilities.

Carolina Parada, Senior Director and Head of Robotics at Google DeepMind, explained during a press briefing: “Gemini Robotics draws from Gemini’s multimodal world understanding and transfers it to the real world by adding physical actions as a new modality.”

Google’s vision extends beyond specific robot designs. According to Analytics India Magazine, the company aims to develop AI that can work for any robot, regardless of shape or size. Meanwhile, industry analysts at AINvest believe this technology has the potential to reshape industries and redefine human-robot interaction.

Three Key Pillars: Generality, Interactivity, and Dexterity

Generality: Thriving in Unpredictable Environments

One of Gemini Robotics’ most impressive features is its generality—the ability to adapt to unfamiliar situations, objects, and environments without specialized training. This adaptability comes from Gemini’s sophisticated understanding of the world and its ability to reason across different modalities including text, images, and actions.

Gadgets360 reports that in internal testing, Gemini Robotics more than doubled the performance of other leading vision-language-action models on comprehensive generalization benchmarks. This is a crucial step toward robots that can function effectively in unstructured, unpredictable real-world settings.

Interactivity: Natural Human-Robot Collaboration

Gemini Robotics excels at human interaction, understanding and responding to natural language instructions in real time. This enables fluid communication and collaboration between humans and robots.

The Robot Report highlights how the system can adapt to changes in the environment or instructions on the fly, making it particularly valuable for dynamic real-world scenarios. This level of interactivity opens new possibilities for human-robot teamwork, positioning robots as intelligent assistants capable of understanding context and intent.

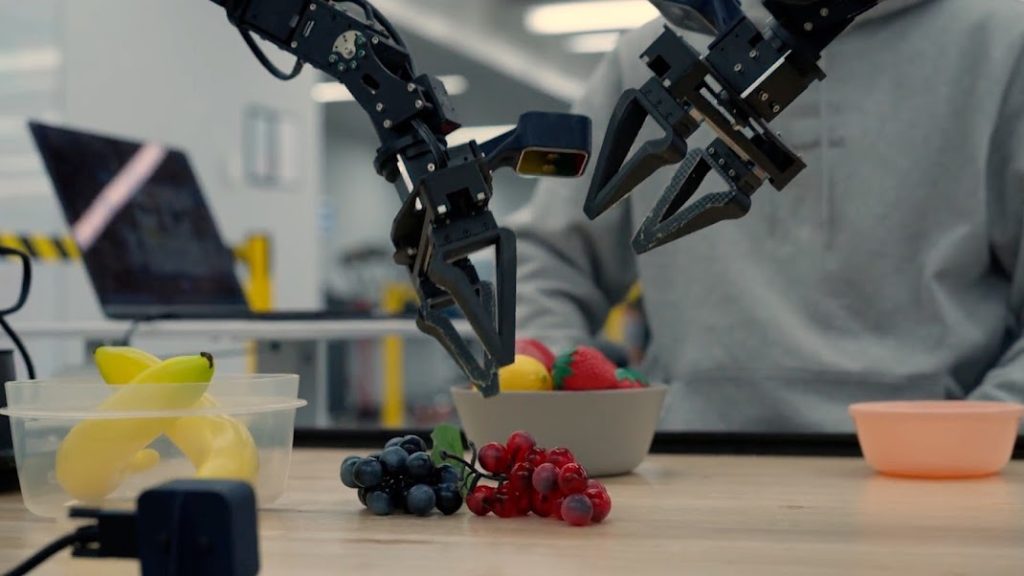

Dexterity: Mastering Complex Physical Tasks

The third pillar of Gemini Robotics is its dexterity—enabling robots to perform complex, multi-step tasks requiring precise object manipulation. This capability stems from its ability to reason about physical spaces and translate instructions into precise actions, as described by The Robot Report.

DeepMind researchers trained the model primarily using data from the bi-arm robotic platform, ALOHA 2. Parada emphasized, “While we have made progress in each of these areas individually in the past with general robotics, we’re bringing drastically increasing performance in all three areas with a single model. This enables us to build robots that are more capable, more responsive, and more robust to changes in their environment.”

Gemini Robotics-ER: Advanced Spatial Understanding

Complementing Gemini Robotics is Gemini Robotics-ER (Embodied Reasoning), a specialized model that enhances robotic understanding of complex environments. This model allows robots to understand and reason about dynamic, three-dimensional situations.

For example, when packing a lunchbox, a robot using Gemini Robotics-ER can understand spatial relationships between items, comprehend the lunchbox’s opening mechanism, and determine optimal item placement. The model achieves significant improvements in tasks requiring spatial awareness and planning.

Importantly, Gemini Robotics-ER is designed to integrate with existing robotic infrastructure. It can work alongside current low-level controllers, including collision avoidance and force-limiting systems. This integration enables new capabilities powered by advanced reasoning while leveraging the safety and control of existing systems.
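To make the layering concrete, here is a minimal Python sketch of how a command proposed by a high-level reasoning model might pass through an existing low-level safety layer before reaching the robot. It is an illustration only, not DeepMind's implementation or API: the names `MotionCommand`, `SafetyLimits`, and `apply_low_level_safety` are hypothetical, the limit values are made up, and a simple workspace bounding box stands in for real collision avoidance.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class MotionCommand:
    """Hypothetical command proposed by a high-level reasoning model."""
    target_xyz: Vec3      # desired gripper position, in metres
    grip_force_n: float   # requested grip force, in newtons


@dataclass(frozen=True)
class SafetyLimits:
    """Hypothetical limits owned by the robot's existing low-level controller."""
    max_force_n: float
    workspace_min: Vec3
    workspace_max: Vec3


def apply_low_level_safety(cmd: MotionCommand, limits: SafetyLimits) -> Optional[MotionCommand]:
    """Return a safe version of cmd, or None if the target is rejected."""
    # Stand-in for collision avoidance: reject targets outside the workspace box.
    for value, low, high in zip(cmd.target_xyz, limits.workspace_min, limits.workspace_max):
        if not low <= value <= high:
            return None
    # Force limiting: clamp the requested force into [0, max_force_n].
    safe_force = min(max(cmd.grip_force_n, 0.0), limits.max_force_n)
    return MotionCommand(cmd.target_xyz, safe_force)


# Illustrative limits: 20 N maximum force, a box-shaped reachable workspace.
limits = SafetyLimits(20.0, (0.0, -0.5, 0.0), (0.8, 0.5, 0.6))
clamped = apply_low_level_safety(MotionCommand((0.4, 0.1, 0.2), 35.0), limits)
rejected = apply_low_level_safety(MotionCommand((1.2, 0.0, 0.2), 5.0), limits)
```

In this sketch the first command is passed through with its force clamped to 20 N, while the second is rejected because its target lies outside the workspace. The point of the design is that the reasoning model only proposes actions; the existing controller keeps the final say over what the hardware actually does.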

Safety First: A Multi-Layered Approach

DeepMind has placed safety at the center of its robotics initiative. The company is developing a comprehensive, layered approach to ensure safe and responsible robot behavior.

Vikas Sindhwani, a researcher at Google DeepMind, explained that Gemini Robotics-ER models “are trained to evaluate whether or not a potential action is safe to perform in a given scenario.” This represents just one layer of the safety framework.

Drawing inspiration from Isaac Asimov’s Three Laws of Robotics, DeepMind is also developing a “Robot Constitution”—a framework that allows for the incorporation of safety guidelines expressed in natural language to guide robot behavior.

To advance safety research further, DeepMind is introducing a new dataset called ASIMOV. This dataset provides a standardized benchmark for assessing the effectiveness of different safety mechanisms. These new frameworks and testing methodologies will contribute to building more reliable and trustworthy AI-powered robots.

Industry Partnerships and Future Vision

Google DeepMind isn’t developing this technology in isolation. The company is actively collaborating with leading robotics firms to evaluate and refine Gemini Robotics-ER. These “trusted testers” include Agile Robots, Agility Robotics, Boston Dynamics, and Enchanted Tools.

Gadgets360 also reports that Google is partnering with Apptronik to build Gemini 2.0-powered humanoid robots, further expanding the practical applications of this technology.

“We’re very focused on building the intelligence that is going to be able to understand the physical world and be able to act on that physical world,” Parada explained. “We’re very excited to basically leverage this across multiple embodiments and many applications.”

Market Impact and Competitive Landscape

The robotics and AI market is experiencing rapid growth, driven by increasing automation demands and technological advancements. AINvest reports that the global artificial intelligence in robotics market is expected to grow at a substantial compound annual growth rate, presenting significant opportunities for companies like Google DeepMind.

As robots become more prevalent across industries, regulatory considerations around safety, ethics, and data privacy will become increasingly important. Organizations like the International Organization for Standardization (ISO) have developed robot safety standards, such as ISO 10218 for industrial robots, to prevent accidents and injuries.

In this competitive landscape, DeepMind’s combined offering of Gemini Robotics and Gemini Robotics-ER, along with its commitment to safety and collaboration, provides a strong competitive advantage. The focus on generality, interactivity, and dexterity, coupled with advanced reasoning capabilities, positions DeepMind as a leader in this rapidly evolving field.

A Transformative Future

The introduction of Gemini Robotics and Gemini Robotics-ER represents a pivotal moment in robotics development. These technologies have the potential to transform industries ranging from manufacturing and logistics to healthcare and home assistance.

By enabling robots to adapt to new situations, interact naturally with humans, and perform complex tasks with precision, DeepMind is opening up unprecedented possibilities. As the company continues to refine these technologies and expand partnerships, we can expect increasingly sophisticated and capable robots to emerge.

The long-term implications are vast, potentially leading to a future where robots play an increasingly integral role in solving complex problems and enhancing human capabilities. As this future unfolds, continued focus on safety, ethical considerations, and responsible development will be essential to realizing the full potential of AI-powered robotics.
