Google QEC Milestone Validates Roadmap, Quantum Stocks Surge

In a development that sent ripples from research labs to Wall Street, Google’s Quantum AI division announced a landmark achievement in December 2025, successfully demonstrating a core principle of quantum error correction. For the first time, researchers showed it was possible to reduce computational errors by increasing the number of physical quantum bits (qubits) used to form a single, more stable logical qubit. This technical validation of a long-held theory served as a pivotal moment for the entire quantum computing industry, providing tangible evidence that one of the technology’s most formidable obstacles is practically solvable. The news, amplified by a year of rapid advancements from competitors, ignited a stock market frenzy for quantum-focused companies as investors recalibrated their timelines for the technology’s commercial viability.

Key Points

- Google demonstrated for the first time that scaling physical qubits can reduce logical qubit error rates.

- This breakthrough validates the primary engineering roadmap toward building fault-tolerant quantum computers.

- The news catalyzed a significant surge in pure-play quantum computing stocks as investor confidence grew.

- Company-specific execution, such as product delays, continues to drive stock volatility amid sector-wide optimism.

Cracking the Quantum Noise Barrier

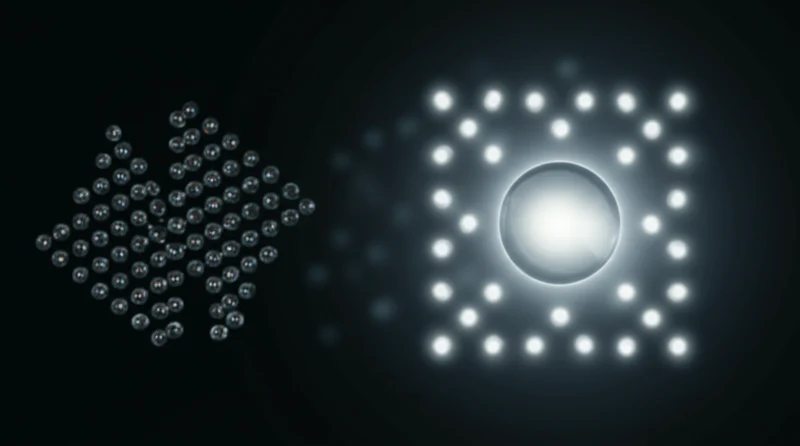

The central challenge in quantum computing is decoherence, a process in which fragile qubits lose their quantum state to environmental “noise,” producing high error rates. For years, the proposed solution has been quantum error correction (QEC), a technique that encodes the information of a single “logical qubit” across many noisy physical qubits so that errors can be detected and corrected. Google says its experiment was the first to show this approach working in practice.

By encoding a logical qubit across a larger group of physical qubits, the system detected and corrected errors, achieving a net reduction in the logical error rate. As Alphabet CEO Sundar Pichai told the Financial Times, “for the first time, we’ve demonstrated that it’s possible to reduce errors by increasing the number of qubits, a fundamental concept of quantum error correction.” In its own 2025 research recap, Google framed the achievement as part of a year of “relentless progress” that moved the technology’s trajectory “from a tool to a utility.” The result is a crucial “necessary but not sufficient step”: it confirms that the primary strategy pursued by virtually all leading quantum hardware companies is a viable path toward fault tolerance, without yet delivering a fault-tolerant machine.
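The idea that more physical qubits can mean fewer logical errors can be illustrated with a classical toy model. The sketch below is not Google’s method or its code. It assumes a simple repetition code in which one bit is copied `n` times, each copy flips independently with probability `p`, and the value is recovered by majority vote. The function name `logical_error_rate` and the sample values of `p` and `n` are illustrative assumptions.

```python
from math import comb


def logical_error_rate(n: int, p: float) -> float:
    """Chance that a majority vote over n noisy copies gives the wrong bit.

    Toy model (an assumption, not a real quantum code): each of the n
    copies flips independently with probability p, and the vote fails
    when more than half of the copies have flipped.
    """
    if n < 1 or n % 2 == 0:
        raise ValueError("n must be a positive odd integer")
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability between 0 and 1")
    # Sum the binomial probabilities of every outcome with a flipped majority.
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


def main() -> None:
    # Compare a low physical error rate with one above this toy's threshold.
    for p in (0.01, 0.6):
        print(f"physical error rate p = {p}")
        for n in (1, 3, 5, 7):
            print(f"  n = {n}: logical error rate = {logical_error_rate(n, p):.3e}")


if __name__ == "__main__":
    main()
```

In this toy, adding copies helps only when `p` is below 0.5; above that, a larger code makes the encoded bit less reliable. Real quantum codes must also handle phase errors and noisy measurements, so their thresholds are far stricter, which is why showing that errors fall as a code grows on actual hardware is treated as a milestone.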

Quantum Ripples on Wall Street

The technical progress announced by Google had a direct and pronounced impact on financial markets. Valuations of publicly traded quantum companies surged as investors reacted to the perceived de-risking of the technology’s long-term roadmap. A year-in-review analysis from Constellation Research noted that “Pure play quantum stocks were hot” as the boardroom conversation began shifting from AI to quantum computing plans.

This market excitement was reflected in specific stock activity. After the year’s developments, analysts raised their price target for D-Wave Quantum (QBTS), citing “Qubit Control and Error Correction Advances.” The market also showed its sensitivity to execution risk, however. Rigetti Computing’s (RGTI) stock slipped in early January 2026 after the company announced a delay in its 108-qubit system, a sign that investors are now scrutinizing individual company performance alongside sector-wide breakthroughs.

Symphony of Quantum Milestones

Google’s result landed with more force because it did not occur in a vacuum. The year 2025 was marked by accelerated development across the landscape, with one report noting “a new development emerging almost weekly.” This collective momentum created a powerful narrative of industry-wide progress that challenged earlier skepticism, such as Nvidia CEO Jensen Huang’s comment at CES 2025 that useful quantum computing was 15-30 years away.

Other key industry players contributed to this narrative. D-Wave signaled its ambition by acquiring Quantum Circuits for $550M to expand into gate-based systems. In February 2025, Microsoft launched its Majorana 1 chip, pursuing a different path to fault tolerance built on topological qubits, which are designed to be inherently more stable. Meanwhile, IonQ secured projects with DARPA and AstraZeneca, demonstrating a focus on finding real-world use cases for today’s intermediate-scale machines.

From Theory to Engineering Marathon

The events of late 2025, led by Google’s quantum error correction result, represent a significant inflection point. The validation of the theoretical roadmap toward fault-tolerant machines has transformed the field from a primarily scientific endeavor into a high-stakes engineering race. The focus now shifts to the immense challenges of scaling systems, improving qubit quality, and implementing more sophisticated correction codes. While the market frenzy was a rational response to clearing a major scientific hurdle, the path ahead remains long, with one analysis cautioning that the industry is still “years away from big commercial adoption.” Which companies will demonstrate the execution discipline to lead the next phase of this technological marathon?
