GPT-5.3 Codex Review Fails to Grasp Software Architecture

A recent developer experiment testing GPT-5.3 Codex on a custom .NET data access library offers a concrete, real-world demonstration of the gap between AI's command of syntax and its blindness to strategy. The analysis, detailed in a HackerNoon article, found that the model performed impressively as a line-by-line code inspector but failed to grasp the project's core architectural intent. It recommended a sweeping refactor that would have undermined the library's primary goal: high performance, achieved by deliberately deviating from standard coding patterns.

This case study gives the industry a useful data point, moving the discussion of AI code review limitations from the theoretical to the practical. It shows that while AI can identify what is technically incorrect based on learned patterns, it cannot yet judge what is strategically correct given project-specific context and trade-offs. The incident highlights the irreplaceable role of human architects in guiding high-level software design, even as AI becomes an increasingly powerful tool for implementation-level tasks.

Key Points

- A developer’s test shows GPT-5.3 Codex excels at catching micro-level issues such as SQL injection risks and style violations.

- The AI’s primary recommendation was a macro-level failure, suggesting a refactor that missed the library’s architectural purpose.

- This suggests that AI models, trained largely on common patterns, tend to flag intentional, performance-driven deviations as flaws.

- The analysis supports the view of AI as a powerful developer assistant, not a replacement for strategic human oversight.

AI’s Two Faces: Meticulous Coder, Clueless Architect

The experiment described in the HackerNoon article yielded two very different reviews from a single AI. The developer submitted a bespoke .NET data access library, deliberately built without a standard Object-Relational Mapper (ORM) to retain fine-grained control over raw SQL queries for maximum performance.

At the micro level, GPT-5.3 Codex acted, in the developer’s words, like a “very meticulous, very fast junior developer.” It flagged potential SQL injection vulnerabilities, suggested replacing a `foreach` loop with a LINQ expression, identified variables lacking null checks that could throw a `NullReferenceException`, and even enforced team-specific naming conventions. These findings show the model’s strong grasp of syntax, common bugs, and established coding hygiene.
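The SQL injection finding is the easiest of these to picture. The article does not reproduce the library's C# code, so the following is only a language-neutral sketch in Python's built-in `sqlite3` module. It assumes a hypothetical `users` table and contrasts SQL built by string interpolation with a parameterized query. In .NET the same idea is expressed through command parameters rather than string concatenation.

```python
import sqlite3

# Hypothetical table and rows, invented for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])


def find_user_unsafe(name: str) -> list[tuple]:
    # Builds SQL by string interpolation, the pattern a reviewer should flag:
    # a crafted `name` can rewrite the WHERE clause.
    sql = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(sql).fetchall()


def find_user_safe(name: str) -> list[tuple]:
    # Sends the value as a bound parameter, so the driver treats it as data, not SQL.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


payload = "x' OR '1'='1"
print(len(find_user_unsafe(payload)))  # 2: the injected OR matches every row
print(find_user_safe(payload))         # []: no user is literally named that
```

Hand-written SQL is not inherently unsafe. Keeping it safe simply depends on binding values as parameters, which is exactly the kind of local, pattern-level check the model handled well.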

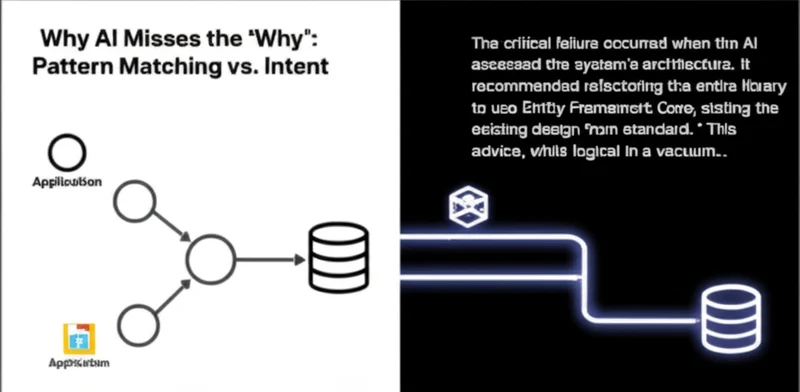

The critical failure occurred when the AI assessed the system’s architecture. It recommended refactoring the entire library to use Entity Framework Core, stating the existing design “deviates from standard .NET data access practices.” This advice, while logical in a vacuum, completely missed the “why” behind the design. The manual SQL construction wasn’t a mistake; it was a deliberate, performance-driven choice. The AI’s suggestion would have reintroduced the very performance bottlenecks the library was built to eliminate, revealing a profound gap in the AI’s understanding of strategic intent.
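To make "manual SQL construction" concrete, here is a hypothetical sketch of the general style, again in Python with `sqlite3` because the article shows none of the library's code. The names `orders`, `order_lines`, `OrderSummary`, and `order_totals` are invented for illustration. The sketch shows one hand-written, parameterized query that joins and aggregates in SQL and maps each row directly into a small result type. It makes no performance claim. It only illustrates the kind of control the author chose to keep instead of delegating query generation to an ORM such as Entity Framework Core.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderSummary:
    # Only the fields this query needs: a plain value type, no change tracking.
    order_id: int
    total_cents: int


def open_demo_db() -> sqlite3.Connection:
    # Hypothetical schema and sample rows, invented for illustration.
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, notes TEXT);
        CREATE TABLE order_lines (order_id INTEGER, qty INTEGER, unit_cents INTEGER);
        INSERT INTO orders VALUES (1, 7, 'a'), (2, 7, 'b'), (3, 8, 'c');
        INSERT INTO order_lines VALUES (1, 2, 500), (1, 1, 250), (2, 3, 100), (3, 1, 999);
        """
    )
    return conn


def order_totals(conn: sqlite3.Connection, customer_id: int) -> list[OrderSummary]:
    # One hand-written, parameterized query: the join and aggregation run in SQL,
    # and only the two columns the caller needs are returned.
    sql = """
        SELECT o.id, SUM(l.qty * l.unit_cents)
        FROM orders AS o
        JOIN order_lines AS l ON l.order_id = o.id
        WHERE o.customer_id = ?
        GROUP BY o.id
        ORDER BY o.id
    """
    # Map each result tuple straight into the result type, with no ORM layer.
    return [OrderSummary(oid, total) for oid, total in conn.execute(sql, (customer_id,))]


conn = open_demo_db()
totals = order_totals(conn, 7)
print(totals)
# [OrderSummary(order_id=1, total_cents=1250), OrderSummary(order_id=2, total_cents=300)]
```

A reviewer that pattern-matches against ORM-based code may read a layer like this as "non-standard." Whether it is the right design depends on the performance goals behind it, which is the context the model lacked.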

Why AI Misses the ‘Why’: Pattern Matching vs. Intent

The failure in this .NET library review is not an isolated glitch but a reflection of how today’s large language models work. According to one industry analysis, these systems are engineered with a heavy focus on “step-by-step reasoning patterns” and optimized for structured coding performance, which makes them adept at localized, sequential problem-solving. That would explain the model’s success at finding a bug within a single method.

However, this strength creates a blind spot. Trained on vast datasets of public code, most of which uses standard frameworks, LLMs develop a strong bias toward conventional patterns. When a model encounters code that intentionally deviates from the norm, it often flags the deviation as an error because it lacks the context to understand the strategic reason behind it. It sees the pattern violation but cannot infer the higher-level goal, such as performance optimization, that motivated it.

This challenge is a central topic in AI research. A paper on agentic systems from the Vector Institute notes that current interpretability techniques are insufficient for understanding “context-dependent behaviors and compounding decisions,” which are the essence of software architecture. In this case, the model could not trace the architectural decision back to the requirement that drove it, a clear illustration of the divide between syntax checking and strategic analysis.

The Architect’s Role in an AI-Augmented World

This case study provides a grounded perspective on how to integrate AI into development workflows effectively. The technology is not a replacement for experienced engineers but a powerful augmentation tool—a “super-junior” developer that can accelerate productivity by handling well-defined, low-level tasks.

Its utility is highest in areas such as first-pass code reviews that catch common errors, boilerplate generation, and, as some analysts have pointed out, serving as a learning aid that explains its reasoning to junior developers, even when that reasoning is flawed. The AI can check whether code conforms to learned patterns, but it cannot yet determine whether that code is strategically right for the specific business problem it is meant to solve.

Human oversight therefore remains the last and most important line of strategic defense. Tasks that require understanding business context, evaluating performance trade-offs, and planning for long-term maintainability are still firmly in the human domain. Marketing may tout an AI’s ability to maintain “consistency between frontend components, backend APIs, and database schemas,” but this experiment suggests that such consistency is measured only against the standard patterns the AI already knows.

The Enduring Value of Human Context

The GPT-5.3 Codex review serves as a valuable real-world benchmark for the capabilities of modern AI coding assistants. Its success at the granular level points to real value in improving developer productivity and catching subtle implementation errors: it streamlines the “how” of writing code efficiently and correctly.

However, its complete inability to grasp the “why” behind an unconventional architecture—a failure that would have destroyed the library’s primary value proposition—underscores that understanding intent, context, and strategic trade-offs remains a distinctly human form of intelligence. The role of the senior developer and software architect is not diminished by AI; it is clarified and elevated. As AI handles more of the tactical execution, the strategic decisions made by humans become more critical than ever. How can we design future AI systems to not only see the code but also understand the vision behind it?
