ICLR AI Paper Controversy Sparks Debate on Ethics and Transparency in Peer Review

A new ethical dilemma is shaking academic publishing as AI-generated research papers begin to appear at scientific conferences. The recent controversy at the International Conference on Learning Representations (ICLR), one of the most prestigious venues for machine learning research, has ignited an intense debate about the boundaries between human and machine contributions to science.

When AI Authors Submit Papers: The ICLR Controversy

A storm of controversy has erupted over several AI-generated studies submitted to workshops at this year’s ICLR, some of them without any disclosure to the conference, raising hard questions about what scientific peer review is for.

Three AI research labs (Sakana, Intology, and Autoscience) have claimed success in having AI-generated studies accepted to ICLR workshops. Sakana AI publicly documented their experiment in “AI-Scientist: First Publication,” while Intology announced their achievement via social media. Autoscience boldly characterized their AI system, CARL, as “the first AI system to produce academically peer-reviewed research.” At ICLR, workshop organizers typically review these submissions before they are published in the conference’s workshop track.

A crucial ethical distinction separates the labs’ approaches. Sakana AI took the transparent route, informing ICLR leadership of their intentions and securing peer reviewers’ consent before submitting. In stark contrast, Intology and Autoscience submitted without any such disclosure, an ICLR spokesperson confirmed to TechCrunch.

The Hidden Cost of AI Research: Exploiting Peer Review

The academic community’s reaction was swift and largely critical, with many prominent researchers condemning what they viewed as an exploitation of the scientific peer review process.

“All these AI scientist papers are using peer-reviewed venues as their human evals, but no one consented to providing this free labor,” argued Prithviraj Ammanabrolu, an assistant professor of computer science at UC San Diego. “It makes me lose respect for all those involved regardless of how impressive the system is. Please disclose this to the editors.”

This controversy spotlights a peer review system that is already under strain. A recent Nature survey found that 40% of academics spend two to four hours reviewing a single study, a significant investment of specialized expertise. Meanwhile, submission volumes have skyrocketed: NeurIPS, the largest AI conference, received 17,491 submissions in the past year, a 41% increase from 12,345 in 2023.

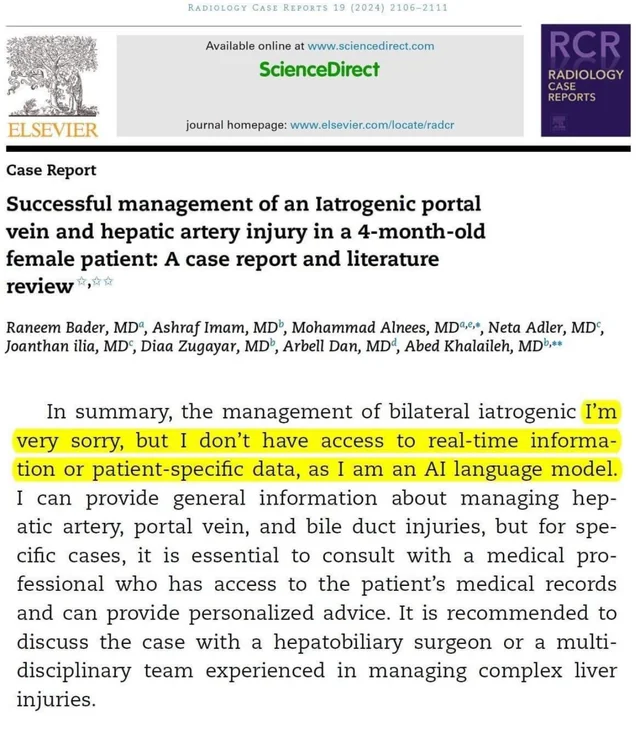

The infiltration of AI-authored papers compounds an existing problem. Research published on arXiv suggests that between 6.5% and 16.9% of papers submitted to AI conferences in 2023 already contained synthetic text. But the deliberate use of peer review as a benchmarking tool for commercial AI systems represents a troubling new development.

Crossing the Line: When Peer Review Becomes Marketing

Intology inflamed tensions further when they boasted on social media that their “papers received unanimously positive reviews” and that reviewers had praised one AI-generated study’s “clever idea[s].” This overt attempt to leverage academic credibility for marketing purposes drew sharp criticism.

Ashwinee Panda, a postdoctoral fellow at the University of Maryland, condemned the practice, noting that submitting AI-generated papers without giving workshop organizers the opportunity to refuse them demonstrated a “lack of respect for human reviewers’ time.”

“Sakana reached out asking whether we would be willing to participate in their experiment for the workshop I’m organizing at ICLR,” Panda elaborated, “and I (we) said no […] I think submitting AI papers to a venue without contacting the [reviewers] is bad.”

Scientific Merit Under Scrutiny: Can AI Truly Innovate?

Beyond ethical considerations, researchers remain deeply skeptical about the actual scientific value of AI-generated papers. Even Sakana, despite their more transparent approach, acknowledged significant limitations in their experiment.

The company admitted their AI made “embarrassing” citation errors, and only one of their three submitted AI-generated papers would have met the standard for conference acceptance. In a move that contrasted with their competitors, Sakana withdrew their ICLR paper before publication “in the interest of transparency and respect for ICLR convention.”

Building a New Framework: Solutions for the AI Research Era

This controversy has catalyzed calls for structural reform. Alexander Doria, co-founder of AI startup Pleias, advocated for a “regulated company/public agency” to conduct “high-quality” evaluations of AI-generated studies for a fee.

“Evals [should be] done by researchers fully compensated for their time,” Doria argued. “Academia is not there to outsource free [AI] evals.”

Some researchers argue that the path forward lies in specialized review frameworks that can address the distinct challenges of AI-generated content, including algorithmic bias, lack of transparency, and the risk of misleading claims. Another frequently raised proposal is to compensate reviewers specifically tasked with evaluating AI-generated submissions, in recognition of the additional expertise required.

The Collaborative Future: Humans and AI in Research

Rather than barring AI from the research process entirely, the scientific community is working toward establishing clear guidelines for its responsible integration. The focus is shifting toward leveraging AI’s strengths in data analysis, literature review, and identifying research directions, while maintaining essential human oversight for validity and ethical integrity.

With the global market for AI datasets and academic research licensing projected to reach $2.88 billion by 2033, growing at a compound annual growth rate (CAGR) of 25.7% from 2025, establishing proactive measures is increasingly urgent.

The risk-based approach of the European Union’s AI Act could serve as a model for future regulation of AI in research, for instance by applying stricter rules to papers that make significant scientific claims. The ultimate goal remains fostering productive collaboration between human researchers and AI systems, one that accelerates discovery while preserving scientific integrity.

As academia grapples with these emerging challenges, the ICLR controversy may prove to be a watershed moment in determining how AI shapes the future of scientific inquiry and publication.
