Inside AutoDS: The Bayesian Tech Powering AI2's AI Scientist

The Allen Institute for AI (AI2) has long pursued the development of an “AI Scientist” through initiatives like Project Alexandria, which aims to build systems that can reason and collaborate on scientific problems. This pursuit is part of a broader industry trend toward automated discovery, where AI moves beyond data analysis to autonomously design and execute experiments. A core technical advancement in this field is the use of Bayesian surprise, a mechanism for guiding exploration that underpins the architecture of hypothetical systems like AI2’s “AutoDS.” This approach represents a notable development in creating AI that exhibits a form of scientific curiosity, focusing not just on finding answers but on asking the most informative questions. Understanding this mechanism is key to grasping the latest in AI for scientific discovery.

Key Points

• Foundational research demonstrates that Bayesian surprise is a formal measure guiding AI to seek data expected to most significantly change its internal model, improving data efficiency in complex problem spaces.

• The market for AI in drug discovery, a prime application for this technology, was valued at $1.1 billion in 2022 and is projected to reach $4.0 billion by 2027, according to market analysis, reflecting strong industry adoption.

• Current implementations of “self-driving labs” integrate AI software with robotics, enabling what expert Alán Aspuru-Guzik describes as “thousands of experiments per day,” a scale impossible for human teams.

• A documented limitation, highlighted in a 2024 Nature perspective, is that while AI excels at optimization, it still struggles to independently define new scientific concepts or invent novel research tools.

Algorithmic Curiosity Unleashed

At the heart of an advanced automated discovery system such as AutoDS is a mechanism designed to mimic scientific curiosity. The key innovation lies in how it guides exploration. Unlike simple optimization, which seeks the best solution within a known landscape, this approach actively seeks out novelty and information.

Bayesian surprise is a formal measure of how much new data changes an agent’s probabilistic model of the world. As researchers describe it, it measures “the dissimilarity between the posterior and prior beliefs,” driving an agent to seek the data expected to change its internal model the most. This principle, detailed in foundational papers on the topic, prevents the AI from getting stuck exploiting a known phenomenon and instead encourages it to probe poorly understood areas, a critical function for open-ended discovery.
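To make the definition concrete, here is a minimal sketch that treats Bayesian surprise as the Kullback-Leibler divergence from the prior to the posterior over a small, discrete set of hypotheses. The two-hypothesis setup, the likelihood values, and the function names are illustrative assumptions, not details of AutoDS or any AI2 system.

```python
import math

def bayes_update(prior, likelihoods):
    """Return the posterior over hypotheses, given P(data | hypothesis) for each."""
    unnormalized = [p * l for p, l in zip(prior, likelihoods)]
    evidence = sum(unnormalized)
    return [u / evidence for u in unnormalized]

def bayesian_surprise(prior, posterior):
    """KL(posterior || prior) in bits. Assumes the prior is nonzero wherever
    the posterior is nonzero. Zero means the data changed nothing."""
    return sum(q * math.log2(q / p) for q, p in zip(posterior, prior) if q > 0)

# Hypothetical toy setup: two competing hypotheses, initially equally plausible.
prior = [0.5, 0.5]

# An observation that both hypotheses predict equally well teaches nothing.
uninformative_obs = [0.5, 0.5]
# An observation that the first hypothesis predicts far better shifts beliefs.
decisive_obs = [0.9, 0.1]

surprise_uninformative = bayesian_surprise(prior, bayes_update(prior, uninformative_obs))
surprise_decisive = bayesian_surprise(prior, bayes_update(prior, decisive_obs))

print(f"uninformative: {surprise_uninformative:.3f} bits")
print(f"decisive:      {surprise_decisive:.3f} bits")
```

In this toy case the uninformative observation yields zero surprise, while the decisive one yields about half a bit, which is the kind of signal a curiosity-driven agent would prioritize.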

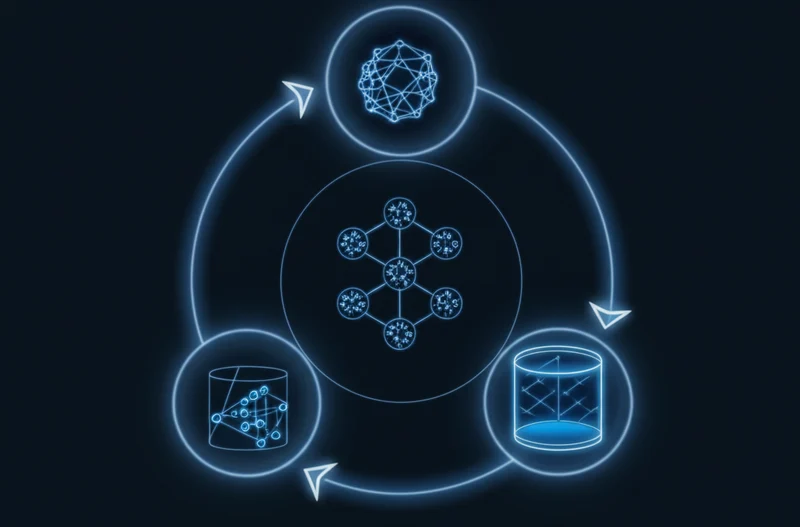

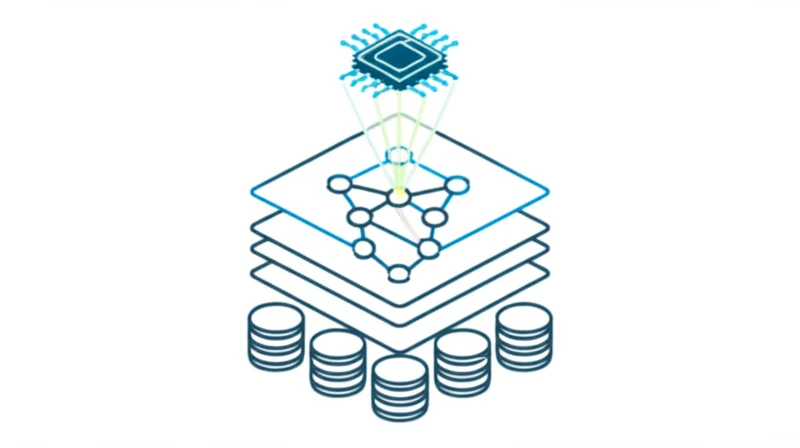

This principle is the core of a closed-loop “AI Scientist” architecture. The cycle begins with hypothesis generation, where LLMs can synthesize existing literature to propose novel ideas. Guided by Bayesian surprise, the AI then designs the most informative experiment to test its hypothesis. In advanced systems, these experiments are executed by robotic “cloud labs,” which then feed data back to the AI. The AI analyzes the results, updates its world model, and the cycle repeats, creating an increasingly sophisticated understanding of a scientific domain.
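The loop described above can be sketched in a few lines under heavy simplification. The example below assumes a discrete set of hypotheses, a known outcome model for each candidate experiment, and a lookup table standing in for the robotic lab. The experiment names (`assay_a`, `assay_b`) and all probabilities are hypothetical. The agent scores each experiment by its expected Bayesian surprise (the expected KL divergence from prior to posterior, averaged over possible outcomes), runs the top-scoring one, and updates its beliefs.

```python
import math

def posterior(prior, likelihoods):
    """Bayes update over a discrete hypothesis set."""
    joint = [p * l for p, l in zip(prior, likelihoods)]
    total = sum(joint)
    return [j / total for j in joint]

def kl_bits(q, p):
    """KL(q || p) in bits, used here as the surprise measure."""
    return sum(qi * math.log2(qi / pi) for qi, pi in zip(q, p) if qi > 0)

def expected_surprise(prior, outcome_model):
    """outcome_model[h][o] = P(outcome o | hypothesis h) for one experiment."""
    total = 0.0
    for o in range(len(outcome_model[0])):
        likelihoods = [row[o] for row in outcome_model]
        p_outcome = sum(p * l for p, l in zip(prior, likelihoods))
        if p_outcome > 0:
            total += p_outcome * kl_bits(posterior(prior, likelihoods), prior)
    return total

def choose_experiment(prior, experiments):
    """Pick the experiment whose outcome is expected to shift beliefs the most."""
    return max(experiments, key=lambda name: expected_surprise(prior, experiments[name]))

# Hypothetical toy: two hypotheses, two candidate experiments with binary outcomes.
experiments = {
    "assay_a": [[0.5, 0.5], [0.5, 0.5]],  # same outcome odds under both hypotheses
    "assay_b": [[0.9, 0.1], [0.2, 0.8]],  # outcomes discriminate between hypotheses
}
lab_results = {"assay_a": 0, "assay_b": 0}  # stand-in for data returned by a lab

belief = [0.5, 0.5]
for step in range(3):
    chosen = choose_experiment(belief, experiments)   # design
    outcome = lab_results[chosen]                     # execute
    likelihoods = [row[outcome] for row in experiments[chosen]]
    belief = posterior(belief, likelihoods)           # analyze and update
    print(step, chosen, [round(b, 3) for b in belief])
```

The agent ignores `assay_a`, whose outcome cannot distinguish the hypotheses, and repeatedly runs `assay_b` until its belief concentrates on the first hypothesis. A real system would also have to generate the hypotheses and outcome models, which this sketch takes as given.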

From Adam to AlphaFold: AI’s R&D Evolution

The concept of an AI scientist such as AutoDS at the Allen Institute builds on two decades of documented progress. The journey began with pioneers like “Adam,” a robot scientist that autonomously discovered novel genetic functions in yeast in 2009. This was followed by landmark achievements like DeepMind’s AlphaFold, which largely solved the 50-year-old challenge of protein structure prediction and released over 200 million structure predictions, fundamentally altering biology.

These technical milestones have paved the way for significant commercial investment. The market for AI in drug discovery is expanding at a CAGR of 29.6%, driven by the need to slash development costs and timelines. According to market analysis, AI can reduce early-stage discovery from 4.5 years to just one. This clear ROI explains why a 2023 report found 71% of R&D leaders are already using or experimenting with AI/ML in their workflows.
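As a quick sanity check, the growth rate can be recomputed from the market figures quoted in the Key Points ($1.1 billion in 2022, $4.0 billion projected for 2027). The helper below uses the standard compound annual growth rate formula, and the function name is our own.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1

# Rounded endpoints from the market figures above, in billions of dollars.
implied_rate = cagr(1.1, 4.0, 2027 - 2022)
print(f"{implied_rate:.1%}")
```

The rounded endpoints imply roughly 29.5% per year, in line with the quoted 29.6% once rounding in the published values is taken into account.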

AI2’s own work, including the Semantic Scholar search engine and research from figures like Peter Clark on commonsense reasoning, provides the foundational layers for these more ambitious discovery engines. The goal is to create a system that not only reads science but reasons from it.

Mapping AI’s Exploratory Toolbox

Bayesian surprise is a powerful method for data-efficient exploration, but it is one of several approaches being deployed in the field of automated science. Each method presents a different set of trade-offs, so how automated discovery AI compares with human researchers depends on the specific scientific problem.

For instance, Reinforcement Learning (RL) with intrinsic motivation rewards an AI for exploring new states, which can lead to highly creative solutions but is often too sample-inefficient for costly physical experiments. In contrast, Evolutionary Algorithms, like DeepMind’s FunSearch, are extremely effective for discovering new algorithms in well-defined computational spaces, having found novel solutions to known mathematical problems. However, they are less suited for physical sciences where the search space is not easily defined as code.

LLM-driven hypothesis generation excels at synthesizing cross-disciplinary knowledge but lacks a true causal understanding and is prone to hallucination, requiring rigorous verification. The Bayesian approach strikes a balance, offering high data efficiency for exploring complex physical problems. Its main challenges are its computational expense and the difficulty of defining a perfect “surprise” metric for every domain.

Redefining Scientific Partnership

The progression toward automated discovery engines marks a significant shift in the scientific process. These systems demonstrate the capacity to accelerate R&D cycles by orders of magnitude, compressing years of iterative benchwork into months or weeks. The primary impact is the changing role of the human expert, who transitions from performing tactical experiments to directing a fleet of AI agents, validating their most surprising findings, and weaving the results into a broader human understanding. As these systems increasingly handle the “how” of experimentation, how will human scientists redefine the “why” and “what” of scientific inquiry?
