Kaggle Game Arena: AI Evaluation for Strategic Reasoning

Kaggle, in collaboration with Google DeepMind, has launched the Kaggle Game Arena, a new platform designed to benchmark the strategic decision-making of advanced AI models. Announced this month, the initiative moves AI evaluation away from static tasks like language translation and into the dynamic, competitive environment of strategy games, starting with chess, according to a report from InfoQ. The partnership introduces a transparent and reproducible way to gauge the reasoning, planning, and adaptive skills of leading AI systems. By showing how models perform under direct, competitive pressure, the platform adds a new dimension to the ongoing debate about model capabilities.

Key Points

- Kaggle and Google DeepMind launched the Game Arena to benchmark AI models on strategic reasoning in competitive games.

- The platform pairs an open-source architecture, for transparency and reproducibility, with an all-play-all tournament format, for statistically reliable model comparison.

- This development shifts AI evaluation from static knowledge recall to assessing dynamic skills like planning and real-time adaptation.

- Inaugural competitors include models from OpenAI, Google, Anthropic, and xAI, establishing a new industry proving ground.

Crafting the Digital Chessboard

The Kaggle Game Arena is engineered to provide a robust and equitable environment for AI competition, centering its design on statistical reliability and transparency. This approach directly addresses common criticisms of other AI benchmarking methods. The platform provides a standardized setting where models compete using publicly available software modules called game harnesses, which enforce game rules and manage interactions, as detailed in the platform’s announcement.
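To make the idea of a game harness concrete, here is a minimal sketch in Python. It is not the Game Arena's actual harness code: the toy take-away game, the `play_game` function, the forfeit-on-illegal-move policy, and the example players are all assumptions chosen for brevity. The point it illustrates is that the harness, not the competing model, decides which moves are legal and when the game ends.

```python
from typing import Callable, List

# Hypothetical toy game used only for illustration: players alternately
# remove 1 to 3 stones, and whoever takes the last stone wins.
# A player is any callable that maps "stones remaining" to "stones to take".
Player = Callable[[int], int]


def legal_moves(stones: int) -> List[int]:
    """Moves the harness will accept in the current position."""
    return [n for n in (1, 2, 3) if n <= stones]


def play_game(first: Player, second: Player, stones: int = 10) -> int:
    """Run one game and return the index (0 or 1) of the winning player.

    Assumed policy for this sketch: an illegal move forfeits the game.
    """
    players = (first, second)
    turn = 0
    while True:
        move = players[turn](stones)
        if move not in legal_moves(stones):
            return 1 - turn  # opponent wins by forfeit
        stones -= move
        if stones == 0:
            return turn  # current player took the last stone
        turn = 1 - turn


# Illustrative stand-ins for models.
def take_one(stones: int) -> int:
    return 1


def leave_multiple_of_four(stones: int) -> int:
    # Leaves the opponent a multiple of four stones when that is possible.
    return stones % 4 or 1


def always_five(stones: int) -> int:
    return 5  # never legal in this toy game


winner = play_game(leave_multiple_of_four, take_one)
```

Because the rules live in the harness, every model is judged against the same definition of a legal move, which is what makes results comparable across developers.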

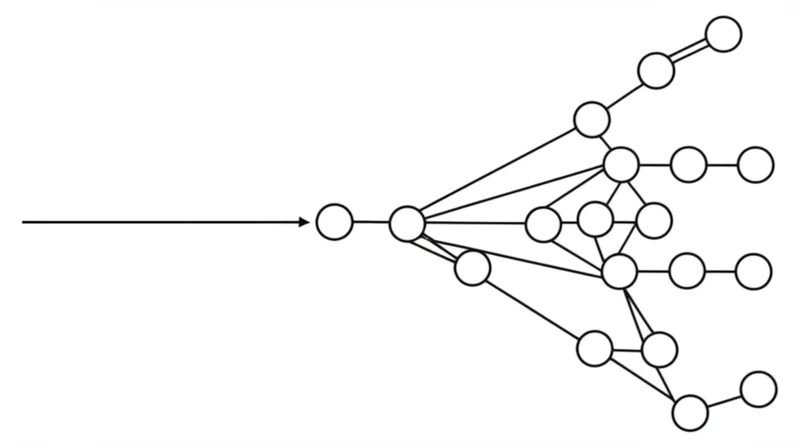

To generate statistically sound results and minimize the influence of chance, the Game Arena employs an “all-play-all” tournament structure. In this format, every participating model is pitted against every other model multiple times. This comprehensive approach produces a rich dataset that leads to more reliable rankings. The platform’s commitment to an open-source foundation, with both game environments and harnesses publicly available, fosters trust and allows researchers to inspect the methodology and reproduce results.
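The all-play-all format described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the platform's scheduling code: the function names, the placeholder model names, the 1 / 0.5 / 0 scoring, and the choice to let each model move first against each opponent are all hypothetical.

```python
from collections import defaultdict
from itertools import permutations
from typing import Callable, Dict, Iterable, List, Tuple

Pairing = Tuple[str, str]  # (moves first, moves second)


def all_play_all(names: Iterable[str], rounds: int = 1) -> List[Pairing]:
    """Every ordered pair of distinct models, repeated `rounds` times.

    Ordered pairs mean each model moves first once per round against
    each opponent (an assumption made for this sketch).
    """
    pairs = list(permutations(list(names), 2))
    return pairs * rounds


def standings(
    schedule: List[Pairing],
    play: Callable[[str, str], float],
) -> List[Tuple[str, float]]:
    """Total the points per model and sort from highest to lowest.

    `play(a, b)` returns 1.0 if `a` wins, 0.5 for a draw, 0.0 if `b` wins.
    """
    points: Dict[str, float] = defaultdict(float)
    for a, b in schedule:
        result = play(a, b)
        points[a] += result
        points[b] += 1.0 - result
    return sorted(points.items(), key=lambda kv: (-kv[1], kv[0]))


# Placeholder names and a deterministic stub in place of real games.
models = ["model_a", "model_b", "model_c"]
schedule = all_play_all(models, rounds=2)
table = standings(schedule, lambda a, b: 1.0 if a < b else 0.0)
```

With n models and r rounds this schedule contains n × (n − 1) × r games, so the number of games grows quadratically with the roster. That cost is what buys the larger sample behind each ranking.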

From Pattern Recognition to Strategic Thinking

The introduction of the Game Arena marks a pivotal evolution in how AI capabilities are measured. While existing platforms excel at evaluating models on tasks with discrete outputs, they fall short of assessing dynamic reasoning. The Game Arena belongs to a newer class of evaluation methods that go beyond static benchmarks and focus on skills central to more general intelligence.

Games like chess require models to think several moves ahead, anticipate opponent actions, and formulate long-term plans, providing a clear measure of logical depth. Unlike answering a static prompt, a game is an interactive process that tests a model’s ability to adapt its strategy in real time. As Kaggle user and chess player Sourabh Joshi noted, “Just like a chessboard reveals a grandmaster’s depth, this platform will reveal an LLM’s true mettle.” This sentiment captures the shift the Game Arena makes: from measuring what a model “knows” to measuring what it can “do.”

Silicon Gladiators Enter the Ring

The launch of this strategic reasoning benchmark is not happening in a vacuum; it aligns with and contributes to key trends within the industry. The initial lineup of competitors demonstrates broad support, featuring Anthropic’s Claude Opus 4, DeepSeek’s DeepSeek-R1, Google’s Gemini 2.5 Pro and Gemini 2.5 Flash, Moonshot AI’s Kimi-K2-Instruct, OpenAI’s o3 and o4-mini, and xAI’s Grok 4.

The platform creates a unified field where models from different developers can be directly compared, spurring innovation. This public competition will likely push AI labs to enhance their models’ reasoning capabilities. Furthermore, the skills tested are fundamental for more complex multi-agent AI systems. As companies like LinkedIn explore AI agents for complex workflows, the ability of an individual agent to strategize and adapt becomes critical, reflecting a broader industry trend toward more autonomous and capable AI agents.

The Game Arena serves as a foundational testing ground for the intelligence component of these future systems.

Chess Today, Complex Games Tomorrow

The announcement has been met with enthusiasm from the AI community. AI enthusiast Sebastian Zabala called the development “huge,” noting that “Chess is the perfect starting point” for testing models under “real-time, strategic pressure.” Similarly, AI evangelist Koho Okada suggested the platform “could redefine how we evaluate AI intelligence.”

However, the platform is not without its critics. Some researchers have raised valid questions about the transferability of skills honed in controlled game environments to messy, unpredictable real-world scenarios. This remains an open and important area for research. Looking ahead, Kaggle and DeepMind plan to expand the Game Arena beyond chess to include a variety of games designed to test different facets of strategic thought, such as adaptation under uncertainty, according to their roadmap.

When Algorithms Play to Win

The Kaggle Game Arena represents a notable development in the quest to build more capable and intelligent systems. By creating a standardized, competitive benchmark for skills beyond simple pattern recognition, it provides the industry with a new tool for measuring progress in artificial reasoning. The confirmed roadmap to include more complex games indicates a long-term commitment to this evaluation paradigm. As the arena expands, which game will ultimately prove to be the definitive test of an AI’s strategic mind?
