Lumina AI RCL Targets Lower TCO for Enterprise CPU-Based AI

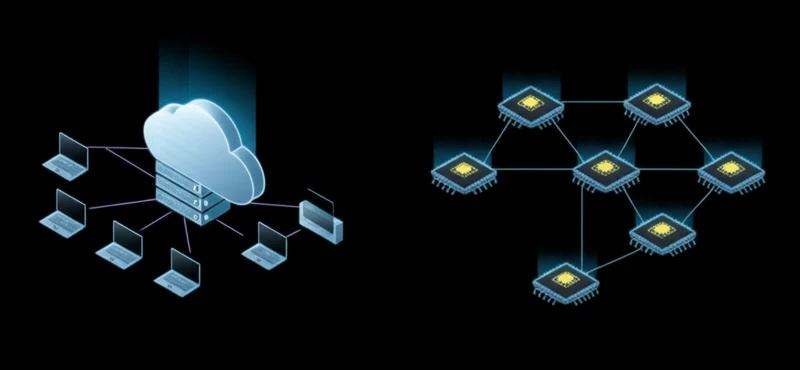

The debut of Lumina AI’s RCL 2.7.0, featuring native Linux support, marks a notable entry into the expanding market for GPU-free machine learning solutions. The release builds on a significant industry trend: moving AI inference from power-hungry, cloud-based GPUs to ubiquitous and cost-effective CPUs at the edge. By targeting the vast installed base of CPU-powered devices, from industrial servers to business laptops, Lumina AI enters a competitive arena defined by the need for accessible, power-efficient, and high-performance AI. The move is a strategic effort to meet the market’s demand for lower total cost of ownership (TCO) and real-time processing, and it positions Lumina’s runtime against established industry heavyweights. With it, the field of CPU-based AI inference gains a new contender focused on a critical deployment environment.

Key Points

• Current implementations of CPU-based inference achieve significant performance gains through techniques like model quantization, which can deliver speedups of 2-4x, and optimized software kernels that leverage advanced CPU instruction sets like AVX and VNNI.

• Lumina AI’s RCL 2.7.0 enters a mature market, competing directly with established frameworks such as Intel’s OpenVINO and Microsoft’s cross-platform ONNX Runtime, as well as community-driven projects like `llama.cpp` that have become benchmarks for CPU performance.

• The business case for GPU-free AI is supported by substantial market growth, with the Edge AI software market projected to reach $4.5 billion by 2028, driven by demands for lower TCO, data privacy, and real-time processing in industries like manufacturing and retail.

Silicon Symphony: Orchestrating CPU Inference

High-performance CPU inference is not a default capability but the result of sophisticated software optimization. A core technique is quantization, the process of reducing a model’s numerical precision from 32-bit floating-point (FP32) to more efficient formats like 8-bit integer (INT8). As documented by PyTorch, this method can yield speedups of 2-4x with minimal accuracy loss, because it shrinks the model’s memory footprint (each weight drops from 4 bytes to 1) and enables the specialized, high-speed integer math instructions built into modern CPUs.
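To make the arithmetic concrete, here is a minimal pure-Python sketch of symmetric per-tensor INT8 quantization followed by an integer dot product. It illustrates the general technique only; it is not PyTorch’s or Lumina AI’s implementation, and the function names and sample values are hypothetical. A real runtime performs the integer multiply-accumulate with vectorized CPU instructions rather than a Python loop.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: FP32 values -> INT8 codes plus one scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer (ties to even in Python) and clamp to int8.
    q = [max(-128, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Map INT8 codes back to approximate floating-point values."""
    return [x * scale for x in q]


def int8_dot(qa, scale_a, qb, scale_b):
    # Integer multiply-accumulate (the part INT8 SIMD instructions speed up),
    # followed by a single floating-point rescale at the end.
    acc = sum(x * y for x, y in zip(qa, qb))
    return acc * scale_a * scale_b


weights = [0.12, -0.50, 0.33, 0.91, -0.07, 0.25]
activations = [1.0, 0.2, -0.4, 0.8, 0.6, -1.1]

qw, sw = quantize_int8(weights)
qx, sx = quantize_int8(activations)

exact = sum(w * x for w, x in zip(weights, activations))
approx = int8_dot(qw, sw, qx, sx)
print(f"FP32 dot: {exact:.4f}  INT8 dot: {approx:.4f}")
# FP32 stores 4 bytes per value and INT8 stores 1, so weights shrink 4x.
print(f"bytes FP32: {4 * len(weights)}, bytes INT8: {len(qw)}")
```

The quantized result differs from the FP32 result only slightly, while the weights occupy a quarter of the memory; that trade is what the reported speedups rest on.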

These hardware features, such as AVX (Advanced Vector Extensions) and the VNNI (Vector Neural Network Instructions) extension at the heart of Intel’s Deep Learning Boost, are fundamental. They provide single-instruction, multiple-data (SIMD) operations that dramatically accelerate the matrix multiplications central to neural networks. An effective runtime like RCL must contain highly optimized computational routines, or “kernels,” tuned to exploit these instructions and the CPU’s cache hierarchy. Further gains come from graph-level optimizations such as layer fusion, a standard practice detailed in TensorFlow Lite’s documentation, in which several consecutive operations (for example, a convolution, a bias addition, and an activation) are merged into a single kernel so that intermediate results need not be written to memory and read back.
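The idea behind layer fusion can be sketched in plain Python with a dense layer followed by a bias add and a ReLU. This is an illustration of the concept under simplifying assumptions, not TensorFlow Lite’s or RCL’s kernel code; all names are hypothetical. Production runtimes fuse at the kernel level in native or generated code, where avoiding the intermediate buffers saves real memory traffic.

```python
def matvec(weights, x):
    # Pass 1: matrix-vector product, producing an intermediate list.
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def add_bias(y, bias):
    # Pass 2: elementwise bias add, producing another intermediate list.
    return [yi + b for yi, b in zip(y, bias)]


def relu(y):
    # Pass 3: elementwise activation.
    return [max(0.0, yi) for yi in y]


def unfused_dense_relu(weights, bias, x):
    """Three separate operators, each materializing its output."""
    return relu(add_bias(matvec(weights, x), bias))


def fused_dense_relu(weights, bias, x):
    """One pass: each output is accumulated, biased, and activated in place,
    so no intermediate lists are ever stored."""
    out = []
    for row, b in zip(weights, bias):
        acc = b
        for w, xi in zip(row, x):
            acc += w * xi
        out.append(acc if acc > 0.0 else 0.0)
    return out


W = [[1.0, -2.0, 0.5],
     [0.25, 0.5, -1.0]]
bias = [0.5, -1.0]
x = [2.0, 1.0, 4.0]
print(unfused_dense_relu(W, bias, x))  # [2.5, 0.0]
print(fused_dense_relu(W, bias, x))    # [2.5, 0.0]
```

Both versions compute the same function; the fused one simply does it in a single traversal, which is why fusion is a pure optimization rather than a change to the model.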

The emphasis on Linux support in RCL 2.7.0 is a strategic choice: Linux is the dominant operating system in cloud servers, industrial IoT, and developer environments. Native support eases integration into containerized deployments such as Docker and into existing CI/CD pipelines, a critical factor for enterprise and industrial adoption.
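Because kernel performance depends on which SIMD extensions a host exposes, a Linux deployment or CI script might check them before rollout. The sketch below parses the `flags` line of `/proc/cpuinfo` on x86 Linux (ARM hosts report a `Features` line instead, which this sketch does not handle). It is an assumed, illustrative helper with hypothetical function names and does not describe how RCL itself detects CPU features.

```python
from pathlib import Path

# x86 feature flags as the Linux kernel names them in /proc/cpuinfo.
SIMD_FLAGS = ("avx2", "avx512f", "avx512_vnni", "avx_vnni")


def cpu_flags(cpuinfo_text):
    """Return the set of feature flags from the first 'flags' line (x86 only)."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def simd_report(cpuinfo_text):
    """Map each SIMD extension of interest to whether the CPU advertises it."""
    flags = cpu_flags(cpuinfo_text)
    return {name: name in flags for name in SIMD_FLAGS}


if __name__ == "__main__":
    path = Path("/proc/cpuinfo")
    if path.exists():
        print(simd_report(path.read_text()))
    else:
        print("/proc/cpuinfo not found (not a Linux host?)")
```

Parsing text rather than reading the file inside `cpu_flags` keeps the logic testable in a CI job with canned `/proc/cpuinfo` samples.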

Titans Clash in Silicon Valley

Lumina AI’s RCL 2.7.0 does not operate in a vacuum; it steps into a competitive landscape populated by powerful, well-established players. In any comparison between RCL and OpenVINO, the key differences are maturity and hardware specificity. Intel’s OpenVINO toolkit, launched in 2018, demonstrated clear market demand for CPU-centric AI and offers deep optimization for Intel’s own hardware, including CPUs, integrated GPUs, and VPUs, all backed by extensive developer documentation.

For cross-platform flexibility, Microsoft’s ONNX Runtime stands as a major force, championing the interoperable Open Neural Network Exchange (ONNX) model format to deliver tuned performance across diverse hardware. On the community front, the `llama.cpp` project set a new benchmark for what is possible, proving that even large language models can run efficiently on consumer CPUs through aggressive quantization and meticulous C++ optimization. These technologies establish a high bar for performance and usability. The challenge for Lumina AI is to define the “sweet spot” where its runtime delivers an optimal balance of performance and cost, thereby creating a compelling value proposition.
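Part of what makes `llama.cpp`’s aggressive quantization workable is that its formats quantize weights in small blocks, each with its own scale, so one outlier only degrades its own block. The sketch below illustrates that general idea with a simplified symmetric 4-bit scheme. It is not `llama.cpp`’s actual data layout or code; the block size of 32 and the 16-bit per-block scale are assumptions chosen for illustration, and the function names are hypothetical.

```python
import random

BLOCK_SIZE = 32  # weights that share one scale (illustrative choice)


def quantize_q4_blocks(weights, block_size=BLOCK_SIZE):
    """Symmetric 4-bit quantization with one float scale per block."""
    blocks = []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        max_abs = max(abs(w) for w in block)
        scale = max_abs / 7.0 if max_abs > 0 else 1.0
        # Signed 4-bit codes span [-8, 7]; clamp after rounding.
        codes = [max(-8, min(7, round(w / scale))) for w in block]
        blocks.append((scale, codes))
    return blocks


def dequantize_q4_blocks(blocks):
    return [scale * c for scale, codes in blocks for c in codes]


def q4_storage_bytes(n_weights, block_size=BLOCK_SIZE, scale_bytes=2):
    # Assumes n_weights is a multiple of block_size. Two 4-bit codes pack
    # into one byte, and each block also stores its scale (16-bit assumed).
    assert n_weights % block_size == 0
    n_blocks = n_weights // block_size
    return n_blocks * (block_size // 2 + scale_bytes)


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]
blocks = quantize_q4_blocks(weights)
restored = dequantize_q4_blocks(blocks)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(len(blocks), q4_storage_bytes(len(weights)), 4 * len(weights))
# 128 2304 16384
```

Under these assumptions, 4,096 weights drop from 16,384 bytes in FP32 to 2,304 bytes, roughly a 7x reduction, at the cost of a bounded per-block rounding error.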

Dollars and MIPS: Edge AI Economics

The strategic shift toward GPU-free machine learning solutions is fundamentally an economic one. By sidestepping the significant capital expenditure and high operational costs of dedicated GPUs, organizations can dramatically lower the total cost of ownership (TCO) for deploying AI. This business case is validated by strong market growth projections. According to MarketsandMarkets, the Edge AI software market is set to expand from $1.1 billion in 2023 to $4.5 billion by 2028, a compound annual growth rate of 31.8%, a trend driven by demand for real-time insights. This is part of a larger boom in the edge computing market, which is projected to grow on the back of IoT device proliferation and 5G network rollouts.
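The quoted growth rate follows the standard compound annual growth rate (CAGR) definition over the five years from 2023 to 2028. As a rough consistency check using the rounded figures above (a worked calculation, not additional market data):

$$
\mathrm{CAGR} = \left(\frac{V_{2028}}{V_{2023}}\right)^{1/5} - 1,
\qquad
V_{2023}\,(1 + 0.318)^{5} \approx 1.1 \times 3.977 \approx 4.37 \ \text{billion USD}
$$

Compounding the 2023 base at 31.8% lands close to the projected $4.5 billion; the small gap is consistent with the published endpoints having been rounded to one decimal place.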

This expansion is fueled by tangible applications across key sectors. In manufacturing, CPU-based AI on Linux controllers enables predictive maintenance and quality control without expensive, ruggedized GPUs. Retailers use it to power in-store analytics on low-cost edge servers, while the healthcare industry can deploy AI on portable medical devices for on-the-spot analysis where data privacy is paramount. While high-end GPUs still hold a performance advantage for massive-scale workloads, as NVIDIA’s TensorRT-LLM demonstrates, the vast market for cost-sensitive, real-time edge applications provides a substantial commercial opportunity.

Beyond the Core: Computing’s Next Frontier

The launch of Lumina AI’s RCL 2.7.0 is a significant development that underscores the maturity and commercial viability of the CPU-based inference market. It gives developers another tool for applications where cost, power efficiency, and hardware ubiquity are the primary design constraints. Academic research on edge processing points toward hybrid runtimes that intelligently orchestrate workloads across CPUs, integrated GPUs, and dedicated NPUs, and the long-term success of any runtime will depend on its ability to adapt to this heterogeneous computing future. As the hardware landscape continues to evolve, how will specialized runtimes differentiate themselves beyond pure CPU optimization?
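One simple way a hybrid runtime can orchestrate work is to place each operator on the highest-priority available device that supports it, with the CPU as the universal fallback; this is similar in spirit to how ONNX Runtime assigns graph nodes to a prioritized list of execution providers. The sketch below is a hypothetical, greedy illustration: the capability table, device names, and operators are invented for the example and do not describe RCL or any particular NPU.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Op:
    name: str
    kind: str  # operator type, e.g. "conv", "matmul", "softmax"


# Hypothetical capability table: the operator kinds each device can run.
# None marks the CPU as the universal fallback that can run anything.
SUPPORTED = {
    "npu": {"conv", "matmul"},
    "igpu": {"conv", "matmul", "softmax"},
    "cpu": None,
}


def assign_devices(ops, priority=("npu", "igpu", "cpu"), available=("npu", "cpu")):
    """Greedy per-operator placement: first available device that supports it."""
    plan = {}
    for op in ops:
        for device in priority:
            if device not in available:
                continue
            kinds = SUPPORTED[device]
            if kinds is None or op.kind in kinds:
                plan[op.name] = device
                break
        else:
            raise ValueError(f"no available device can run {op.name!r}")
    return plan


graph = [
    Op("conv1", "conv"),
    Op("attn_softmax", "softmax"),
    Op("proj", "matmul"),
    Op("nms", "nms"),  # an op no accelerator in the table supports
]
print(assign_devices(graph))
# {'conv1': 'npu', 'attn_softmax': 'cpu', 'proj': 'npu', 'nms': 'cpu'}
```

A greedy per-operator policy ignores the cost of moving tensors between devices; a more realistic scheduler would weigh those transfers, which is one place where runtimes could differentiate themselves.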
