MoE & Llama 3: The Tech Behind Pluto Labs AI Cost Efficiency

The artificial intelligence industry, long defined by a “bigger is better” ethos, is undergoing a fundamental realignment. While frontier models with hundreds of billions of parameters dominate headlines, a new wave of development is showing that strong performance does not require astronomical cost. This efficiency-first shift is industry-wide: recent releases from Mistral AI, Meta, and Anthropic show carefully engineered models matching or exceeding the capabilities of previous-generation flagships at a fraction of the computational and financial cost. The shift marks a significant maturation of the AI landscape, driven by unsustainable training expenses and a growing demand for practical, deployable intelligence.

Key Points

• Mixture-of-Experts (MoE) architectures, used by models like Mistral’s Mixtral 8x7B, enable larger knowledge bases with the inference speed and cost of much smaller models by activating only ~12.9B of 46.7B total parameters per token.

• Current implementations demonstrate massive cost differentials; Anthropic’s Claude 3 Haiku is priced at $0.25 per million input tokens, a 60x reduction from its flagship Opus model ($15.00), enabling high-throughput applications.

• Meta’s Llama 3 70B model achieves an 82.0% MMLU score, competitive with prior-generation flagship models, while the smaller Llama 3 8B is compact enough to run on consumer-grade hardware.

• Technical advancements in model compression, such as quantization, can reduce a model’s memory footprint by up to 75%, directly lowering operational costs and enabling on-device deployment.

The $100 Million Model Paradox

For years, the path to AI progress was a straight line: more data and more parameters. This paradigm, however, has led to an economic and energetic reckoning. Training a model like GPT-4 is estimated to have cost over $100 million, with research from Epoch AI showing that the compute used for training top models has doubled every 10 months since 2010—a rate that makes Moore’s Law look sluggish.
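To make the doubling rate concrete, the short sketch below compounds a fixed doubling period over several years. The 10-month period is the figure quoted above; the 24-month period used for Moore's Law is an assumption chosen for comparison (a commonly cited reading), and the function name is illustrative.

```python
def growth_factor(months: float, doubling_months: float) -> float:
    """Multiplicative growth after `months` given a fixed doubling period."""
    return 2.0 ** (months / doubling_months)

# Doubling periods in months. The 10-month figure is the one quoted in the
# article; 24 months is an assumed value for Moore's Law, for comparison only.
TRAINING_COMPUTE_DOUBLING = 10.0
MOORES_LAW_DOUBLING = 24.0

if __name__ == "__main__":
    for years in (1, 5, 10):
        months = 12 * years
        compute = growth_factor(months, TRAINING_COMPUTE_DOUBLING)
        moore = growth_factor(months, MOORES_LAW_DOUBLING)
        print(f"{years:>2} yr: training compute x{compute:,.0f}, "
              f"Moore's Law x{moore:,.0f}")
```

Under these assumptions, a decade of 10-month doublings is twelve doublings, while a decade of 24-month doublings is only five.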

This escalating cost structure creates immense barriers to entry. More critically, venture capital firm Andreessen Horowitz (a16z) highlights in its analysis of AI costs that inference, the day-to-day running of a model, can account for up to 90% of its total lifecycle cost. This economic pressure has created a powerful incentive to develop high-performance, low-cost AI models, shifting the industry’s focus from sheer scale to computational efficiency.

This shift is validated by the explosion of open-source and efficient models. Hugging Face, whose model hub hosts over 500,000 models, maintains the Open LLM Leaderboard, which shows fierce competition among smaller, highly tuned models. That competition is evidence that innovation is no longer the exclusive domain of a few heavily funded labs.

Sparse Activation: The Efficiency Multiplier

The impressive performance-to-cost ratios of this new generation are not magic; they are the result of specific architectural and compression innovations. An AI breakthrough in this space is less about a single “eureka” moment and more about the clever application of established computer science principles.

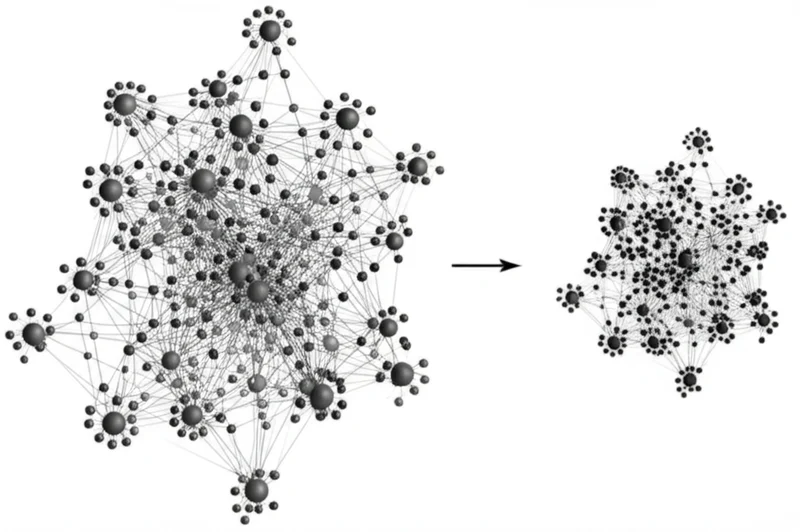

The most significant of these is the Mixture-of-Experts (MoE) architecture. As explained by Mistral AI for its Mixtral 8x7B model, this design uses multiple smaller “expert” sub-networks. A “router” directs each token to only the two most relevant experts. As a result, the model can hold the knowledge of 46.7 billion parameters while running at roughly the inference speed and cost of a much smaller 12.9 billion parameter model. This sparse activation is the core principle behind the efficiency of models from both Mistral and Google.
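The routing idea can be illustrated with a toy example. The following is a minimal sketch, not Mistral's implementation: the eight "experts" are hypothetical functions that just scale their input, the router scores are made up, and all function names are illustrative. It shows the one property that matters here, namely that only two of the eight experts run for a given token.

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts; normalise their weights to sum to 1."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(token, experts, router_logits, k=2):
    """Run only the selected experts and blend their outputs by router weight."""
    output = [0.0] * len(token)
    used = []
    for index, weight in top_k_route(router_logits, k):
        expert_out = experts[index](token)  # the other experts are never called
        output = [o + weight * e for o, e in zip(output, expert_out)]
        used.append(index)
    return output, used

def make_toy_experts(count=8):
    """Hypothetical experts: expert i simply scales its input by (i + 1)."""
    return [lambda vec, s=i + 1: [s * x for x in vec] for i in range(count)]

if __name__ == "__main__":
    experts = make_toy_experts()
    logits = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.5, 0.3]  # made-up router scores
    output, used = moe_forward([1.0, 2.0], experts, logits)
    print("experts used:", used)
    print("output:", output)
    # Share of Mixtral 8x7B parameters active per token, from the figures above.
    print(f"active share of parameters: {12.9 / 46.7:.1%}")
```

Note that 12.9B out of 46.7B is a little more than the 2-in-8 ratio of experts would suggest, because the parts of the model outside the expert blocks are shared and run for every token.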

Complementing MoE are compression techniques like quantization and pruning. Quantization reduces the numerical precision of the model’s weights (e.g., from 32-bit to 8-bit numbers), which can slash the memory footprint by up to 75% and accelerate calculations. This is how a powerful model becomes small enough to run on a local device instead of a massive data center.
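The 75% figure follows directly from the bit widths, and the mechanics can be sketched in a few lines. The example below assumes simple symmetric per-tensor 8-bit quantization, which is one of several schemes in use and not necessarily what any particular model ships with. The 8-billion parameter count is a round number used for illustration, and the function names are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]

def memory_gb(num_params, bits_per_weight):
    """Memory needed for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

if __name__ == "__main__":
    weights = [0.42, -1.0, 0.07, 0.0, 0.9]
    quantized, scale = quantize_int8(weights)
    restored = dequantize(quantized, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("quantized:", quantized)
    print(f"worst-case rounding error: {worst:.5f}")

    params = 8e9  # assumed round figure for an 8B-parameter model
    fp32, int8 = memory_gb(params, 32), memory_gb(params, 8)
    print(f"fp32: {fp32:.0f} GB, int8: {int8:.0f} GB, "
          f"saving: {1 - int8 / fp32:.0%}")
```

The trade-off is the rounding error shown in the first half: each weight is stored to within half a quantization step, which is why quantized models can lose a small amount of accuracy.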

60x Price Drop, 90% Performance Retained

The real-world benchmark results provide compelling evidence of this efficiency revolution. A direct comparison of performance and pricing reveals a dramatic divergence between the frontier models and the new efficiency-first contenders.

The data is stark. As seen in the table below, Anthropic’s flagship, Claude 3 Opus, scores an impressive 86.8% on the MMLU knowledge benchmark but costs $15.00 per million input tokens. Its sibling, Claude 3 Haiku, scores a very capable 75.2% on the same benchmark but costs just $0.25—a 60-fold price reduction. This isn’t a minor discount; it’s a fundamental change in accessibility.

Similarly, Mistral’s Mixtral 8x7B, while scoring a lower 70.6% MMLU, offers performance competitive with older flagship models at an API cost of just $0.70 per million tokens. The release of Meta’s Llama 3 70B, with its 82.0% MMLU score and low cost via third-party APIs, further solidifies this trend. These models deliver substantial capabilities at a price point that is 10x to 60x lower than the top-tier proprietary offerings, making high-performance AI economically viable for a much broader range of applications.
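The Opus and Haiku figures quoted above can be checked with simple arithmetic. In the sketch below, the dictionary keys are illustrative labels rather than official API model identifiers, and the one-billion-token monthly workload is a hypothetical chosen to make the gap tangible.

```python
# Figures quoted in the article: USD per million input tokens, and MMLU (%).
PRICE_PER_M_INPUT = {"claude-3-opus": 15.00, "claude-3-haiku": 0.25}
MMLU_SCORE = {"claude-3-opus": 86.8, "claude-3-haiku": 75.2}

def input_cost_usd(tokens, price_per_million):
    """Cost in USD of sending `tokens` input tokens at the given price."""
    return tokens / 1_000_000 * price_per_million

def price_ratio(expensive, cheap):
    """How many times more the expensive model costs per input token."""
    return PRICE_PER_M_INPUT[expensive] / PRICE_PER_M_INPUT[cheap]

def score_retained(reference, candidate):
    """Fraction of the reference model's MMLU score the candidate reaches."""
    return MMLU_SCORE[candidate] / MMLU_SCORE[reference]

if __name__ == "__main__":
    monthly_tokens = 1_000_000_000  # hypothetical workload
    for model, price in PRICE_PER_M_INPUT.items():
        cost = input_cost_usd(monthly_tokens, price)
        print(f"{model}: ${cost:,.2f} per month")
    print(f"price ratio: {price_ratio('claude-3-opus', 'claude-3-haiku'):.0f}x")
    retained = score_retained("claude-3-opus", "claude-3-haiku")
    print(f"MMLU retained: {retained:.1%}")
```

On these numbers Haiku retains about 87% of Opus's MMLU score. MMLU is a single benchmark, so the ratio is a rough indicator rather than a full measure of capability.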

Sources for data: Official pricing pages and technical reports from Anthropic, OpenAI, Mistral AI, and third-party API providers.

Breaking the Cloud Ceiling

The impact of this efficiency-first AI revolution extends beyond cost savings; it fundamentally reshapes the AI value chain. By creating powerful models that are less computationally demanding, companies are democratizing access to advanced AI and enabling entirely new categories of applications.

The existence of models like Meta’s Llama 3 8B, which can run effectively on consumer-grade hardware, shifts development from a purely cloud-centric model to one that includes on-device and edge computing. This enables applications with greater privacy, lower latency, and offline functionality. Furthermore, the speed of models like Anthropic’s Haiku, which can process 21,000 tokens per second, unlocks high-throughput tasks like real-time customer service analysis and live content moderation that were previously impractical with slower, more expensive models.
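A back-of-the-envelope calculation shows what that processing rate means in practice. This sketch assumes the quoted 21,000 tokens per second is sustained on a single stream and ignores output generation, network latency, and rate limits; the 500-token message size is a hypothetical.

```python
TOKENS_PER_SECOND = 21_000  # processing rate quoted in the article
SECONDS_PER_DAY = 86_400

def seconds_to_process(num_tokens, tokens_per_second=TOKENS_PER_SECOND):
    """Time to read `num_tokens` of input at a constant processing rate."""
    return num_tokens / tokens_per_second

def tokens_per_day(tokens_per_second=TOKENS_PER_SECOND):
    """Upper bound on input tokens processed in 24 hours at a constant rate."""
    return tokens_per_second * SECONDS_PER_DAY

if __name__ == "__main__":
    message_tokens = 500  # hypothetical customer-service message
    latency_ms = seconds_to_process(message_tokens) * 1000
    print(f"one {message_tokens}-token message: {latency_ms:.1f} ms")
    print(f"daily ceiling: {tokens_per_day():,} tokens")
    print(f"messages per day: {tokens_per_day() // message_tokens:,}")
```

Real deployments will land below this ceiling, but the order of magnitude explains why tasks like live moderation become practical.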

Intelligence That Scales, Not Just Grows

The emergence of high-performance, low-cost AI models represents a critical and pragmatic evolution in the field. This trend, driven by architectural innovation and economic necessity, is not a repudiation of frontier research but a vital complement to it. It broadens the base of developers who can build with AI and expands the scope of problems that can be solved efficiently. The focus is shifting from simply building the largest possible model to building the smartest, most efficient model for the task. As this computational leverage becomes more widespread, where will the next wave of innovation emerge—in the underlying architecture or in the applications it unlocks?
