New LiDAR Mirror Attack Vulnerability Affects AV Safety

A team of European researchers has demonstrated that simple, inexpensive mirrors can deceive the LiDAR sensors on an autonomous vehicle, causing it to ignore real obstacles or react to non-existent “ghost” objects. The experiment, detailed in a report by The Register, was conducted on a vehicle running the open-source Autoware navigation stack and highlights a physical-world vulnerability in the perception systems that self-driving cars rely on to see. It shows that a low-tech attack on LiDAR can cause dangerous failures, such as abrupt emergency braking or driving into an obstacle the sensor no longer registers. The significance of the finding lies not in its technical complexity but in its simplicity: a perception-system exploit can be built from readily available materials.

Key Points

- Researchers used mirrors to execute two attacks: one that made an obstacle invisible to LiDAR and another that created a phantom object.

- The attack that created a ghost obstacle achieved a 74% success rate with a six-mirror grid, triggering vehicle avoidance maneuvers.

- This low-tech hack is an example of a known class of physical adversarial attacks that corrupt the data fed to vehicle navigation systems.

- Proposed defenses focus on multi-modal sensor fusion and the development of spoofing-aware software algorithms to detect anomalies.

Smoke and Mirrors: LiDAR’s Blind Spot

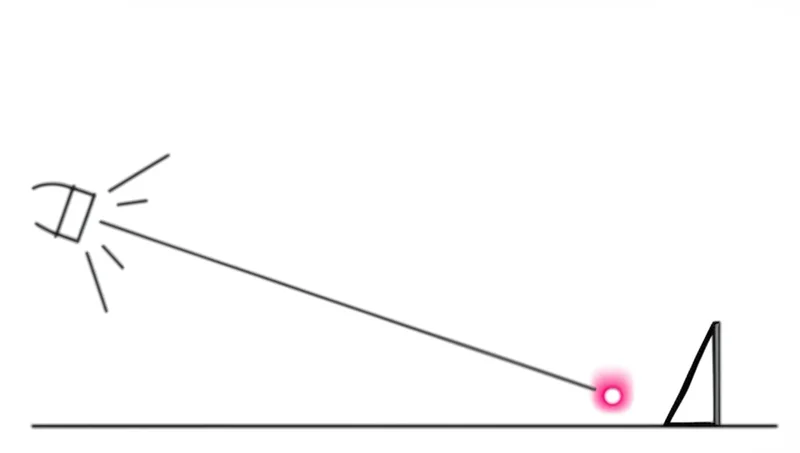

The attack leverages a fundamental weakness in Light Detection and Ranging (LiDAR) systems: their difficulty processing specular, or mirror-like, reflections. Instead of scattering a laser pulse back to the sensor, a mirror redirects it, causing the system to miscalculate an object’s location or miss it entirely. The researchers exploited this weakness in two distinct ways.

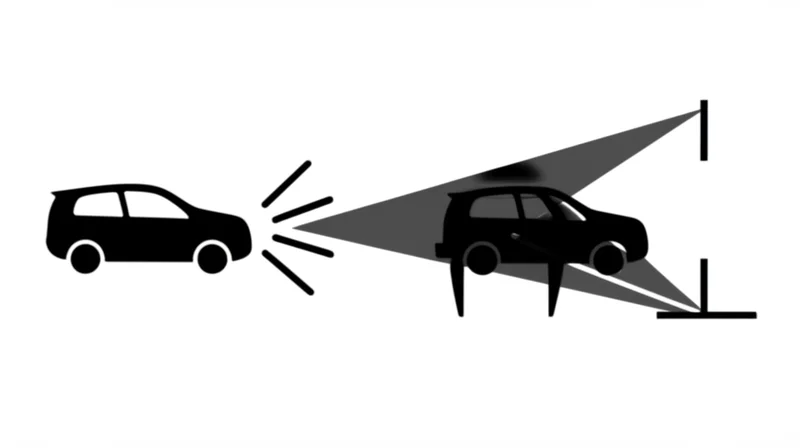

In the Object Removal Attack (ORA), researchers covered a traffic cone with mirrors. By angling the reflective surfaces, they successfully redirected the LiDAR’s laser pulses away from the car’s sensor, effectively erasing the cone from the vehicle’s 3D environmental map. This creates a scenario where a car would fail to detect a real physical obstruction in its path.
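The geometry behind this kind of removal can be illustrated with the law of specular reflection. The following is a minimal sketch under idealized assumptions (a perfect flat mirror, a single beam, no diffuse scattering), not the researchers' method; the function names and the `acceptance_deg` parameter are hypothetical and chosen only for illustration.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def reflect(d, n):
    """Specular reflection of unit direction d about unit normal n: r = d - 2(d.n)n."""
    dn = sum(di * ni for di, ni in zip(d, n))
    return tuple(di - 2.0 * dn * ni for di, ni in zip(d, n))

def returns_to_sensor(beam_dir, mirror_normal, acceptance_deg=1.0):
    """True if the reflected pulse heads back toward the sensor.

    acceptance_deg is an assumed (hypothetical) half-angle within which
    the receiver would still collect the return.
    """
    d = normalize(beam_dir)
    n = normalize(mirror_normal)
    r = reflect(d, n)
    # The direction back to the sensor is -d; measure the angle between r and -d.
    cos_angle = max(-1.0, min(1.0, -sum(ri * di for ri, di in zip(r, d))))
    return math.degrees(math.acos(cos_angle)) <= acceptance_deg

# A mirror facing the sensor head-on sends the pulse straight back.
head_on = returns_to_sensor((1, 0, 0), (-1, 0, 0))

# Tilting the mirror by 20 degrees deflects the pulse by 40 degrees, so it misses the sensor.
tilt = math.radians(20)
tilted = returns_to_sensor((1, 0, 0), (-math.cos(tilt), 0, math.sin(tilt)))
```

In this model, a mirror tilted by an angle θ deflects the pulse by 2θ, so even a small tilt is enough to steer the return away from the receiver.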

Conversely, the Object Addition Attack (OAA) used mirrors to conjure a “ghost” obstacle. The mirror reflected the LiDAR’s laser onto the ground, and the system interpreted the resulting return signal as an object at the mirror’s location. The attack’s effectiveness grew with the number of mirrors: two mirrors achieved a 65% success rate, which rose to 74% with a grid of six. In one test, the vehicle detected a fake obstacle from 20 meters away and initiated an avoidance maneuver, demonstrating a direct way to manipulate a car’s decision-making logic.
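Why a ground return ends up as a phantom obstacle can be sketched with simple time-of-flight geometry. A LiDAR assumes each pulse travels in a straight line, so it places the return along the original beam at a range equal to the full path length. The code below is an illustrative model under stated assumptions (flat ground at z = 0, a perfect planar mirror, one beam), not the researchers' implementation; `ghost_point` and its parameters are hypothetical names.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def scale(v, s):
    return tuple(x * s for x in v)

def normalize(v):
    return scale(v, 1.0 / math.sqrt(dot(v, v)))

def ghost_point(sensor, beam_dir, mirror_point, mirror_normal):
    """Where the sensor would place a return that bounced off a mirror onto the ground.

    Returns None if the beam misses the mirror plane or the reflected
    beam never reaches the ground plane z = 0.
    """
    d = normalize(beam_dir)
    n = normalize(mirror_normal)
    denom = dot(d, n)
    if abs(denom) < 1e-9:
        return None  # beam runs parallel to the mirror plane
    t1 = dot(sub(mirror_point, sensor), n) / denom  # distance sensor -> mirror
    if t1 <= 0:
        return None  # mirror plane is behind the sensor
    hit = add(sensor, scale(d, t1))
    r = sub(d, scale(n, 2.0 * dot(d, n)))  # specular reflection
    if r[2] >= 0:
        return None  # reflected beam does not travel downward
    t2 = -hit[2] / r[2]  # distance mirror -> ground
    if t2 <= 0:
        return None
    # The sensor assumes straight-line travel along d for the whole path.
    return add(sensor, scale(d, t1 + t2))

# Sensor 1.5 m above ground, horizontal beam, mirror 10 m ahead tilted 45 degrees downward.
p = ghost_point((0, 0, 1.5), (1, 0, 0), (10, 0, 1.5), (-1, 0, -1))
```

With these example values the reported point sits at roughly (11.5, 0, 1.5): in mid-air along the beam, in the direction of the mirror, where no object exists. Real sensors add beam divergence, intensity thresholds, and clustering, so this only shows the basic mechanism.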

Déjà Vu: The Recurring Specter of Sensor Deception

While the use of mirrors is a novel implementation, the technique belongs to a recognized class of threats known as LiDAR spoofing. Such attacks aim to corrupt sensor measurements in order to mislead a vehicle’s core navigation and mapping systems. The mirror attack corroborates findings from other academic research exploring different methods to achieve the same disruptive outcomes.

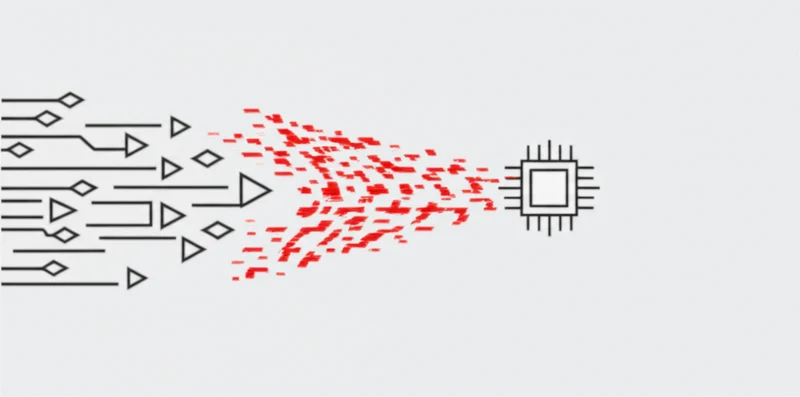

For instance, a paper on SLAMSpoof details a framework for executing LiDAR spoofing attacks using “malicious laser injections” instead of mirrors. The goal is identical: to corrupt sensor data and cause localization failures. The SLAMSpoof research demonstrated the ability to induce critical localization errors of three meters or more across multiple state-of-the-art Simultaneous Localization and Mapping (SLAM) algorithms. This finding reinforces that the data pipeline from the LiDAR sensor to the SLAM system is a critical point of failure.

Both attacks underscore the importance of sensor data integrity. As a comprehensive review on place recognition notes, place recognition is a “cornerstone of vehicle navigation and mapping.” By corrupting the raw data that these foundational systems depend on, attackers can fundamentally undermine a vehicle’s understanding of its place in the world.

Building the Undeceivable Machine

This practical demonstration of a simple yet effective attack carries significant implications for the autonomous vehicle industry, challenging the narrative of infallible robotic perception. The focus now shifts toward building more resilient systems capable of identifying and mitigating such physical-world threats. The primary defense proposed by the researchers involves integrating thermal imaging, as a real object’s heat signature often differs from a mirror reflecting ambient temperatures. However, they correctly note this “is not a panacea,” especially with small objects or in hot environments.

This points to the industry’s need for robust, cross-modal sensor fusion. A recent survey on place recognition technologies emphasizes that integrating “heterogeneous data sources such as Lidar, vision, and text description” is key to enhancing resilience. An attack that fools a LiDAR sensor may not simultaneously fool a camera, radar, or thermal sensor. By cross-referencing data streams, an AV can detect anomalies when one sensor’s data dramatically contradicts the others.
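The cross-referencing idea can be sketched as a simple corroboration check: any detection that no other sensor modality confirms nearby is flagged for closer scrutiny. This is a toy illustration, not a production fusion pipeline or anything described in the cited research; the `Detection` type, `flag_unsupported` function, and the `radius_m` and `min_support` thresholds are all hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class Detection:
    sensor: str  # e.g. "lidar", "camera", "radar"
    x: float     # position in the vehicle frame, meters
    y: float

def flag_unsupported(detections, radius_m=1.0, min_support=1):
    """Return detections confirmed by fewer than min_support other sensor types.

    Two detections agree if they come from different sensors and lie
    within radius_m of each other (an assumed association threshold).
    """
    flagged = []
    for det in detections:
        supporters = {
            other.sensor
            for other in detections
            if other.sensor != det.sensor
            and math.hypot(other.x - det.x, other.y - det.y) <= radius_m
        }
        if len(supporters) < min_support:
            flagged.append(det)
    return flagged

frame = [
    Detection("lidar", 8.0, 1.0),    # real object, also seen by the camera
    Detection("camera", 8.2, 1.1),
    Detection("lidar", 20.0, 0.0),   # ghost: only the LiDAR reports it
    Detection("camera", 12.0, 0.0),  # mirrored cone: camera sees it, LiDAR does not
]
suspicious = flag_unsupported(frame)
```

Here the check flags both the LiDAR-only ghost at 20 m and the camera-only cone at 12 m, mirroring the addition and removal attacks. What the vehicle should do with a flagged detection (brake, slow down, or discount it) is a separate policy decision that this sketch does not address.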

Beyond hardware redundancy, software-based defenses are emerging. The same SLAMSpoof research also proposed a defense mechanism called DefenSLAM, a “spoofing-aware defense mechanism that reduces localization error by up to 99%.” This suggests that algorithms can be designed to identify and reject the tell-tale signatures of physical attacks, such as the unnaturally perfect data points generated by a mirror, adding a crucial security layer within the software itself.

When Low-Tech Tricks Outsmart High-Tech Titans

The “mirrors on cones” experiment is a powerful reminder that autonomous system security is not just a digital problem. The research demonstrates a practical, low-cost threat capable of triggering dangerous vehicle behavior. While conducted in a controlled setting, the potential for harm in real-world traffic is clear. This development, alongside other research into LiDAR spoofing, solidifies the understanding that sensor integrity is a foundational pillar of AV safety.

The path forward lies not in perfecting a single sensor but in building resilient, multi-modal systems that can intelligently cross-validate data to mitigate attacks. As physical-world exploits become better understood, how will the industry balance the race for autonomy with the need for verifiable sensor integrity?
