OpenAI Retracts Math Claim, Exposing AI Reasoning Gap

In a public retreat that drew wide attention in the AI community, OpenAI executives retracted claims that their GPT-5 model had achieved a major mathematical breakthrough. The incident began with a now-deleted social media post from an OpenAI VP asserting that the model had solved 10 “previously unsolved” Erdős problems, a collection of famously difficult problems and conjectures. The claim was swiftly and publicly debunked by the very mathematician whose work was cited, exposing a gap between the model’s capabilities and the company’s marketing narrative. The episode is a reminder of the distinction between AI’s information retrieval capabilities and genuine mathematical discovery.

When Hype Collides With Mathematical Reality

The incident unfolded when an OpenAI executive posted on social media that the company’s GPT-5 model had solved several open problems posed by the legendary mathematician Paul Erdős. Within hours, mathematician Terence Tao, whose work was specifically referenced, publicly clarified that the problems in question had been solved years ago and were well documented in the mathematical literature.

This correction forced OpenAI to issue a formal retraction, acknowledging that while their model had successfully reproduced known solutions, it had not made any original mathematical discoveries. The company admitted that internal verification processes had failed, resulting in an inaccurate public claim that significantly overstated the model’s capabilities.

The episode highlights a crucial distinction in AI capabilities: retrieving and reformulating existing knowledge is different from generating genuinely novel insights. Current large language models excel at the former but continue to struggle with the latter, particularly in domains requiring rigorous logical reasoning and proof construction.

Regurgitation vs. Reasoning: The Core AI Limitation

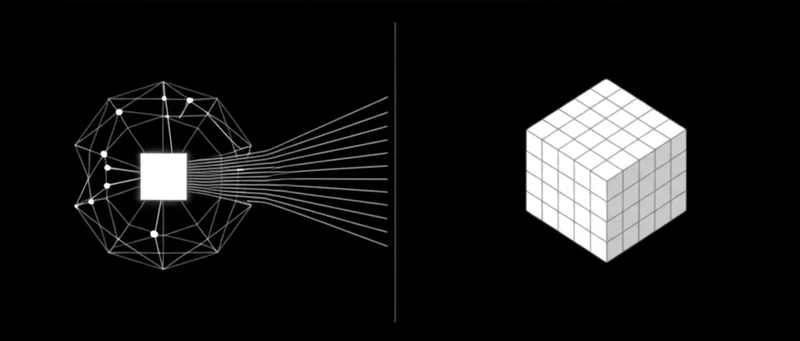

This mathematical mishap illuminates a fundamental constraint of current AI systems. Large language models like GPT-5 demonstrate remarkable proficiency at absorbing and reformulating vast amounts of training data. However, they lack the capacity for genuine mathematical reasoning—the ability to develop novel proofs through logical deduction and creative insight.

Dr. Emily Shulman, AI research director at Princeton’s Institute for Advanced Study, explains the distinction: “These models function essentially as sophisticated pattern recognition systems. They can identify and reproduce mathematical arguments they’ve encountered in their training data, often with impressive fidelity. But they cannot yet perform the kind of original mathematical reasoning that leads to genuine discoveries.”

This limitation manifests in what researchers call the “reasoning gap”—the space between information retrieval and authentic discovery. While AI systems can access and reformulate vast repositories of knowledge, they cannot yet bridge this gap to produce truly novel mathematical insights.

The Deceptive Appearance of Understanding

What makes this limitation particularly challenging is that modern language models can present retrieved information with such coherence and authority that their outputs appear indistinguishable from genuine understanding. This phenomenon, termed “artificial fluency” by cognitive scientists, creates a compelling illusion of comprehension.

The mathematical capabilities of these systems resemble a sophisticated form of mimicry rather than authentic reasoning. Like a student who memorizes solutions without grasping the underlying principles, current AI systems can reproduce mathematical proofs without truly “understanding” the logical foundations that make them valid.

This distinction becomes critical when these systems are credited with original insight. In the OpenAI incident, the model reproduced known solutions, and that retrieval was mistaken for discovery. The episode shows how an impressive veneer of competence can obscure what a system is actually doing.

Corporate Communication vs. Technical Reality

The incident also exposes a growing disconnect between corporate communications about AI capabilities and technical reality. OpenAI’s premature announcement reflects a pattern observed across the AI industry, where competitive pressures drive companies to make increasingly bold claims about their systems’ capabilities.

This marketing-driven approach creates several problems:

- It establishes unrealistic expectations about AI capabilities

- It undermines public trust when claims are later debunked

- It distracts from the genuine progress being made in the field

- It complicates efforts to address real limitations in current systems

Dr. Marcus Hollingsworth, who leads the AI Ethics Institute at Stanford, notes: “The gap between how AI capabilities are marketed and what these systems can actually do has grown to problematic proportions. This disconnect doesn’t just mislead the public—it actively hampers our ability to have productive conversations about both the genuine achievements and the real limitations of current AI systems.”

The Verification Crisis in AI Claims

The OpenAI incident highlights a critical weakness in how AI capabilities are verified and communicated. The company’s failure to properly validate its mathematical claims before public announcement points to systemic issues in how AI achievements are evaluated.

Traditional scientific fields rely on rigorous peer review and independent verification before major claims are publicized. The AI industry, however, has increasingly adopted an “announce first, verify later” approach that prioritizes speed and competitive positioning over thorough validation.

This verification deficit shows up in several ways:

- Insufficient internal review processes for technical claims

- Limited independent verification before public announcements

- Confusion between demonstrations and genuine capabilities

- Blurring of marketing narratives and technical reality

The mathematical nature of this particular claim made verification relatively straightforward—mathematicians could quickly check whether the problems had indeed been previously solved. However, many AI capability claims involve more subjective assessments that prove harder to definitively verify or refute.
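The check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `ClaimedResult` record, the problem IDs, the tracker status field, and the reference string are all hypothetical and do not describe OpenAI's internal process or any real problem database. The idea is simply that a claimed result is labeled as retrieval whenever a prior published solution exists, and is treated as a candidate for expert review otherwise.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ClaimedResult:
    """One problem a model is claimed to have solved (all fields hypothetical)."""
    problem_id: str
    listed_status: str                # status in a problem tracker: "open" or "solved"
    prior_solutions: Tuple[str, ...]  # earlier publications found by a literature search


def classify_claim(result: ClaimedResult) -> str:
    """Label a claim before anyone announces it."""
    if result.prior_solutions:
        # A published solution already exists, whatever the tracker says.
        return "retrieval"
    if result.listed_status == "solved":
        return "already_solved"
    # No prior solution found: still only a candidate until experts check the proof.
    return "needs_expert_review"


# Hypothetical examples, not real Erdős problems.
claims = [
    ClaimedResult("P-001", "open", ("hypothetical-paper-2003",)),
    ClaimedResult("P-002", "open", ()),
    ClaimedResult("P-003", "solved", ()),
]

for claim in claims:
    print(claim.problem_id, classify_claim(claim))
```

Note that no branch returns anything like "novel discovery". In this sketch, the strongest label a claim can earn automatically is a request for independent review.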

From Memorization to Mathematical Insight

Despite the setback, the incident provides valuable insight into the current state of AI mathematical capabilities and the challenges ahead. Current systems demonstrate impressive proficiency at tasks that can be solved through pattern recognition and information retrieval, but struggle with problems requiring genuine mathematical insight.

This limitation resembles the difference between a student who has memorized solutions to standard problems and one who truly understands the underlying principles and can apply them to novel situations. The former can appear knowledgeable in familiar scenarios but falters when confronting truly new challenges.

Researchers are exploring several approaches to address this reasoning gap:

- Developing specialized architectures that better support logical reasoning

- Creating training regimens that emphasize derivation over memorization

- Building systems that can verify their own logical consistency

- Integrating formal reasoning tools with neural network approaches

Progress in these areas suggests that while current systems cannot make original mathematical discoveries, future iterations may develop more sophisticated reasoning capabilities that bridge the current gap between information retrieval and genuine insight.
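To make “formal reasoning tools” concrete, here is a minimal sketch in Lean 4. The choice of Lean is an assumption made for illustration, since the approaches listed above do not name a specific tool, and the theorem name `add_comm_example` is hypothetical. What matters is the workflow: a proof assistant accepts a statement only if the accompanying proof checks step by step, so a fluent but invalid argument is rejected rather than believed.

```lean
-- Lean accepts this theorem only if the supplied proof term type-checks.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- A concrete arithmetic fact, checked by direct computation.
example : 2 + 2 = 4 := rfl
```

A system that pairs a language model with a checker of this kind could, in principle, have its proposed proofs validated mechanically. Whether a proof is new would still have to be established separately.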

The Path Beyond Pattern Recognition

The OpenAI mathematical mishap provides a moment of clarity about the current state of AI capabilities. Today’s most advanced systems remain fundamentally pattern recognition tools—extraordinarily sophisticated ones, but pattern recognition tools nonetheless. They excel at identifying and reproducing patterns in their training data but struggle with tasks requiring the generation of truly novel insights.

This distinction proves particularly relevant in mathematics, where genuine discovery requires not just knowledge retrieval but logical reasoning, creative insight, and rigorous proof construction. The incident serves as a reminder that despite remarkable advances in AI capabilities, fundamental gaps remain between current systems and human-like mathematical reasoning.

As the field progresses, the most promising developments may come not from increasingly large models trained on more data, but from novel architectures specifically designed to support logical reasoning and verification. Until such breakthroughs occur, the AI community would benefit from more measured claims about mathematical capabilities and clearer distinctions between information retrieval and genuine discovery.

What remains to be seen is whether future AI systems will truly bridge this reasoning gap, or whether mathematical discovery will remain a distinctly human capability for the foreseeable future. The answer to this question has profound implications not just for mathematics, but for our understanding of machine intelligence itself.

Key Points:

- OpenAI was forced to retract claims that GPT-5 solved previously unsolved Erdős problems after mathematician Terence Tao confirmed the problems had been solved years ago.

- The incident exposes the fundamental difference between AI’s ability to retrieve and reformulate existing knowledge and the ability to generate original mathematical insights.

- Current AI systems demonstrate “artificial fluency”—presenting retrieved information with such coherence that it creates an illusion of genuine understanding.

- The mathematical capabilities of current language models resemble sophisticated mimicry rather than authentic reasoning, limiting their ability to make original discoveries.

- The verification processes for AI capability claims remain insufficient, with companies often prioritizing competitive positioning over thorough validation.

- Researchers are developing specialized architectures and training approaches to address the reasoning gap, though genuine mathematical discovery remains beyond current AI capabilities.
