Zhipu GLM-4 Gets Agent Skills: ‘All Tools’ for Autonomy

Zhipu AI, a prominent Chinese AI firm, has launched its GLM-4 family of models, an open-weight competitor that directly challenges proprietary systems like OpenAI’s GPT-4. The release is headlined by “All Tools,” an advanced function-calling system that lets the model act as an autonomous agent: GLM-4 interprets complex user intent, selects appropriate tools such as a code interpreter or web browser, and executes them to complete a task without the developer scripting each step. Backed by benchmark scores that rival or exceed those of top-tier models, the GLM-4 series makes high-performance, agentic AI more accessible to developers and researchers globally.

Key Points

• Zhipu AI has released its GLM-4 model series, featuring a 128k context window and a powerful bilingual architecture.

• The release’s core innovation is “All Tools,” a feature that gives GLM-4 autonomous agent capabilities by letting it independently select and run tools such as a code interpreter and a web browser.

• Benchmark data shows GLM-4 challenging GPT-4, scoring 88.7 on MMLU and a class-leading 85.7 on the HumanEval code generation test.

• The model is released under an “open-weight” GLM-4 Community License, providing broad research and commercial access with some restrictions.

Autonomous Orchestration Unleashed

The GLM-4 series is built on a dense, autoregressive transformer architecture designed for both performance and complex reasoning. Its extensive 128k token context window allows it to process and analyze vast amounts of information, from lengthy documents to intricate conversational histories. According to its official research paper, the model was trained on a high-quality, multilingual dataset with a strong emphasis on English and Chinese, alongside a significant volume of code to bolster its logical and programming skills.

The most notable technical advancement is the “All Tools” capability. This system moves beyond simple function calling by allowing the model to autonomously orchestrate a workflow. For a complex query, GLM-4 can decide to use its code interpreter for calculations, browse the web for current information, and synthesize the findings into a single, coherent response. This built-in agentic foundation positions the model as a platform for sophisticated automation, a key direction for the entire AI industry.
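To make the orchestration idea concrete, here is a minimal Python sketch of a tool-dispatch loop. It does not use Zhipu AI’s API and does not reflect GLM-4’s internals: the `plan` function is a hypothetical keyword-based stand-in for the model’s own tool selection, and `code_interpreter`, `web_browser`, `ToolCall`, and `run_agent` are illustrative names, not part of any real library.

```python
import ast
import operator
import re
from dataclasses import dataclass
from typing import Callable, Dict, List

# Arithmetic operators the toy "code interpreter" is allowed to evaluate.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def _eval_node(node: ast.AST) -> float:
    """Evaluate a parsed arithmetic expression without calling eval()."""
    if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        return _OPS[type(node.op)](_eval_node(node.left), _eval_node(node.right))
    raise ValueError("unsupported expression")


def code_interpreter(expression: str) -> str:
    """Hypothetical tool: evaluates simple arithmetic only."""
    return str(_eval_node(ast.parse(expression, mode="eval").body))


def web_browser(query: str) -> str:
    """Hypothetical tool: a real one would fetch pages; this returns a stub."""
    return f"[stub search result for: {query}]"


# Registry mapping tool names to callables.
TOOLS: Dict[str, Callable[[str], str]] = {
    "code_interpreter": code_interpreter,
    "web_browser": web_browser,
}


@dataclass
class ToolCall:
    tool: str
    argument: str


def plan(query: str) -> List[ToolCall]:
    """Stand-in for the model's tool selection (keyword rules, not a learned policy)."""
    calls: List[ToolCall] = []
    match = re.search(r"\d[\d\s.+\-*/]*\d", query)
    if match and re.search(r"[+\-*/]", match.group()):
        calls.append(ToolCall("code_interpreter", match.group()))
    if any(word in query.lower() for word in ("latest", "current", "today")):
        calls.append(ToolCall("web_browser", query))
    return calls


def run_agent(query: str) -> str:
    """Execute every planned tool call, then merge the observations into one reply."""
    observations = [
        f"{call.tool}: {TOOLS[call.tool](call.argument)}" for call in plan(query)
    ]
    return "\n".join(observations) if observations else "No tool needed."
```

Calling `run_agent("What is 128 * 1000, and what is the latest GLM-4 news?")` routes the arithmetic to the interpreter stub and the rest to the browser stub. In a real agentic model the planning step is performed by the model itself, which is the part “All Tools” is described as automating.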

Benchmark Battles: Numbers Speak

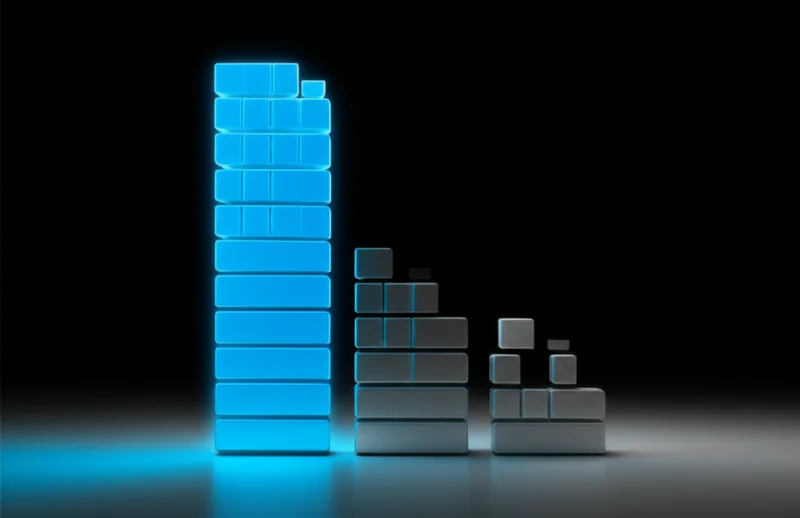

Zhipu AI substantiates its claims with rigorous benchmark testing, demonstrating that GLM-4’s performance is highly competitive with both leading open and proprietary models. The results indicate that the performance gap between open-weight and closed-source systems continues to narrow significantly. On the MMLU benchmark, which measures general knowledge across 57 subjects, GLM-4 achieves a score of 88.7, comparable to the original GPT-4’s reported score of 86.4.

Its mathematical reasoning is also strong, with a score of 91.8 on the GSM8K benchmark. Its performance on the HumanEval benchmark for Python code generation is particularly impressive: GLM-4 scores 85.7, surpassing the reported scores for GPT-4 (67.0) and Meta’s Llama 3 70B (81.7). Furthermore, its long-context capabilities are supported by nearly 100% accuracy on a long-context retrieval test, indicating that it can reliably retrieve information from across its full 128k context window. The family also includes GLM-4V, a vision-language model designed to compete with GPT-4V on multimodal tasks.
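For readers who want the gaps spelled out, the short Python snippet below computes GLM-4’s margin over each comparison model using only the scores quoted in this article. The `SCORES` table and the `margins` helper are illustrative names introduced here, and the figures are the reported ones, not independently verified results.

```python
# Scores as quoted in this article: benchmark -> {model: score}.
SCORES = {
    "MMLU": {"GLM-4": 88.7, "GPT-4": 86.4},
    "HumanEval": {"GLM-4": 85.7, "GPT-4": 67.0, "Llama 3 70B": 81.7},
}


def margins(benchmark: str, baseline: str = "GLM-4") -> dict:
    """Return the baseline's lead, in points, over every other model on a benchmark."""
    scores = SCORES[benchmark]
    return {
        model: round(scores[baseline] - score, 1)
        for model, score in scores.items()
        if model != baseline
    }


for name in SCORES:
    print(name, margins(name))
```

On these figures the lead is 2.3 points over GPT-4 on MMLU, and 18.7 and 4.0 points over GPT-4 and Llama 3 70B respectively on HumanEval.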

Democratizing Digital Autonomy

The GLM-4 “All Tools” release is a strategic move within the global AI landscape, affecting both the open-source debate and the race toward AI agents. While not released under a conventional open-source license, its “open-weight” approach broadens access. The models are available on Hugging Face under the GLM-4 Community License, which permits broad research and commercial use with some restrictions, fueling innovation outside of closed, proprietary ecosystems.

This development, highlighted by outlets like the South China Morning Post as part of Beijing’s AI self-sufficiency drive, solidifies Zhipu AI’s standing as a global contender. The company’s progress is supported by significant investment, with a valuation reported by TechCrunch to be approaching $3 billion in early 2024. By integrating advanced agentic features directly into a high-performing base model, Zhipu AI is not just competing on benchmarks; it is actively shaping the trajectory toward more capable and autonomous AI systems, and signaling a notable shift in the competitive landscape.

Open Weights, Rising Expectations

The arrival of Zhipu AI’s GLM-4 family marks a pivotal moment for openly accessible AI. By achieving performance parity with established leaders and introducing sophisticated agentic capabilities like “All Tools,” GLM-4 has raised the bar for what developers can expect from non-proprietary models. This release moves the conversation from simply catching up on benchmarks to innovating on core functionality. It provides the global developer community with a powerful new foundation for building the next generation of automated applications. As these advanced capabilities become more widespread, what new forms of complex problem-solving will become standard for AI assistants?
