Google Introduces Gemma 3 Open-Source AI Model with 128K Context Window

In a move that could reshape the AI landscape, Google has released Gemma 3, the latest generation of its open-source Gemma models. This lightweight yet powerful model family offers multimodal processing, support for over 140 languages, and an expanded context window of up to 128,000 tokens.

With the generative AI market reaching $25.6 billion in 2024 and projected to approach $50 billion in 2025, Gemma 3 arrives as a formidable competitor to other open-source models such as Meta’s Llama-405B. In early human-preference evaluations, Gemma 3 has already outperformed several of its rivals.

Breaking New Ground: Gemma 3’s Capabilities

Far from being an incremental update, Gemma 3 marks a significant step in Google’s effort to democratize AI. As the Google Developers Blog highlights, the model supports over 140 languages, which makes it usable by developers building for a global audience.

A key feature of Gemma 3 is its multimodal architecture: the 4B, 12B, and 27B variants accept both images and text as input, while the smallest 1B variant is text-only. A single model can therefore handle tasks that mix the two, such as answering questions about a photo, a chart, or a scanned document.

Under the hood, Gemma 3 combines two efficiency techniques. Grouped-query attention lets several query heads share a single set of key and value heads, and sliding-window attention restricts some layers to a fixed span of recent tokens. Both reduce the memory and compute needed at inference time, which matters most for long inputs.
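To make the sliding-window idea concrete, here is a toy attention mask in plain Python. It is a minimal sketch, not Gemma 3’s implementation: the sequence length and window size are arbitrary illustrative values, and real models apply such masks to tensors of attention scores rather than Python lists.

```python
from __future__ import annotations


def sliding_window_causal_mask(seq_len: int, window: int) -> list[list[bool]]:
    """mask[i][j] is True when query position i may attend to key position j.

    Causal rule: a token never sees later tokens (j <= i).
    Window rule: it only sees the `window` most recent positions, itself included
    (i - j < window). Setting window >= seq_len gives ordinary causal attention.
    """
    return [
        [j <= i and i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]


def keys_visible_per_query(mask: list[list[bool]]) -> list[int]:
    """Number of key positions each query position can attend to."""
    return [sum(row) for row in mask]


if __name__ == "__main__":
    full = sliding_window_causal_mask(seq_len=8, window=8)   # plain causal attention
    local = sliding_window_causal_mask(seq_len=8, window=3)  # sliding-window attention
    print(keys_visible_per_query(full))   # [1, 2, 3, 4, 5, 6, 7, 8]
    print(keys_visible_per_query(local))  # [1, 2, 3, 3, 3, 3, 3, 3]
```

With full causal attention, the number of keys each token looks at keeps growing with its position; with a window it levels off at `window`, which is why windowed layers stay cheap on long inputs.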

For developers working with limited resources, Google has released official quantized versions that substantially reduce the model’s memory footprint with little loss of accuracy. Gemma 3 runs on a range of hardware, including NVIDIA and AMD GPUs as well as standard CPUs.
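The savings from quantization follow from simple arithmetic: weight storage is roughly the parameter count times the bits per weight. The sketch below applies that to the nominal 27 billion parameters of the largest Gemma 3 variant. It is an estimate under stated assumptions: it ignores activations, the attention cache, runtime overhead, and the scale factors that quantized formats also store, and the bit widths shown are generic examples rather than a list of Google’s published formats.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    params = 27e9  # nominal parameter count of the 27B variant
    for label, bits in [("16-bit (bf16)", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label}: ~{weight_memory_gb(params, bits):.1f} GB")
    # 16-bit (bf16): ~54.0 GB
    # 8-bit: ~27.0 GB
    # 4-bit: ~13.5 GB
```

By this estimate, the 4-bit weights of the 27B model alone would fit in the 24 GB of memory on a high-end consumer GPU, whereas the 16-bit weights would not.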

“The ease of integration with existing tools is a major advantage,” notes Dr. Anya Sharma, a leading AI researcher. “It lowers the barrier to entry for developers and encourages experimentation.”

Gemma 3’s weights are released under Google’s Gemma Terms of Use, which permit commercial use, and the model integrates smoothly with popular frameworks including Hugging Face Transformers, JAX, and PyTorch, allowing developers to incorporate it into existing workflows with minimal friction.

The 128K Context Window: Opening New Possibilities

Perhaps the most significant upgrade in Gemma 3 is its expanded context window. As noted in the Google Developers Blog, the model accepts up to 128,000 tokens (the 1B variant is limited to 32,000), a sixteen-fold increase over the 8,000-token window of Gemma 2.

This larger window lets Gemma 3 take in a long report, a lengthy contract, or a substantial part of a codebase in a single prompt and draw on information from anywhere in that input, instead of working through it in separate chunks.
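Long contexts are not free: during generation a model keeps the keys and values of past tokens in a cache whose size grows with context length. The estimate below uses a hypothetical configuration (48 layers, 8 key/value heads of dimension 128, 16-bit values); these numbers are illustrative assumptions, not Gemma 3’s published architecture. It also shows how caching only a short window in most layers, as sliding-window attention allows, changes the total.

```python
from __future__ import annotations


def kv_cache_gb(seq_len: int, num_layers: int, num_kv_heads: int, head_dim: int,
                bytes_per_value: int = 2, local_layers: int = 0,
                window: int | None = None) -> float:
    """Approximate key/value cache size for one sequence, in GB (1e9 bytes).

    Global layers cache every token in the context; each of the `local_layers`
    sliding-window layers caches at most `window` tokens.
    """
    if local_layers and window is None:
        raise ValueError("window is required when local_layers > 0")
    # One key vector and one value vector per KV head, per token, per layer.
    bytes_per_token_per_layer = 2 * num_kv_heads * head_dim * bytes_per_value
    global_layers = num_layers - local_layers
    local_tokens = min(seq_len, window) if local_layers else 0
    cached_token_slots = global_layers * seq_len + local_layers * local_tokens
    return bytes_per_token_per_layer * cached_token_slots / 1e9


if __name__ == "__main__":
    cfg = dict(num_layers=48, num_kv_heads=8, head_dim=128)  # hypothetical model
    print(f"8K context, all global:   {kv_cache_gb(8_000, **cfg):.1f} GB")
    print(f"128K context, all global: {kv_cache_gb(128_000, **cfg):.1f} GB")
    print("128K context, 40 of 48 layers local (1,024-token window): "
          f"{kv_cache_gb(128_000, local_layers=40, window=1_024, **cfg):.1f} GB")
```

Under these assumptions the cache grows from about 1.6 GB at 8K tokens to about 25.2 GB at 128K tokens when every layer attends globally, and falls to roughly 4.4 GB when most layers keep only a short window.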

“The ability to process such large contexts opens up new possibilities for AI applications,” says one AI researcher. “We can now tackle problems that were previously intractable due to limitations in context size.”

Combined with its other features, this expanded context window positions Gemma 3 as a versatile tool for developers working on everything from document processing to creative writing applications.

Performance That Matters: How Gemma 3 Compares

Beating the Competition

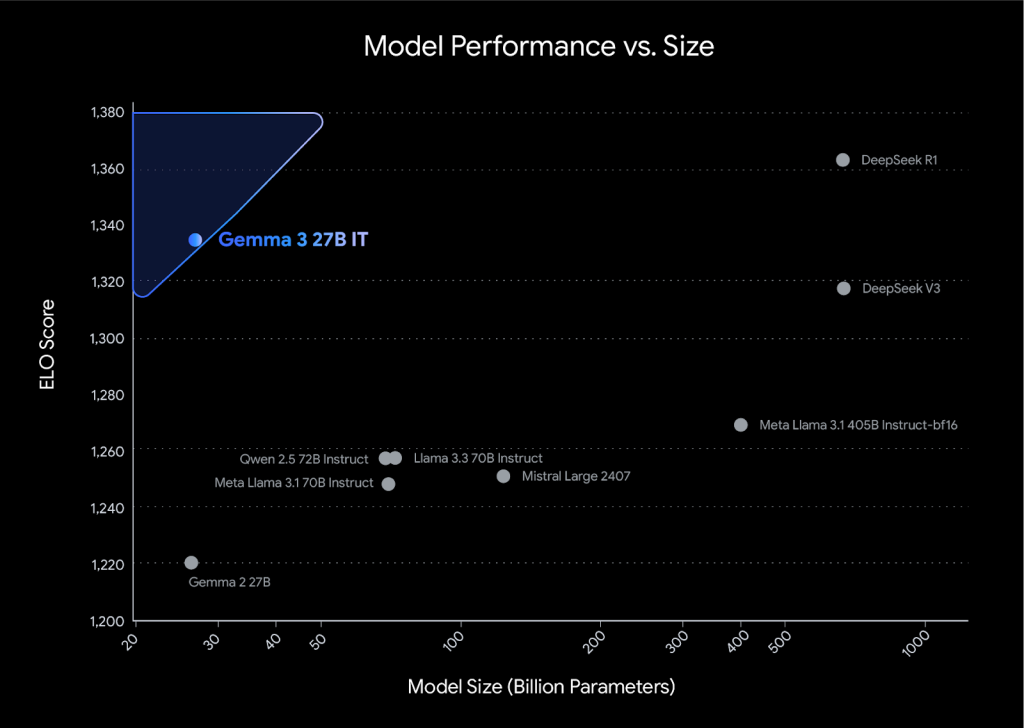

In the crowded field of large language models, Gemma 3 stands out with strong early results. According to Artificial Intelligence News, the model outperforms rivals such as Llama-405B, DeepSeek-V3, and o3-mini in human-preference evaluations.

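The Gemma 3 27B variant has posted particularly strong results on the Chatbot Arena leaderboard, which ranks models by Elo scores derived from blind, head-to-head comparisons voted on by human users. That suggests real-world usefulness beyond static benchmarks.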
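Differences in Elo-style ratings are easiest to read as expected win rates. Under the standard Elo model, a rating gap maps to a head-to-head win probability as sketched below. The ratings used are made-up round numbers, not actual leaderboard figures, and the leaderboard’s own fitting procedure may differ from classic incremental Elo updates.

```python
def elo_win_probability(rating_a: float, rating_b: float) -> float:
    """Expected probability that A is preferred over B under the Elo model.

    Uses the conventional 400-point logistic scale: a 400-point lead
    corresponds to 10-to-1 odds.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


if __name__ == "__main__":
    for gap in (0, 25, 100, 400):
        p = elo_win_probability(1300 + gap, 1300)
        print(f"lead of {gap:>3} points -> preferred {p:.1%} of the time")
```

On this scale a 100-point lead means being preferred roughly 64% of the time, so even modest gaps near the top of a leaderboard reflect a consistent preference.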

“The initial benchmark results are promising,” says Dr. Ben Carter, a data scientist specializing in natural language processing. “They indicate that Gemma 3 is a serious contender in the LLM space.”

This performance reflects Gemma 3’s optimized architecture and training process. Grouped-query attention, for example, reduces memory use during inference while keeping output quality close to that of standard multi-head attention.
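The sketch below shows the core idea of grouped-query attention for a single query position, in plain Python with toy dimensions. It is a simplified illustration, not Gemma 3’s code: there is no batching, masking, or positional encoding, and the head counts are hypothetical. The key line maps each query head to the shared key/value head of its group, so the cache holds `num_kv_heads` sets of keys and values instead of one set per query head.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def grouped_query_attention(queries, keys, values):
    """Attention output for one query position.

    queries: num_q_heads query vectors (length d each)
    keys, values: num_kv_heads lists, each holding seq_len vectors
    Every group of num_q_heads // num_kv_heads query heads shares one KV head.
    """
    num_q_heads, num_kv_heads = len(queries), len(keys)
    if num_q_heads % num_kv_heads != 0:
        raise ValueError("query heads must divide evenly into KV groups")
    group_size = num_q_heads // num_kv_heads
    outputs = []
    for h, q in enumerate(queries):
        kv = h // group_size  # index of the shared key/value head for this query head
        d = len(q)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys[kv]]
        weights = softmax(scores)
        dv = len(values[kv][0])
        outputs.append([sum(w * v[i] for w, v in zip(weights, values[kv])) for i in range(dv)])
    return outputs


if __name__ == "__main__":
    # 4 query heads share 2 KV heads (groups of 2); 3 cached tokens; d = 2.
    queries = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
    keys = [[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
            [[0.5, 0.5], [-1.0, 0.0], [0.0, -1.0]]]
    values = [[[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]],
              [[3.0, 0.0], [0.0, 3.0], [1.0, 1.0]]]
    for h, out in enumerate(grouped_query_attention(queries, keys, values)):
        print(f"query head {h} -> {[round(x, 3) for x in out]}")
```

Setting `num_kv_heads` equal to the number of query heads recovers ordinary multi-head attention; setting it to 1 gives multi-query attention. Grouped-query attention sits between the two.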

Quantization: Doing More with Less

One of Gemma 3’s standout features, as reported by Artificial Intelligence News, is the availability of official quantized versions. Quantization stores model weights at lower numerical precision (for example, 8-bit or 4-bit integers instead of 16-bit floating-point numbers), dramatically shrinking the model’s size and computational requirements without significant accuracy loss.
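As a minimal sketch of the idea, the code below applies symmetric, per-tensor 8-bit quantization to a short list of weights in plain Python. This is a generic textbook scheme chosen for illustration; Google’s official quantized checkpoints may use different bit widths, per-channel or per-block scales, or quantization-aware training.

```python
from __future__ import annotations


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] with one shared scale (w ~ scale * q)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the stored integers."""
    return [scale * x for x in q]


if __name__ == "__main__":
    weights = [0.42, -1.27, 0.003, 0.918, -0.5]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    print("int8 values:", q)
    print("max abs error:", max(abs(a - b) for a, b in zip(weights, restored)))
    # Each weight now needs 1 byte instead of 2 (bf16) or 4 (fp32), plus one shared scale.
```

Rounding to the nearest integer step bounds each weight’s error by half a step (`scale / 2`), which is why accuracy usually degrades only slightly; fewer bits mean larger steps and larger errors.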

“Quantization is a crucial step towards democratizing access to powerful AI models,” notes an AI engineer. “It allows us to bring AI to devices and applications where it was previously impossible.”

By offering these quantized variants, Gemma 3 lets developers choose the optimal balance between performance and efficiency for their specific needs and constraints.

Small Models, Big Impact: The Rise of Efficient AI

Shifting Focus to Efficiency

While massive language models have captured headlines, a meaningful shift toward smaller, more efficient models is underway. Gemma 3 joins this movement alongside Microsoft’s Phi-4 and Mistral Small 3.

Interest in these smaller language models has surged since Gemma’s initial February 2024 release, reflecting growing recognition of their potential. This trend is making AI technology accessible to organizations and developers who lack massive infrastructure.

The efficiency focus also enables AI deployment in resource-constrained environments like smartphones and embedded systems, bringing real-time AI processing to the edge without cloud dependencies.

The Two Paths to Smaller Models

Two primary approaches drive the development of these smaller models: knowledge distillation and native small language model design.

Knowledge distillation trains a compact “student” model to emulate a larger “teacher,” while native designs optimize for efficiency from inception.
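The distillation objective can be sketched with the classic soft-target loss of Hinton et al. (2015): the student is trained to match the teacher’s temperature-softened output distribution. This is a generic illustration with made-up logits; the article does not say how any particular small model, Gemma included, was distilled, and in practice this term is usually combined with a standard loss on the true labels.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; higher temperatures give softer distributions."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: list[float], teacher_logits: list[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by temperature**2."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's predictions
    kl = sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q) if pi > 0)
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [4.0, 1.5, 0.2]
    print(distillation_loss([4.0, 1.5, 0.2], teacher))  # student matches teacher
    print(distillation_loss([0.2, 1.5, 4.0], teacher))  # student disagrees
```

Softening with a temperature above 1 exposes how the teacher ranks the wrong answers too, which carries more information for the student than a single hard label.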

“Native SLMs represent a promising direction for the future of AI,” says an AI architect. “They allow us to optimize for efficiency from the ground up, rather than simply compressing existing models.”

Both approaches offer unique advantages, and ongoing research in both areas is rapidly expanding the capabilities of smaller models.

Developer-Friendly Integration: Using Gemma 3

Working With What You Know

Gemma 3 prioritizes compatibility with the existing AI development ecosystem. The official documentation covers its support for combined image and text input and its integrations with widely used frameworks, so developers can reuse their existing skills and workflows.

“The seamless integration is a huge win for developers,” says Maria Rodriguez, a software engineer. “It allows us to quickly incorporate Gemma 3 into our existing projects without having to learn new tools or rewrite our code.”

This interoperability extends to hardware as well, with support for NVIDIA GPUs, AMD GPUs via ROCm, and CPU execution through gemma.cpp, giving developers flexible deployment options.

Multiple Ways to Access

Google provides several platforms for accessing Gemma 3, meeting diverse developer needs. According to the official documentation, options include Google AI Studio, Hugging Face, and Kaggle.

Google AI Studio offers a web-based development environment, while Hugging Face provides access through its popular platform for open-source AI models. Kaggle makes Gemma 3 available to its data science community.

“The multiple access points make it easy for anyone to get started with Gemma 3,” says David Lee, a data science student. “I can choose the platform I’m most comfortable with and start experimenting right away.”

Safety First: Responsible AI Development

Building Safety Into the Core

Gemma 3’s development incorporated extensive data governance and alignment with responsible AI principles. Google implemented various safeguards to mitigate potential risks associated with large language models.

“The open-source approach is crucial for ensuring the responsible development of AI,” says Dr. Emily Chen, an AI ethicist. “It allows for greater transparency and accountability.”

The development process included techniques like reinforcement learning from human feedback to reduce the risk of harmful or inappropriate content generation.
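The article does not detail Google’s alignment pipeline. As a generic illustration of one common RLHF ingredient, the sketch below shows the pairwise loss often used to train a reward model on human comparisons: the reward model is penalized when it scores the response people preferred below the one they rejected. The reward values are hypothetical numbers.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise (Bradley-Terry) reward-model loss: -log(sigmoid(r_chosen - r_rejected)).

    Computed in a numerically stable form that avoids overflow for large margins.
    """
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    print(preference_loss(2.0, -1.0))  # preferred answer scored higher: small loss
    print(preference_loss(0.0, 0.0))   # tied scores: loss equals log(2)
    print(preference_loss(-1.0, 2.0))  # preferred answer scored lower: large loss
```

Once trained, a reward model like this scores candidate outputs, and a reinforcement-learning step nudges the language model toward responses that score higher.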

ShieldGemma 2: Protecting Visual Content

Alongside Gemma 3, Google released ShieldGemma 2, a specialized 4-billion-parameter image safety classifier. The Gemma documentation explains how this dedicated tool identifies and flags potentially harmful image content.

Developers can use ShieldGemma 2 to screen images within their applications, moderating visual content and creating safer user experiences.

“ShieldGemma 2 is an important step towards creating a safer and more trustworthy AI ecosystem,” notes an AI ethicist. “It addresses a critical need for content moderation in the age of increasingly sophisticated AI-generated images.”

The Future Landscape: Where AI Is Heading

Gemma 3’s Broader Impact

Google’s Gemma 3 is likely to have a significant influence on the AI landscape, particularly by accelerating the adoption of more efficient models. Its open-source nature fosters collaboration and innovation across the AI community.

The model’s multimodal capabilities and expanded context window enable more sophisticated applications. Combined with the continued growth of the generative AI market, these features place Gemma 3 at the forefront of efforts to democratize AI.

The Specialization Trend

Beyond the shift toward smaller models, AI is moving toward greater specialization. This approach optimizes models for specific applications rather than generic use cases.

Specialized models often achieve better results in their target domains, powered by domain-specific datasets and fine-tuning techniques.

“The future of AI is likely to be characterized by a diverse ecosystem of specialized models,” predicts an AI strategist. “We’ll see models that are tailored to specific industries, tasks, and even individual users.”

The Road Ahead

Gemma 3 represents a significant milestone in AI’s evolution, but it’s just one step in an ongoing journey. The field continues to advance rapidly, with open-source models like Gemma 3 playing a crucial role in democratizing access to powerful AI capabilities.

As these technologies continue to develop, they’ll increasingly transform how we work, create, and solve problems across virtually every domain.

Content disclosure: This article was generated with AI assistance using verified data from AI-Buzz's database. All metrics are sourced from public APIs (GitHub, npm, PyPI, Hacker News) and verified through our methodology.
