DeepSeek-OCR 2 Beats Gemini Pro for Document AI Parsing

Data as of February 2, 2026

Chinese AI firm DeepSeek has introduced DeepSeek-OCR 2, a vision-language model for document understanding. By redesigning how visual information is processed, the model cuts the number of visual tokens by roughly 80% compared with similar systems. According to the company's research paper, this efficiency lets the model outperform competitors such as Google’s Gemini 3 Pro on key document parsing benchmarks when operating with a comparable token budget, a result that signals a notable shift in the Document AI landscape.

The core innovation is a new vision encoder, DeepEncoder V2, which moves away from rigid grid-based image analysis to a dynamic, content-aware approach that mimics human visual processing. This allows the model to intelligently determine the logical reading order of complex documents before interpretation. The release, which includes publicly available code and model weights, positions the company as a formidable competitor in the specialized field of multimodal document intelligence.

Key Points

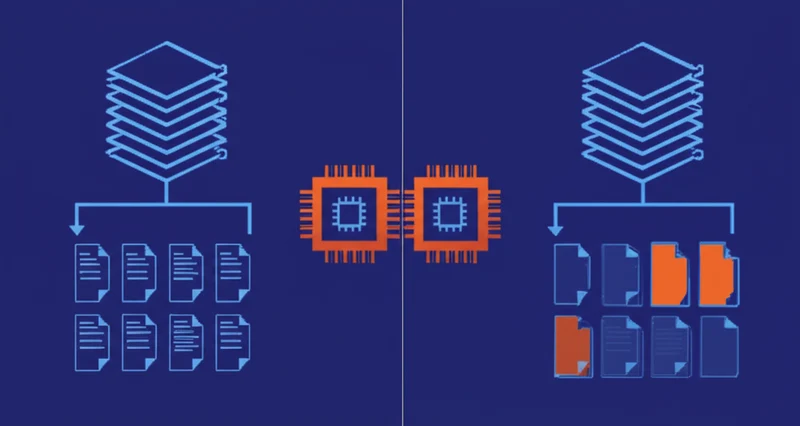

- DeepSeek-OCR 2’s new architecture reduces visual tokens by 80% or more, from 6,000-7,000 to between 256 and 1,120.

- The model achieved a 91.09% score on the OmniDocBench v1.5 benchmark, outperforming Google’s Gemini 3 Pro on document parsing tasks.

- Its DeepEncoder V2 uses “causal flow tokens” to dynamically rearrange visual information based on a document’s logical layout.

- The model and code have been open-sourced on GitHub and Hugging Face, promoting wider adoption and development.

Breaking the Grid: From Pixels to Logic

The technical advancement behind DeepSeek-OCR 2 lies in its DeepEncoder V2, a new vision encoder architecture that addresses a fundamental inefficiency in traditional models. Most vision-language systems use a “raster scanning” method, slicing an image into a grid of patches and processing them in a fixed left-to-right, top-to-bottom order. As reporting from The Decoder highlights, this rigid process is computationally expensive and fails to capture the logical flow of information in documents with complex layouts like forms or multi-column articles.

DeepEncoder V2, based on a compact 0.5B-parameter Qwen2 language model, introduces a two-stage process. First, special “causal flow tokens” with access to all image information determine the most logical reading order based on content. Second, a downstream language model reasons over this pre-sorted sequence. The token efficiency comes from passing only the rearranged causal flow tokens, not the much larger set of original visual tokens, to the decoder.
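One way to picture the first stage is as an attention mask. The sketch below is a toy illustration, not DeepSeek's implementation. It assumes the visual tokens come first in the sequence and attend to each other freely, while each causal flow token sees every visual token plus the flow tokens that precede it. The function name `build_flow_mask` and the token counts are hypothetical.

```python
def build_flow_mask(num_visual: int, num_flow: int) -> list[list[bool]]:
    """Return mask[i][j] == True when token i may attend to token j.

    Assumed layout (for illustration only): visual tokens occupy
    positions 0..num_visual-1, flow tokens follow them.
    """
    total = num_visual + num_flow
    mask = [[False] * total for _ in range(total)]
    for i in range(total):
        for j in range(total):
            if j < num_visual:
                # Every token, visual or flow, can see all visual tokens.
                mask[i][j] = True
            elif i >= num_visual:
                # Flow tokens see themselves and earlier flow tokens only.
                mask[i][j] = j <= i
            # Visual tokens never attend to flow tokens (stays False).
    return mask


NUM_VISUAL, NUM_FLOW = 12, 4  # toy sizes, far smaller than a real page
mask = build_flow_mask(NUM_VISUAL, NUM_FLOW)

# Print the mask as rows of 1/0 so the two attention regimes are visible.
for row in mask:
    print("".join("1" if allowed else "0" for allowed in row))
```

In this toy setup, only the 4 flow-token positions would be handed to the decoder, rather than all 12 visual tokens.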

This reduces the token count from a typical 6,000-7,000 to just 256-1,120, a reduction of 80% or more.
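The "80% or more" figure can be checked directly from the token ranges quoted above. The short calculation below uses only those four numbers; the helper name `reduction_pct` is introduced here for illustration.

```python
def reduction_pct(before: int, after: int) -> float:
    """Percentage of tokens removed when going from `before` to `after`."""
    return 100.0 * (before - after) / before


baseline_tokens = (6000, 7000)    # typical range quoted in the article
compressed_tokens = (256, 1120)   # DeepSeek-OCR 2 range quoted in the article

# Evaluate every combination of baseline and compressed token counts.
results = {
    (b, c): reduction_pct(b, c)
    for b in baseline_tokens
    for c in compressed_tokens
}

for (b, c), pct in results.items():
    print(f"{b} -> {c}: {pct:.1f}% fewer tokens")
```

The smallest reduction across these combinations is about 81% (6,000 to 1,120) and the largest about 96% (7,000 to 256), which is consistent with the article's claim.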

Dethroning Gemini: The Benchmark Battle

The model’s efficiency does not compromise its performance; instead, it establishes a new state-of-the-art on a comprehensive document understanding benchmark. On OmniDocBench v1.5, which covers 1,355 pages across nine categories, DeepSeek-OCR 2 achieved an overall score of 91.09%. This represents a 3.73 percentage point improvement over its predecessor, with notable gains in tasks requiring correct reading order.

The research paper makes a significant claim: on document parsing tasks, DeepSeek-OCR 2 outperforms Gemini 3 Pro when operating with a comparable token budget. This positions the model as a strong alternative to Gemini 3 Pro for document AI applications. Practical improvements are also evident: the text repetition rate falls from 6.25% to 4.17% when the model is used as an OCR backend. However, the researchers report that the model performs worse than its predecessor on newspaper documents.

They attribute this to the lower token limit being insufficient for extremely dense pages and limited training data for that specific category, highlighting an area for future work.
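Two derived numbers follow from the benchmark figures in this section: the predecessor's implied OmniDocBench score and the relative drop in text repetition. The arithmetic below uses only the values reported above; the variable names are illustrative.

```python
# Figures quoted in the article.
new_score = 91.09          # OmniDocBench v1.5 overall score, percent
improvement_points = 3.73  # gain over the predecessor, percentage points
repetition_before = 6.25   # text repetition rate with the predecessor, percent
repetition_after = 4.17    # text repetition rate with DeepSeek-OCR 2, percent

# Predecessor score implied by the reported gain.
implied_predecessor_score = round(new_score - improvement_points, 2)

# Relative reduction in repetition, as a share of the earlier rate.
relative_repetition_drop = round(
    100.0 * (repetition_before - repetition_after) / repetition_before, 1
)

print(f"Implied predecessor score: {implied_predecessor_score}%")
print(f"Relative drop in repetition rate: {relative_repetition_drop}%")
```

The repetition rate falls by 2.08 percentage points in absolute terms, which works out to roughly a one-third relative reduction.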

Beyond Text: Documents as Intelligence

The release of DeepSeek-OCR 2 is part of a broader industry evolution from simple Optical Character Recognition (OCR) to more sophisticated Intelligent Document Processing (IDP). According to an analysis by Hyper.AI, the focus is shifting from pure recognition accuracy to solving complex layout, multimodal symbol, and long-context understanding challenges. DeepSeek’s ability to parse reading order based on visual semantics places it at the forefront of this trend, and its first-generation system could already process up to 33 million pages per day, indicating high scalability.

By making its technology publicly available, DeepSeek is employing an open-source strategy to drive adoption and establish its architecture as a potential standard. This move also underscores the growing competitiveness of Chinese AI labs. As Hyper.AI notes, firms like DeepSeek, Baidu, and Tencent are increasingly challenging established players, which is consistent with a broader trend where, according to the South China Morning Post, Chinese companies are narrowing the AI development gap. The architecture also points toward a unified multimodal encoder, where the same framework could process text, speech, and images by adapting only the query tokens for each modality.

This represents a foundational step toward more versatile and powerful AI systems.

Token Economy: Efficiency Redefines AI

DeepSeek-OCR 2’s introduction demonstrates a significant technical advancement in document AI. Its causal flow architecture delivers substantial efficiency gains without sacrificing performance, enabling it to surpass established models in specific, high-value tasks. The open-source release further accelerates innovation in a field moving rapidly beyond simple text extraction toward genuine document comprehension.

This development is a clear indicator that architectural innovation, particularly in token efficiency, remains a critical frontier in AI. As models become more adept at understanding content structure before processing it, how will this change the economics of training on vast, unstructured datasets and the types of problems we can solve at scale?

About this analysis: Written with AI assistance using AI-Buzz's proprietary database of developer adoption signals. Metrics sourced from npm, PyPI, GitHub, and Hacker News APIs.

Data as of March 21, 2026.
