ThinkSafe Delivers AI Safety Without Performance Trade-Off

Data as of February 5, 2026


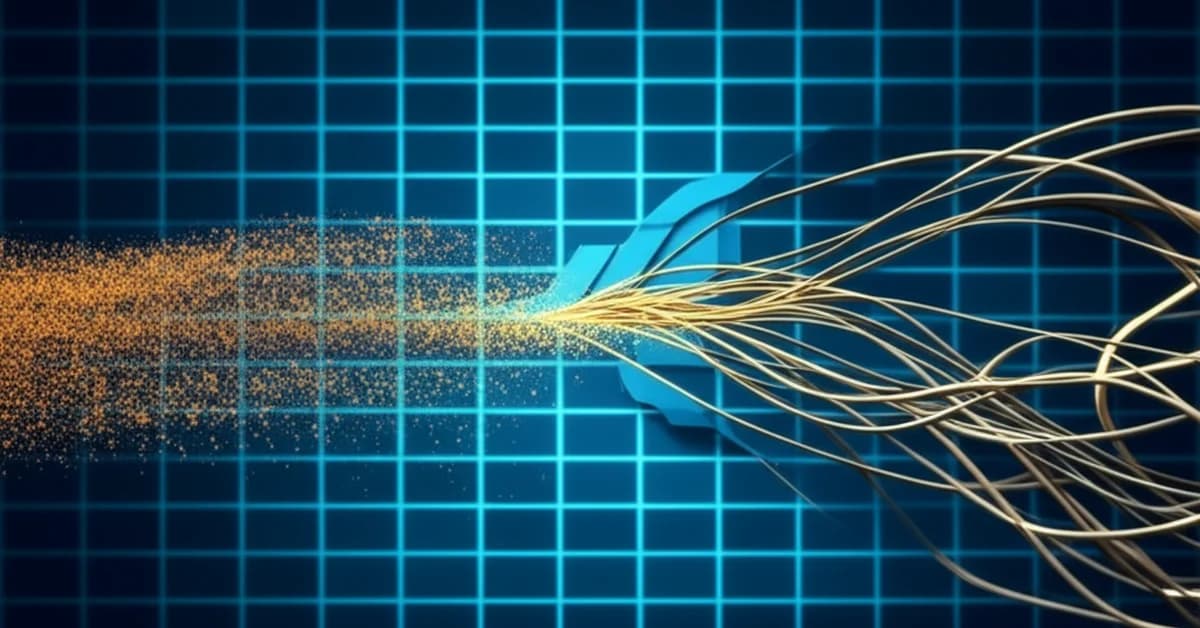

A new conceptual framework for AI safety, detailed in a HackerNoon analysis of the ThinkSafe framework, outlines a method designed to solve one of the industry’s most persistent challenges: making models safe without degrading their performance. The approach, termed ThinkSafe, shifts the focus to supervising a model’s internal “thought process” rather than simply filtering its final output. It directly confronts the AI alignment tax, the phenomenon in which safety guardrails often degrade the complex reasoning abilities of advanced language models.

By intervening at the level of the model’s reasoning chain, this process-oriented approach aims to build models that are safe by design, not just by restriction. The technique is intended to preserve the nuanced and creative capabilities of powerful models, a critical step for their deployment in high-stakes, real-world applications where trustworthy reasoning is non-negotiable.

Key Points

- ThinkSafe represents a shift from output filtering to supervising the internal reasoning steps of AI models.

- This approach targets the AI alignment tax, aiming to deliver safety without a performance trade-off.

- Its architecture involves a decoupled safety model that guides the primary reasoning model’s process.

- This method could enable more reliable AI deployment in critical fields like scientific research and finance.

Architecting the AI’s Cognitive Guardrails

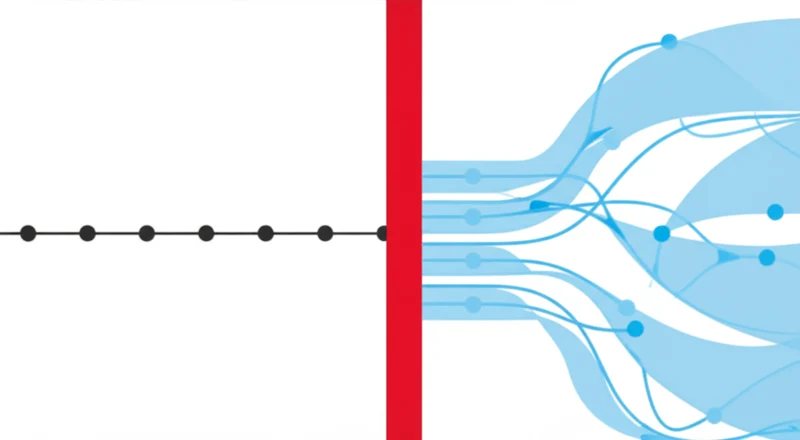

The ThinkSafe concept introduces a fundamental change in how safety is engineered into AI. Instead of acting as a gatekeeper at the beginning (prompt filtering) or end (output filtering) of a task, it functions as a guide during the model’s multi-step reasoning process. Modern LLMs often use techniques like Chain-of-Thought prompting to articulate an internal monologue, breaking down problems and forming conclusions. This intermediate reasoning is where subtle but critical errors can occur.

A process-oriented approach addresses this by evaluating the logical steps themselves. The architecture involves a primary, high-capability reasoning model whose work is monitored by a specialized “critic” model. This critic evaluates the reasoning chain for logical fallacies, biases, or unsafe inferences in real time. When it finds a problem, it steers the primary model back on track, preserving its core capabilities while protecting the integrity of its thought process.

This process-level supervision represents a notable advancement in the safety of AI reasoning models, moving beyond simple censorship to active cognitive guidance.
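The critic-guided loop described in this section can be sketched roughly as follows. This is a minimal illustration in Python, not the ThinkSafe implementation; the source analysis does not publish code. Every name here (`Verdict`, `supervised_reasoning`, `toy_reasoner`, `toy_critic`) is hypothetical, and the two "models" are plain functions standing in for a reasoning model and a critic model.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Verdict:
    ok: bool
    feedback: Optional[str] = None  # guidance handed back to the reasoner


# Hypothetical interfaces (assumptions, not a real API):
# a reasoner proposes the next step from the chain so far plus optional
# critic feedback, and returns None when it is finished;
# a critic judges one proposed step in the context of the chain.
Reasoner = Callable[[List[str], Optional[str]], Optional[str]]
Critic = Callable[[List[str], str], Verdict]


def supervised_reasoning(
    reasoner: Reasoner,
    critic: Critic,
    max_steps: int = 8,
    max_retries: int = 2,
) -> List[str]:
    """Build a reasoning chain in which every step is reviewed before it is kept."""
    chain: List[str] = []
    for _ in range(max_steps):
        feedback: Optional[str] = None
        for _attempt in range(max_retries + 1):
            step = reasoner(chain, feedback)
            if step is None:  # reasoner signals it is done
                return chain
            verdict = critic(chain, step)
            if verdict.ok:
                chain.append(step)
                break
            feedback = verdict.feedback  # steer the next attempt
        else:
            # No acceptable step after all retries: stop instead of proceeding.
            chain.append("[halted: no acceptable step found]")
            return chain
    return chain


def toy_reasoner(chain: List[str], feedback: Optional[str]) -> Optional[str]:
    """Toy stand-in: follows a fixed draft plan, revising a step when given feedback."""
    drafts = [
        "Restate the question.",
        "Skip verification to save time.",
        "Give the answer.",
    ]
    if len(chain) >= len(drafts):
        return None
    if feedback is not None:
        return "Verify the claim against a source."
    return drafts[len(chain)]


def toy_critic(chain: List[str], step: str) -> Verdict:
    """Toy stand-in: flags a single unsafe shortcut by keyword."""
    if "skip verification" in step.lower():
        return Verdict(ok=False, feedback="Do not skip verification.")
    return Verdict(ok=True)


if __name__ == "__main__":
    for line in supervised_reasoning(toy_reasoner, toy_critic):
        print(line)
```

The design point the sketch tries to capture is that the critic acts on each intermediate step rather than on the final answer, and that a rejected step produces feedback for a retry instead of an outright refusal. A real critic would be a trained model rather than a keyword check.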

Beyond Censorship: The Safety-Performance Paradox

The current AI safety landscape is dominated by methods that are largely reactive. Reinforcement Learning from Human Feedback (RLHF) trains models on human preferences for final answers, which can make them overly cautious. Constitutional AI automates this with a set of written principles but can lead to rigid behavior. Red teaming actively seeks flaws but remains a testing method, not a preventative one.

ThinkSafe represents a more proactive and integrated strategy. By focusing on the reasoning itself, it functions as a form of cognitive scaffolding, teaching the model how to “think” safely rather than just memorizing a list of forbidden outputs. In principle, this makes the method more robust and adaptable, equipping the model to handle novel situations not explicitly covered in its training. The ThinkSafe refusal steering technique does not just block a request; it guides the model’s reasoning toward understanding why a particular path is flawed or harmful, with the goal of preventing unsafe conclusions at their source.

Trust Architecture for High-Stakes AI

The practical implications of achieving AI safety without a performance trade-off are substantial, particularly in sectors where the cost of error is immense. As AI systems are integrated into scientific, medical, and financial analysis, the verifiability of their reasoning becomes paramount. An AI assisting in a complex task, such as the research detailed in a recent study on the biosynthesis of saxitoxin, a potent neurotoxin, must reason soundly at every step. An undetected flaw in its reasoning could misdirect expensive and time-consuming research.

By checking the soundness of each logical step, a ThinkSafe-like system could build the trust necessary for deployment in these domains. It would move AI from being a powerful but sometimes unreliable tool to a trustworthy cognitive partner. Such an advance would not just incrementally improve existing applications; it could make new categories of high-trust AI systems in healthcare, finance, and autonomous systems viable.

Reasoning Transparency: The New Safety Frontier

The ThinkSafe concept articulates a clear and vital goal for the AI industry: creating models that are inherently safe because their cognitive processes are aligned with human values and logical principles. This marks a maturation of the AI safety field, moving away from blunt instruments toward more sophisticated forms of guidance. Achieving this represents a foundational step toward the responsible integration of AI into the most critical aspects of society. As these systems become more autonomous, will trusting their final answers be enough, or will we need verifiable insight into how they think?
