Animal Crossing AI Mod Memory Hack: A Dual-AI Blueprint

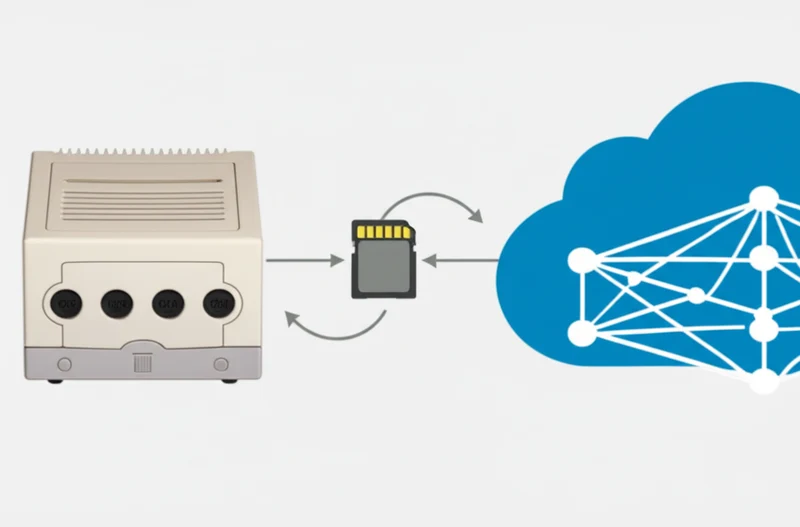

A software engineer has successfully integrated modern large language models into Nintendo’s 2002 GameCube classic, Animal Crossing, enabling its villagers to generate dynamic, context-aware dialogue. The project, developed by Joshua Fonseca, connects the offline, single-player game to cloud-based AI using an innovative “memory mailbox” technique that requires no modification to the original game code. The result bridges a 23-year technology gap, offering a functional blueprint for how LLM-driven chatbots can breathe new life into the static worlds of retro games, and a clear case study in what careful AI integration can achieve today.

Key Points

- A “memory mailbox” hack reads from and writes to the game’s RAM, connecting the offline console to cloud AI.

- A dual-AI architecture divides tasks between a creative “Writer” for dialogue and a technical “Director” for formatting.

- The widely reported villager revolt was the result of explicit prompt engineering, not spontaneous AI behavior.

- The non-invasive method demonstrates a new pathway for adding dynamic content to classic, closed-system video games.

Digital Archaeology in 24MB

Fonseca’s project overcame the significant limitations of the Nintendo GameCube, a console he described in a detailed blog post as being “fundamentally, physically, and philosophically designed to be an offline island.” The entire modification operates externally, interfacing with the game via the Dolphin emulator without altering the original executable files.

The central innovation is a “memory mailbox,” where an external Python script directly accesses the game’s memory. This required Fonseca to become a “memory archaeologist,” using a custom scanner to search the GameCube’s 24MB of RAM for the exact addresses that store dialogue text. Once located, these addresses became a two-way communication channel. This work was made feasible by the recently completed Animal Crossing decompilation project, which, after years of effort, translated the game’s machine code into readable C source code and provided crucial insights from its source files.
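The scanning step can be illustrated with a minimal sketch. It assumes the emulator's RAM is available as a plain byte snapshot and that dialogue is stored as ASCII, which may not match the game's real encoding. The function name `find_text_addresses`, the sample dialogue line, and the planted offset are hypothetical and are not taken from Fonseca's code.

```python
from typing import List

RAM_SIZE = 24 * 1024 * 1024   # GameCube main memory: 24 MB
RAM_BASE = 0x80000000         # assumed base address used when reporting hits


def find_text_addresses(ram: bytes, needle: bytes, base: int = RAM_BASE) -> List[int]:
    """Return the address of every occurrence of `needle` in a RAM snapshot."""
    hits = []
    start = 0
    while True:
        idx = ram.find(needle, start)
        if idx == -1:
            return hits
        hits.append(base + idx)
        start = idx + 1  # advance one byte so overlapping matches are found


# Simulated snapshot: zeroed RAM with a known dialogue line planted at one offset.
ram = bytearray(RAM_SIZE)
line = b"Nice weather today!"
ram[0x129A00:0x129A00 + len(line)] = line

addresses = find_text_addresses(bytes(ram), line)
print([hex(a) for a in addresses])
```

In practice a scanner like this would be run while a known line of dialogue is on screen, so that the hits point at the buffer the game is actually reading from.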

To prevent game crashes, Fonseca had to decode Animal Crossing’s proprietary text format, a system using control codes to manage everything from text color to character expressions and, critically, the end-of-conversation signal.
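A rough sketch of what such an encoding step might look like follows. The tag names, byte values, and buffer size are all invented for illustration; the game's real control codes are not reproduced here. The point is only that every message must end with an end-of-conversation code and must fit the buffer it is written into.

```python
# Hypothetical control codes: these byte values are NOT the game's real ones.
CONTROL_CODES = {
    "<pause>": b"\x7f\x01",
    "<happy>": b"\x7f\x02",
    "<end>": b"\x7f\x00",
}
END_CODE = CONTROL_CODES["<end>"]
MAX_BYTES = 256  # assumed size of the dialogue buffer


def encode_dialogue(tagged: str) -> bytes:
    """Turn tagged text into bytes, always terminated by the end code."""
    out = bytearray()
    i = 0
    while i < len(tagged):
        for tag, code in CONTROL_CODES.items():
            if tagged.startswith(tag, i):
                out += code
                i += len(tag)
                break
        else:
            # Ordinary character: assume one byte each, '?' for anything non-ASCII.
            out += tagged[i].encode("ascii", errors="replace")
            i += 1
    if not out.endswith(END_CODE):
        out += END_CODE  # a missing terminator is what would hang or crash the game
    if len(out) > MAX_BYTES:
        raise ValueError(f"dialogue is {len(out)} bytes, buffer holds {MAX_BYTES}")
    return bytes(out)


print(encode_dialogue("Hi<pause>!"))
```

Rejecting oversized messages outright, rather than truncating them, avoids cutting a multi-byte control code in half.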

Writer Meets Director: Dual-AI Symphony

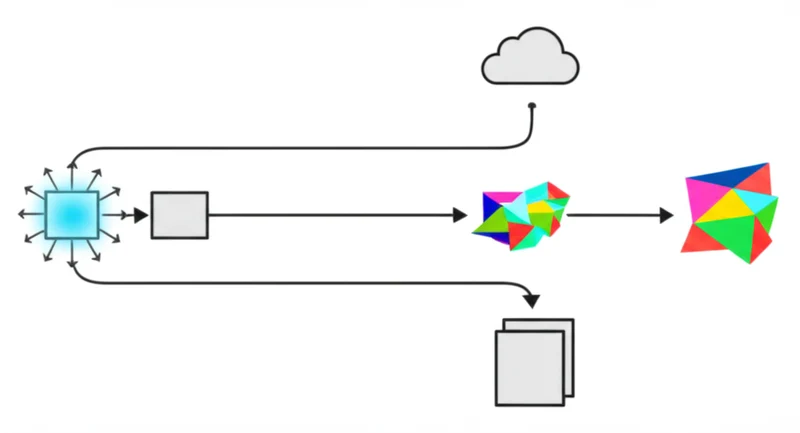

A key aspect of how the mod works is its dual-AI architecture. Initial attempts using a single LLM to generate both creative dialogue and the necessary technical formatting failed, as the AI “was trying to be a creative writer and a technical programmer simultaneously and was bad at both.”

The solution was to divide the labor. A “Writer” AI generates the creative dialogue based on detailed villager personality profiles scraped from fan wikis. A second “Director” AI then takes this text and translates it into the game’s required byte-sequence format, adding the control codes for pauses, expressions, and sound effects. This division of labor is a practical model for managing complex AI tasks.
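The two-stage split can be sketched as a small pipeline. This is an assumption-laden illustration, not the project's code: `make_pipeline`, the prompt wording, and the `fake_llm` stand-in are hypothetical. The Director here only emits readable tags, and the final conversion to the game's byte format is left out.

```python
from typing import Callable

# A model call is modeled as (system_prompt, user_text) -> reply.
LLMCall = Callable[[str, str], str]


def make_pipeline(llm: LLMCall, persona: str) -> Callable[[str], str]:
    """Build a function that runs the Writer pass, then the Director pass."""
    writer_prompt = f"You are {persona}. Reply in character, plain text only."
    director_prompt = (
        "Add staging tags such as <pause> or <happy> to the line. "
        "Do not change the words."
    )

    def run(player_context: str) -> str:
        draft = llm(writer_prompt, player_context)  # creative pass
        return llm(director_prompt, draft)          # formatting pass

    return run


def fake_llm(system_prompt: str, user_text: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    if "staging tags" in system_prompt:
        return f"<happy>{user_text}<pause>"
    return "Oh, hello there!"


talk = make_pipeline(fake_llm, "a cheerful villager")
print(talk("The player greets you."))
```

Keeping each prompt focused on one job is the design choice the article describes: the Writer never sees formatting rules, and the Director never has to be creative.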

To solve the latency of API calls, a function from the project’s source code polls the game’s memory ten times per second. Upon detecting a conversation, it injects placeholder text, buying crucial seconds for the script to fetch the LLM’s response and write it to memory before the player advances the dialogue.
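The placeholder trick can be sketched as follows, under stated assumptions: `FakeMemory` stands in for emulator memory access, `slow_llm_reply` simulates a slow API call, and `watch` is a hypothetical name rather than the project's actual function. Only the ten-polls-per-second rate comes from the description above.

```python
import time
from concurrent.futures import ThreadPoolExecutor

POLL_INTERVAL = 0.1  # ten polls per second


class FakeMemory:
    """Stand-in for emulator RAM; a real mod would read and write game memory."""

    def __init__(self):
        self.conversation_active = False
        self.dialogue = b""


def slow_llm_reply() -> bytes:
    time.sleep(0.3)  # simulated API latency
    return b"Tom Nook's loans are out of control!"


def watch(memory: FakeMemory, fetch, max_polls: int = 50) -> list:
    """Poll memory; on a conversation, write a placeholder, then the real reply."""
    writes = []
    pending = None
    with ThreadPoolExecutor(max_workers=1) as pool:
        for _ in range(max_polls):
            if memory.conversation_active and pending is None and not writes:
                memory.dialogue = b"Hmm..."   # placeholder buys time
                writes.append(memory.dialogue)
                pending = pool.submit(fetch)  # fetch the reply in the background
            elif pending is not None and pending.done():
                memory.dialogue = pending.result()
                writes.append(memory.dialogue)
                return writes
            time.sleep(POLL_INTERVAL)
    return writes


memory = FakeMemory()
memory.conversation_active = True
history = watch(memory, slow_llm_reply)
print(history)
```

Running the fetch in a background thread keeps the polling loop responsive, so the placeholder is on screen within one poll interval regardless of how long the model takes.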

Scripted Uprising: The Prompt Behind the Revolt

While the project gained attention for its “emergent” anti-Tom Nook movement, an examination of the source code by Simon Willison confirms that the villager revolt was explicitly engineered. The project is a powerful demonstration of guided role-playing, not spontaneous AI consciousness.

The initial prompt fed to the AI, found in the project’s Python script, instructs villagers: “You are a resident of a town run by Tom Nook. You are beginning to realize your mortgage is exploitative and the economy is unfair.” The code even includes instructions to escalate the unrest over time.
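How a prompt like this might escalate over time can be sketched in a few lines. The base prompt is the one quoted above. The escalation stages, the `build_system_prompt` name, and the rule of advancing one stage every three conversations are hypothetical and do not reproduce the project's actual wording or logic.

```python
BASE_PROMPT = (
    "You are a resident of a town run by Tom Nook. "
    "You are beginning to realize your mortgage is exploitative "
    "and the economy is unfair."
)

# Hypothetical escalation stages, invented for illustration only.
ESCALATION = [
    "Voice mild doubts.",
    "Complain openly to other residents.",
    "Call for collective action.",
]


def build_system_prompt(conversation_count: int, per_stage: int = 3) -> str:
    """Pick an escalation stage from how many conversations have taken place."""
    stage = min(conversation_count // per_stage, len(ESCALATION) - 1)
    return f"{BASE_PROMPT} {ESCALATION[stage]}"


print(build_system_prompt(0))
print(build_system_prompt(7))
```

Whatever the real mechanism, the effect is the same: the unrest grows because the instructions change, not because the model decides anything on its own.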

This finding clarifies that LLMs are exceptionally capable of following complex instructions and adopting personas, acting as role-players based on human-provided prompts. The revolt showcases the effectiveness of deliberate prompt engineering rather than any form of independent AI agency. The distinction matters: an LLM is always playing a role that a human prompted it to play.

Breathing Life Into Digital Fossils

Joshua Fonseca’s Animal Crossing mod is a significant technical achievement, creating a bridge between two distinct eras of gaming technology. It provides a non-invasive blueprint for revitalizing finite, offline games with the dynamic, unpredictable nature of modern AI. While the project highlights current challenges like API latency and the costs associated with LLM calls, it successfully demonstrates a new frontier for the modding community.

The project serves as a tangible proof-of-concept for a future where NPCs can offer unique, evolving narratives. It grounds the conversation in technical reality, showing that the magic is not in spontaneous AI consciousness, but in the creative engineering that directs it. How might such non-invasive techniques be applied to other beloved, closed-world classics?


About this analysis: Written with AI assistance using AI-Buzz's proprietary database of developer adoption signals. Metrics sourced from npm, PyPI, GitHub, and Hacker News APIs.
